SFTP Stream Reader

The SFTP Stream Reader component reads data from an SFTP server. It authenticates with the server using either a username and password or SSH key-based authentication.


All component configurations are classified broadly into the following sections:

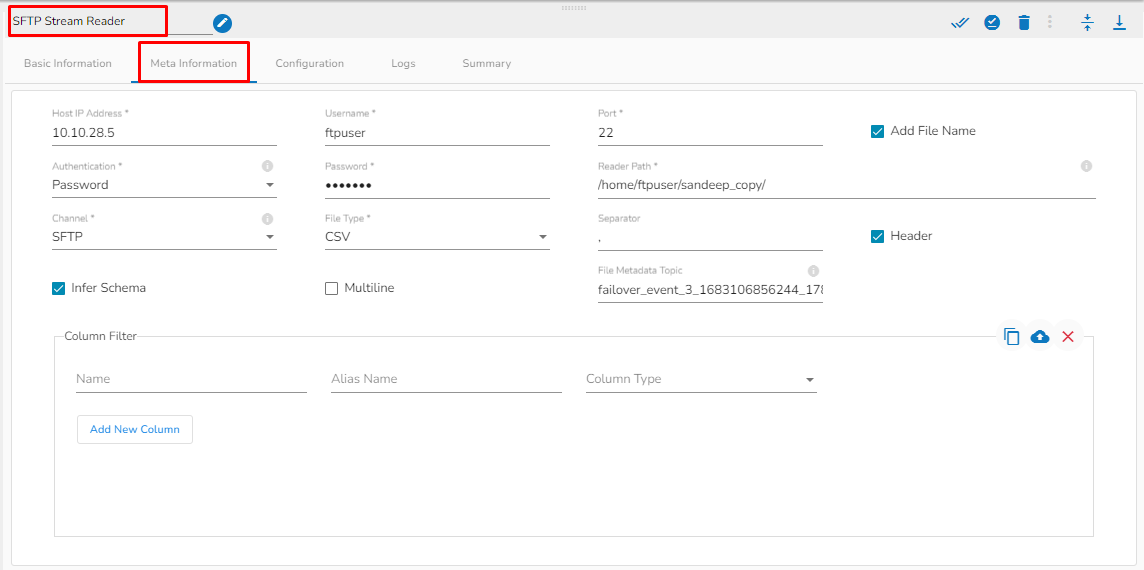

Meta Information

Please follow the demonstration to configure the component and its Meta Information.

Steps to configure the Meta Information of the SFTP Stream Reader component

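Host: Enter the host name or IP address of the SFTP server.

Username: Enter the username used to log in to the SFTP server.

Port: Provide the port number of the SFTP server (the standard SSH/SFTP port is 22).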

Add File Name: Enable this option to get the file name along with the data.

Authentication: Select an authentication option (Password or PEM/PPK File) from the drop-down list.

Password: Provide the password used to authenticate with the SFTP server.

PEM/PPK File: Choose the key file used to authenticate with the SFTP server. A file must be uploaded when this authentication option is selected.

Reader Path: Enter the path on the SFTP server from which the file is read.

Channel: Select a channel option from the drop-down menu (the supported channel is SFTP).
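The connection fields above depend on each other: the Password field only matters for password authentication, and a PEM/PPK file is required for key-based authentication. A minimal sketch of that rule follows. The class name, attribute names, and the `"password"`/`"key"` option values are hypothetical and are not the component's actual API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SftpReaderSettings:
    """Hypothetical container mirroring the connection fields described above."""
    host: str
    username: str
    reader_path: str
    port: int = 22                    # standard SSH/SFTP port
    authentication: str = "password"  # assumed option names: "password" or "key"
    password: Optional[str] = None
    key_file: Optional[str] = None    # path to an uploaded PEM/PPK file
    add_file_name: bool = False

    def validate(self) -> None:
        """Raise ValueError if the settings are incomplete or inconsistent."""
        if not self.host or not self.username:
            raise ValueError("host and username are required")
        if not 1 <= self.port <= 65535:
            raise ValueError("port must be between 1 and 65535")
        if self.authentication == "password":
            if not self.password:
                raise ValueError("password authentication needs a password")
        elif self.authentication == "key":
            if not self.key_file:
                raise ValueError("key authentication needs a PEM/PPK file")
        else:
            raise ValueError(f"unknown authentication option: {self.authentication}")


# Example: password authentication against a placeholder host.
settings = SftpReaderSettings(
    host="sftp.example.com",
    username="reader",
    reader_path="/incoming",
    password="secret",
)
settings.validate()  # passes silently when the settings are consistent
```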

File type: Select the file type from the drop-down:

CSV: When CSV is the selected file type, the Header and Infer Schema fields are displayed. Enable the Header option to read the header row of the file, and enable the Infer Schema option to detect the actual data type of each column in the CSV file.

Schema: If CSV is the selected file type, paste the Spark schema of the CSV file in this field.
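For readers unfamiliar with Spark schemas, the sketch below builds one in the JSON form that Spark uses to serialize a `StructType`. It assumes the Schema field accepts this JSON form, which this page does not confirm. The column names are made up for illustration, and the helper `spark_schema_json` is hypothetical.

```python
import json


def spark_schema_json(columns):
    """Build a Spark-style StructType JSON string from (name, type) pairs."""
    fields = [
        {"name": name, "type": dtype, "nullable": True, "metadata": {}}
        for name, dtype in columns
    ]
    return json.dumps({"type": "struct", "fields": fields}, indent=2)


# Illustrative columns only; replace them with the columns of your CSV file.
schema = spark_schema_json(
    [("id", "integer"), ("name", "string"), ("signup_date", "date")]
)
print(schema)
```

If PySpark is available, calling `df.schema.json()` on a DataFrame that already reads the file produces the same structure.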

JSON: When JSON is the selected file type, the Multiline and Charset fields are displayed. Select the Multiline option if the file contains content that spans multiple lines.
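The reason a multiline setting matters can be shown with plain Python, assuming the option behaves like the usual distinction between line-delimited JSON (one record per line) and a JSON document spread over several lines. The sample data and function names below are illustrative only.

```python
import json

# One complete record per line (line-delimited JSON).
json_lines = '{"id": 1, "name": "a"}\n{"id": 2, "name": "b"}\n'

# The same records as a single pretty-printed document spanning many lines.
multiline = '[\n  {\n    "id": 1,\n    "name": "a"\n  },\n  {\n    "id": 2,\n    "name": "b"\n  }\n]\n'


def parse_line_by_line(text):
    """Parse each non-empty line as an independent JSON record."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


def parse_whole_document(text):
    """Parse the entire text as one JSON document."""
    data = json.loads(text)
    return data if isinstance(data, list) else [data]


records_a = parse_line_by_line(json_lines)
records_b = parse_whole_document(multiline)

# Line-by-line parsing breaks on the multi-line document.
try:
    parse_line_by_line(multiline)
    line_mode_works = True
except json.JSONDecodeError:
    line_mode_works = False
```

Both inputs hold the same two records, but only whole-document parsing can read the second one, which is the situation the Multiline option is meant for.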

XML: Select this option to read an XML file. When it is selected, the following fields are displayed:

Infer schema: Enable this option to detect the actual data type of each column.

Path: Provide the path of the file.

Root Tag: Provide the root tag from the XML files.

Row Tags: Provide the row tags from the XML files.

Join Row Tags: Enable this option to join multiple row tags.
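To make the Root Tag and Row Tags fields concrete, here is a small sketch using Python's standard `xml.etree.ElementTree` module. It assumes the root tag is the outermost element and each row tag element becomes one record. The sample document, the tag names `catalog` and `book`, and the helper `read_rows` are hypothetical.

```python
import xml.etree.ElementTree as ET

xml_text = """<catalog>
  <book><id>1</id><title>First</title></book>
  <book><id>2</id><title>Second</title></book>
</catalog>"""


def read_rows(text, root_tag, row_tag):
    """Return one dict per row_tag element found under the expected root."""
    root = ET.fromstring(text)
    if root.tag != root_tag:
        raise ValueError(f"expected root tag {root_tag!r}, found {root.tag!r}")
    # Each child element of a row becomes a column: tag -> text.
    return [{child.tag: child.text for child in row} for row in root.iter(row_tag)]


rows = read_rows(xml_text, root_tag="catalog", row_tag="book")
```

For this sample, Root Tag would be `catalog` and Row Tags would be `book`.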

File Metadata Topic: Enter the name of the Kafka Event to which the metadata of the file being read is sent.

Column filter: Select the columns to read. To rename a column, enter the new name in the alias name field; otherwise, keep the alias name the same as the column name. Then select a Column Type from the drop-down menu.

Use Download Data and Upload File options to select the desired columns.

Upload File: Upload an existing file (CSV or JSON) from your system by using the Upload File icon.

Download Data (Schema): Users can download the schema structure in JSON format by using the Download Data icon.
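The effect of a column filter (select a column, optionally rename it through its alias, and assign a type) can be sketched in a few lines of Python. The dictionary layout, the type names, and the `apply_column_filter` helper are hypothetical illustrations; they are not the format of the downloaded schema file.

```python
# Each entry: source column name, alias (output name), and target type.
column_filter = [
    {"name": "id", "alias": "customer_id", "type": "int"},
    {"name": "name", "alias": "name", "type": "string"},  # alias kept as-is
]

# Assumed mapping from type names to Python conversions.
CASTS = {"int": int, "float": float, "string": str}


def apply_column_filter(row, column_filter):
    """Keep only the selected columns, rename them, and cast their values."""
    return {
        col["alias"]: CASTS[col["type"]](row[col["name"]])
        for col in column_filter
    }


row = {"id": "42", "name": "Ada", "city": "London"}
filtered = apply_column_filter(row, column_filter)
```

The unselected `city` column is dropped, `id` is emitted under its alias, and its value is converted from text to an integer.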