External models can be imported into the Data Science Lab and experimented with inside Notebooks using the Import Model functionality.

Please Note:

External models can be registered to the Data Pipeline module and used for inference through the Data Science Lab script runner.

Only the Native prediction functionality will work for the External models.

Check out the illustration on importing a model.

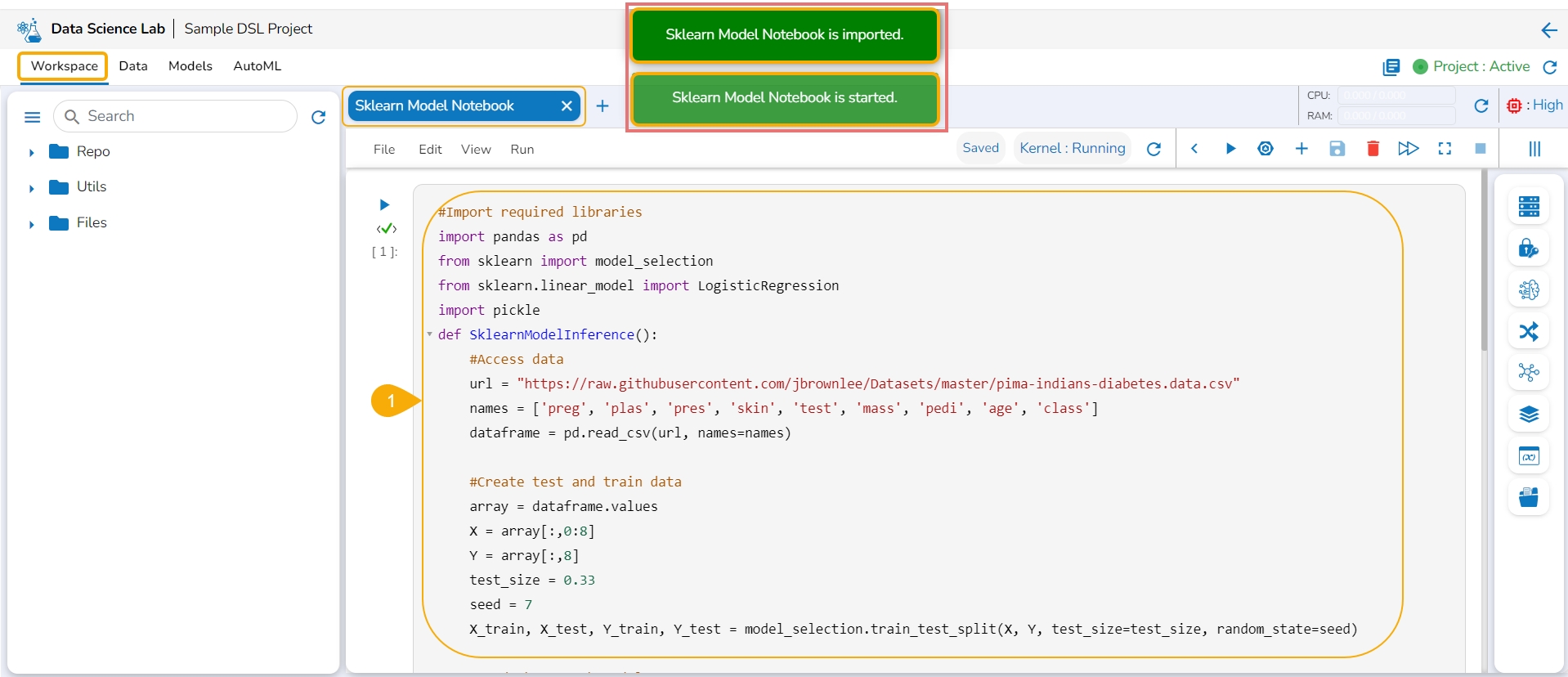

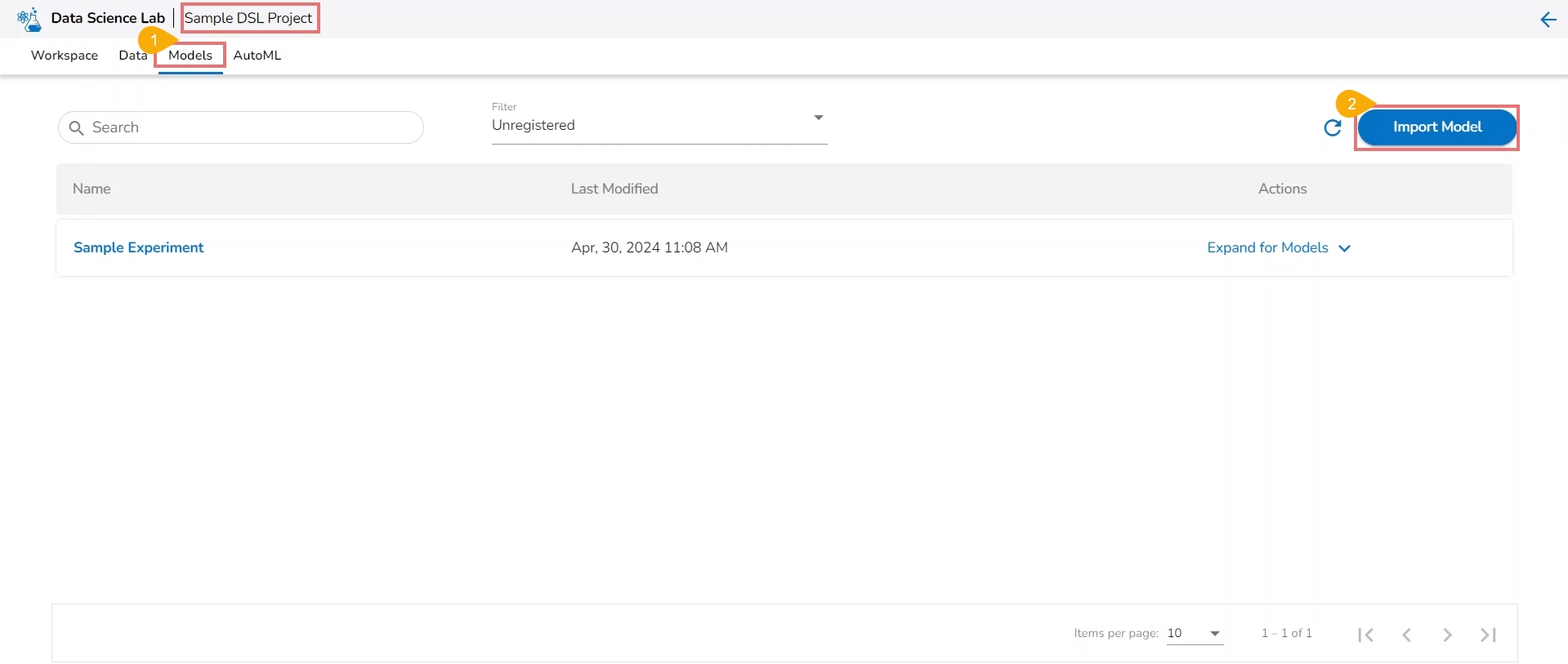

Navigate to the Model tab for a Data Science Project.

Click the Import Model option.

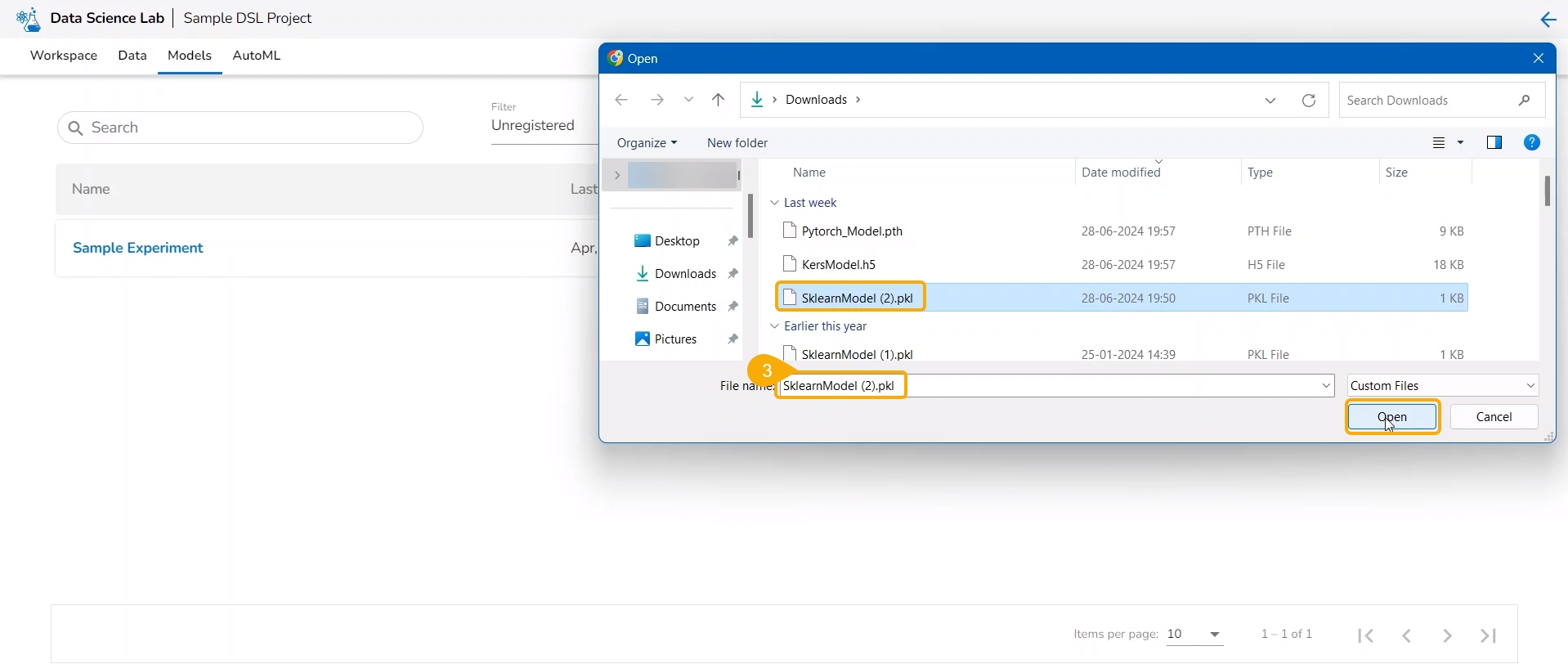

The user gets redirected to upload the model file. Select and upload the file.

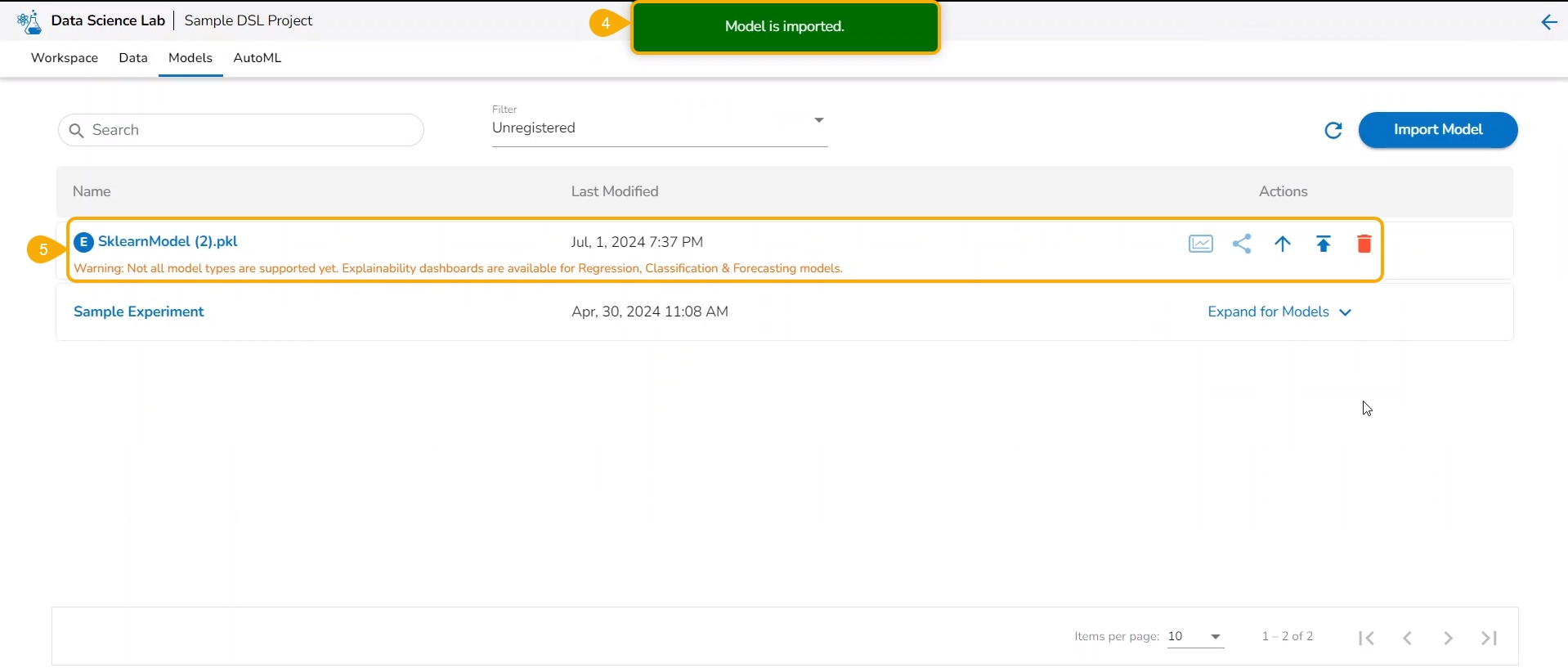

A notification message appears.

The imported model gets added to the model list.

Please Note: Imported models are referred to as External models in the model list and are marked with a prefix to their names (as displayed in the image above).

To use an imported model, the user needs to start a new .ipynb file with a wrapper function that takes the data and the imported model, calls the predict function, and returns an output dataset with the predictions.
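The shape of such a wrapper function can be sketched as follows. This is a minimal illustration, not the code the platform generates: `StandInModel`, `predict_with_imported_model`, and the column names are hypothetical, and the stand-in class only assumes that the imported model exposes a `predict` method. In a real Notebook the model object would come from the imported file rather than from this class.

```python
from typing import Any, Dict, List, Sequence


class StandInModel:
    """Hypothetical stand-in for an imported model; only predict() is assumed."""

    def predict(self, rows: Sequence[Sequence[float]]) -> List[int]:
        # Toy rule: label a row 1 when its feature values sum to more than zero.
        return [1 if sum(row) > 0 else 0 for row in rows]


def predict_with_imported_model(
    data: List[Dict[str, Any]],
    model: Any,
    feature_names: Sequence[str],
) -> List[Dict[str, Any]]:
    """Return a copy of `data` with a `prediction` field added to every record."""
    # Build the feature matrix in a fixed column order.
    features = [[float(record[name]) for name in feature_names] for record in data]
    # Call the model's own predict method.
    predictions = model.predict(features)
    # Output dataset: the input records plus their predictions.
    return [
        {**record, "prediction": prediction}
        for record, prediction in zip(data, predictions)
    ]


sample_data = [{"x1": 1.5, "x2": 0.5}, {"x1": -2.0, "x2": 0.5}]
output = predict_with_imported_model(sample_data, StandInModel(), ["x1", "x2"])
print(output)
```

Keeping the data, the model, and the prediction step inside one function gives the exported script a single entry point, and the input records are copied rather than modified in place.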

Check out the walk-through on the Export to Pipeline functionality for a model.

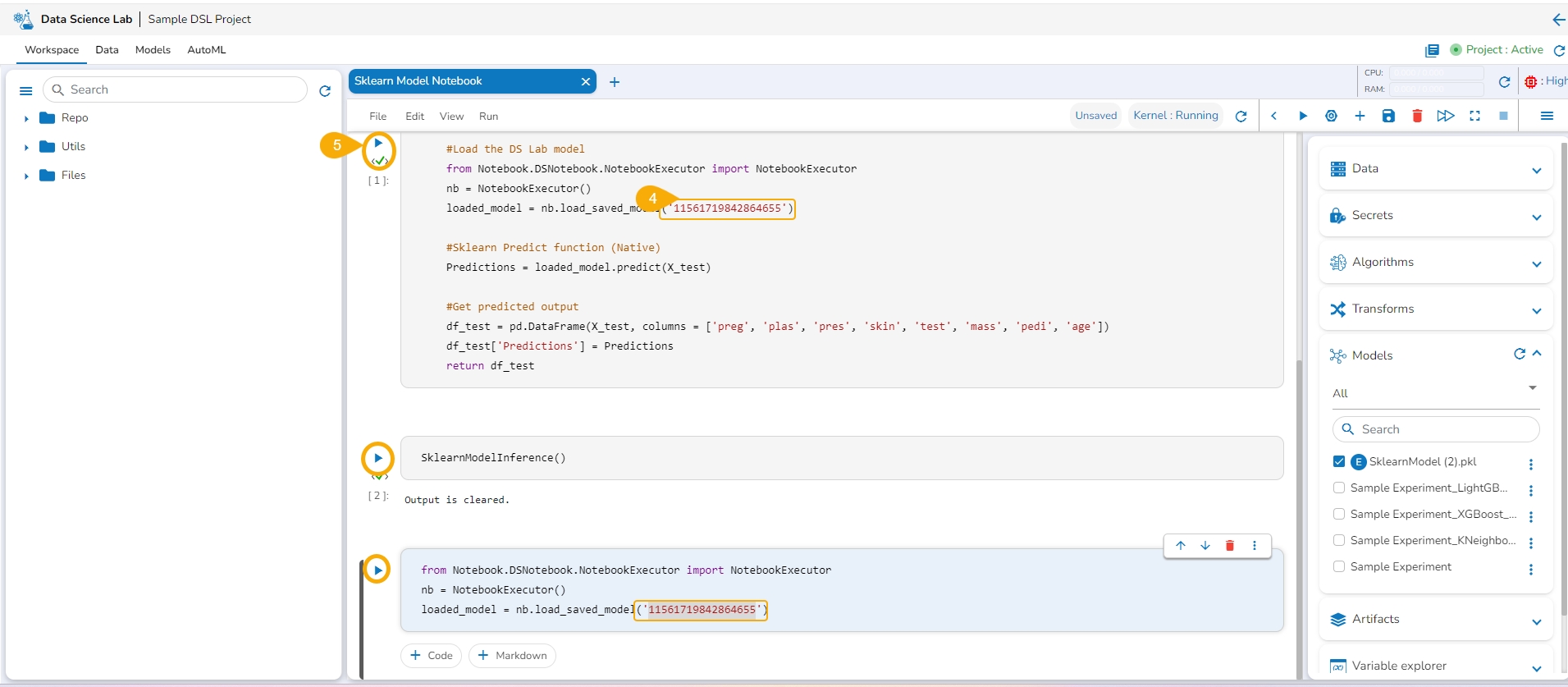

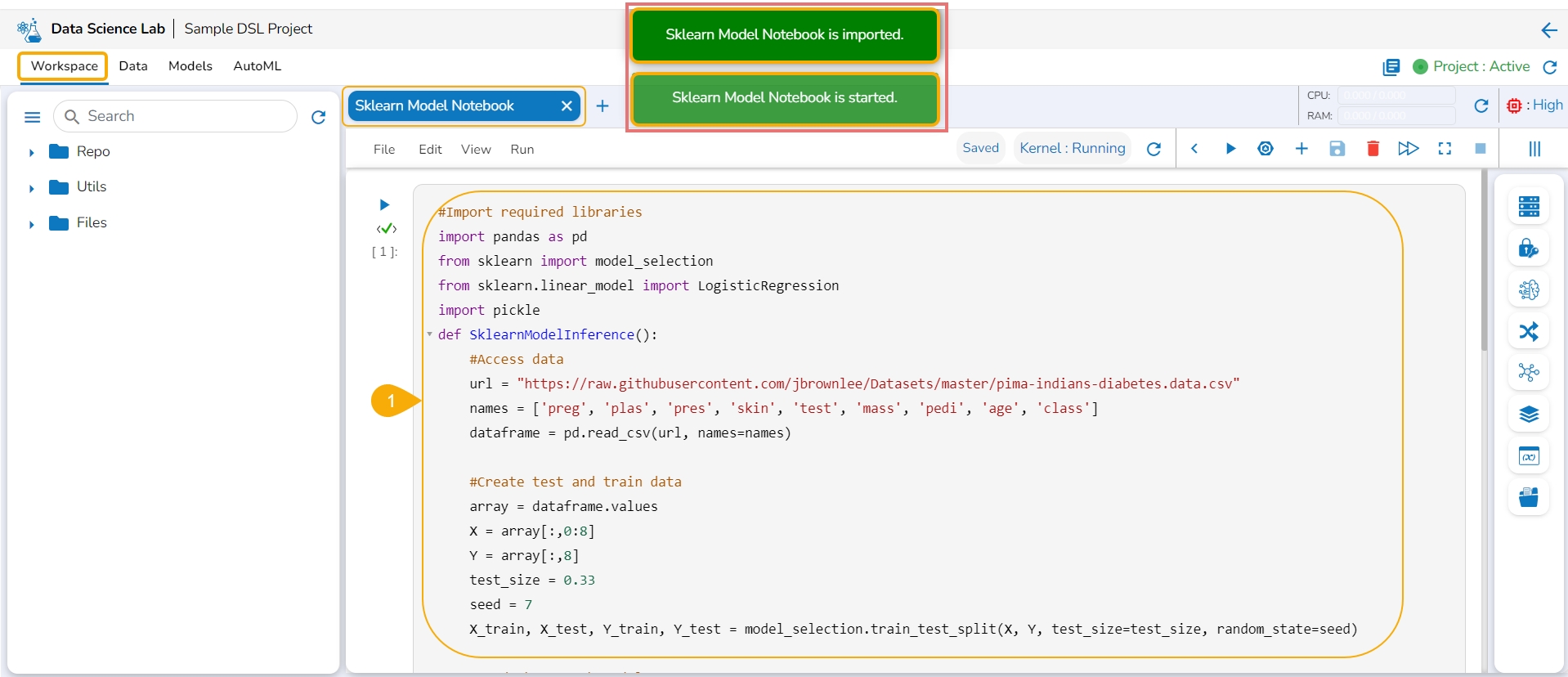

Navigate to a Data Science Notebook (.ipynb file) from an activated project. In this case, a pipeline has been imported with the wrapper function.

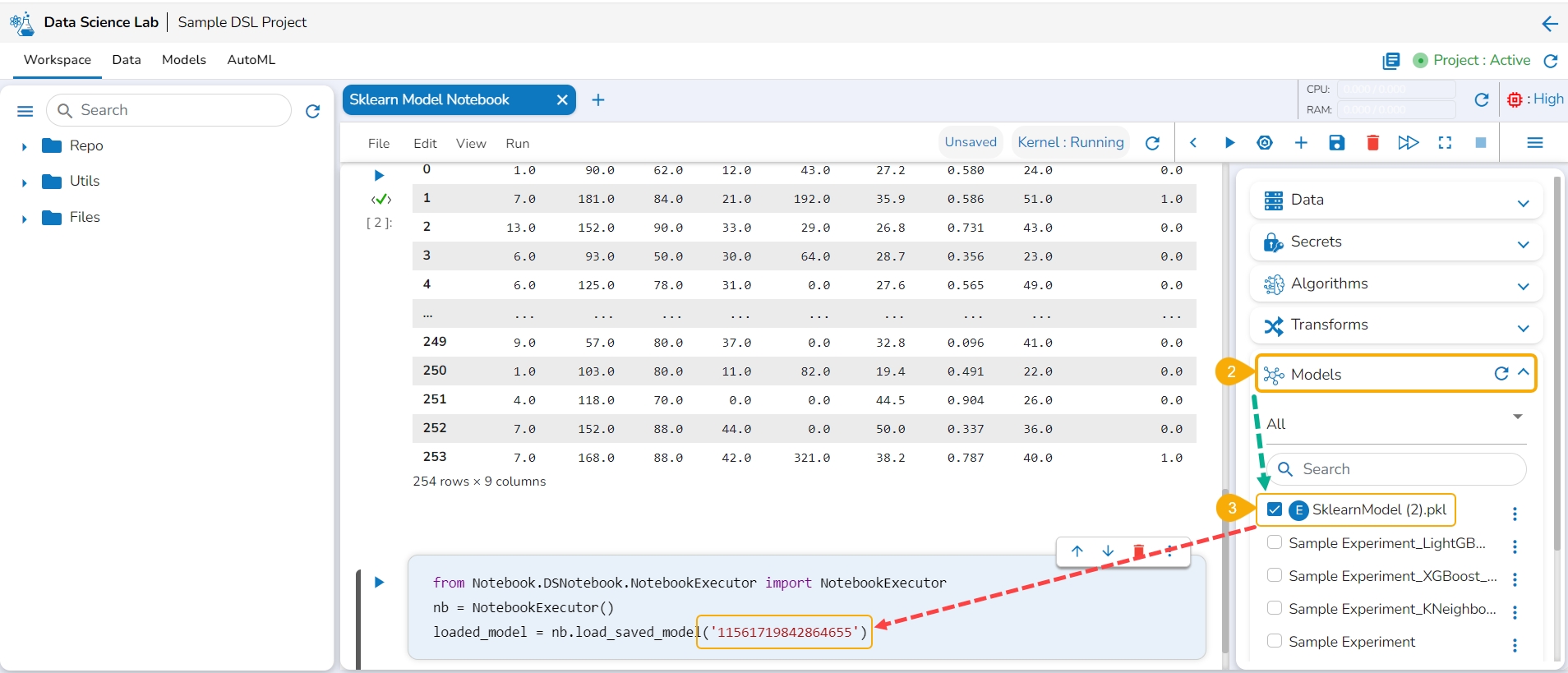

Access the Imported Model inside this .ipynb file.

Load the imported model into a Notebook cell.

Reference the loaded model in the inference script.

Run the code cell with the inference script.

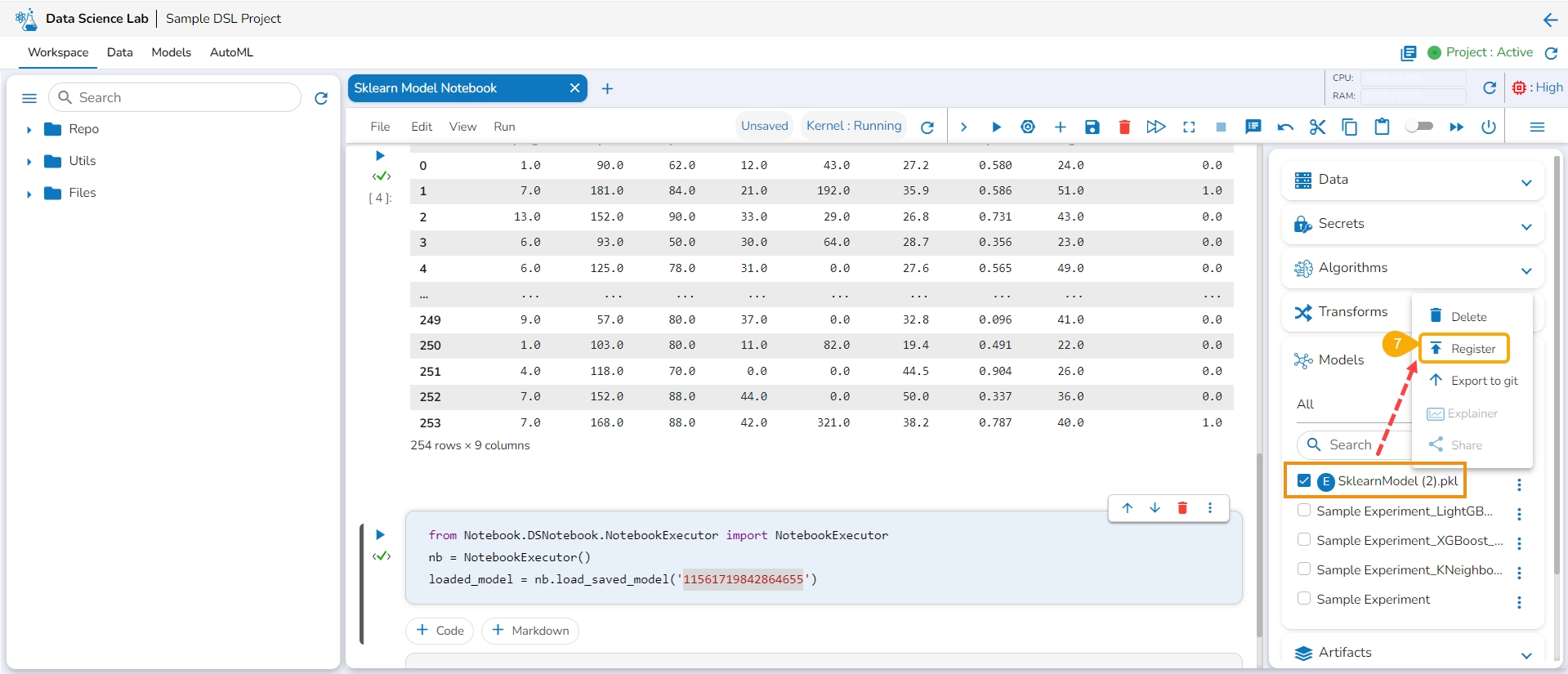

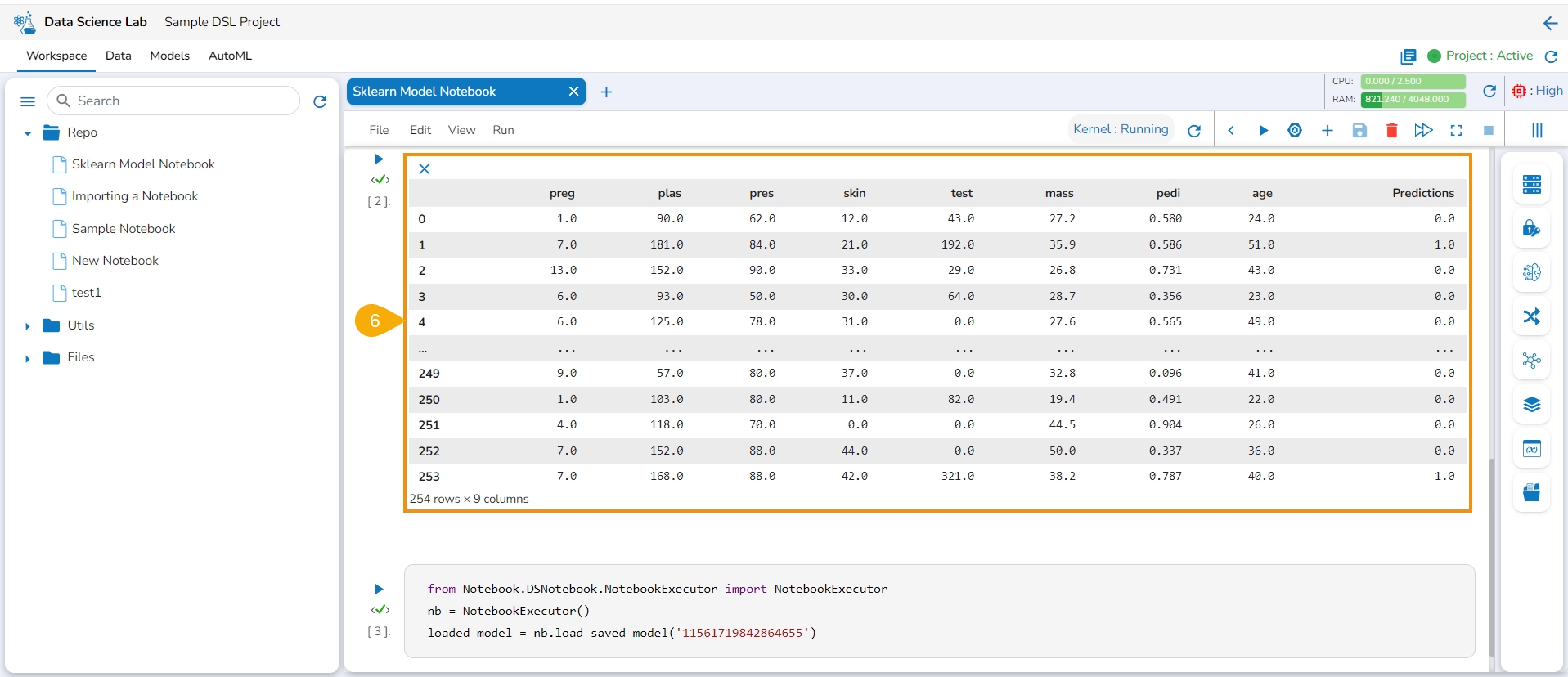

The Data preview is displayed below.

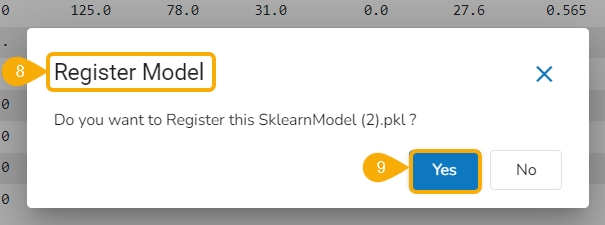

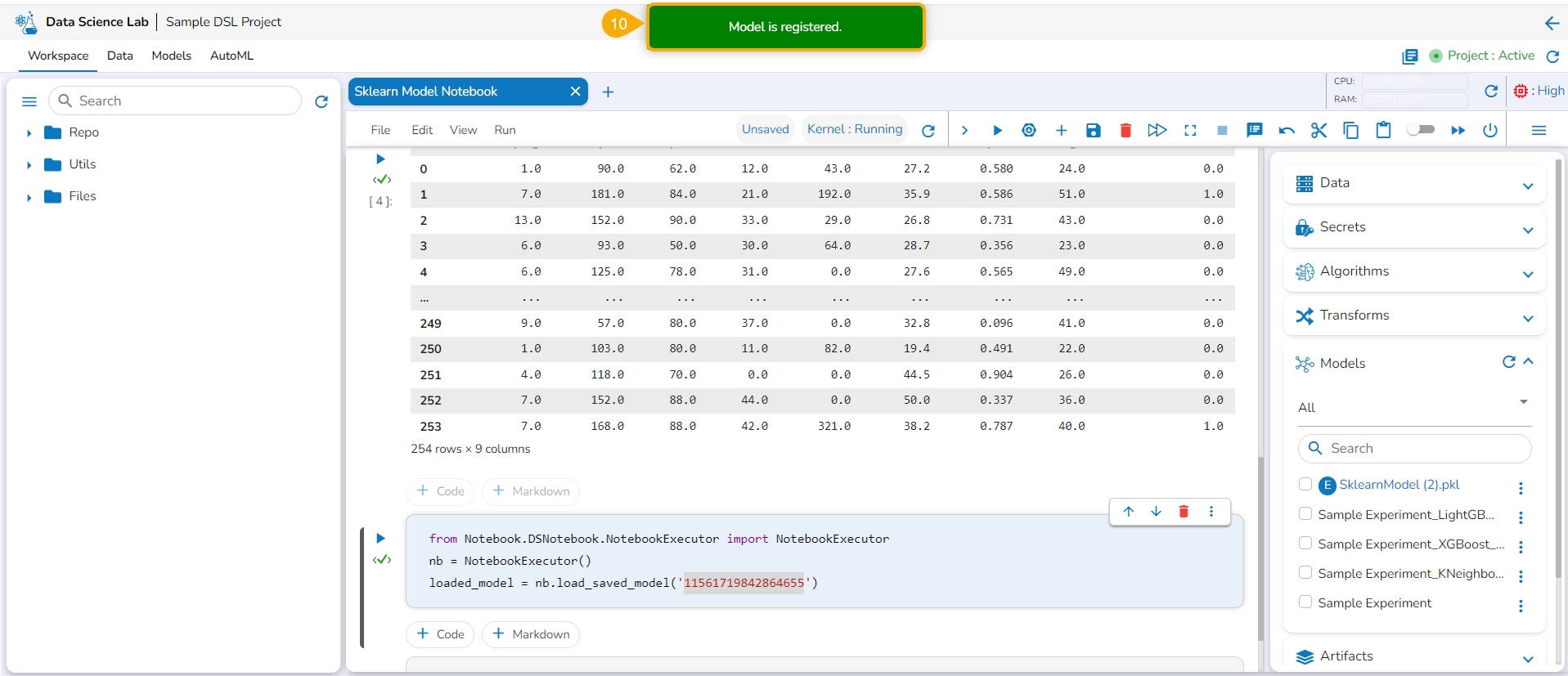

Click the Register option for the imported model from the ellipsis context menu.

The Register Model dialog box appears to confirm the model registration.

Click the Yes option.

A notification message appears, and the model gets registered.

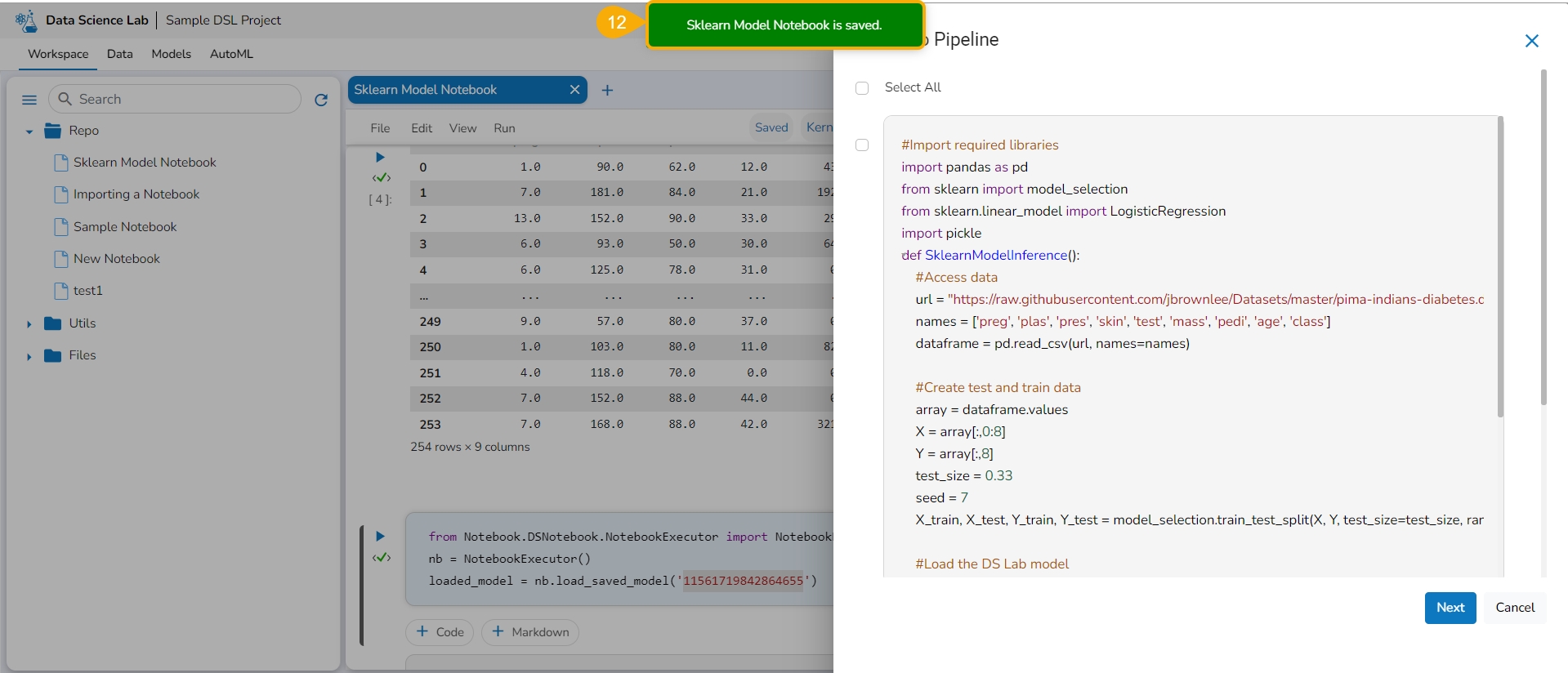

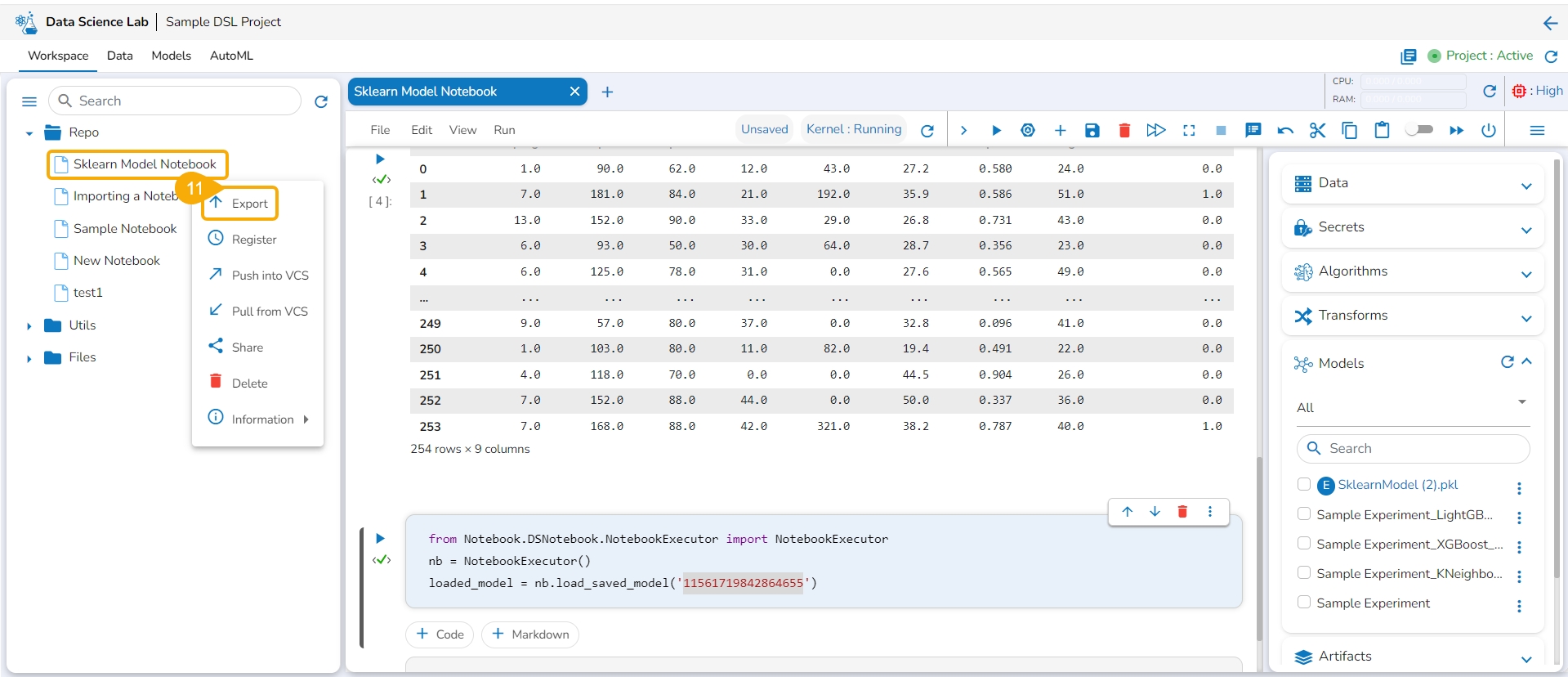

Export the script using the Export functionality provided for the Data Science Notebook (.ipynb file).

Another notification appears to confirm that the Notebook is saved.

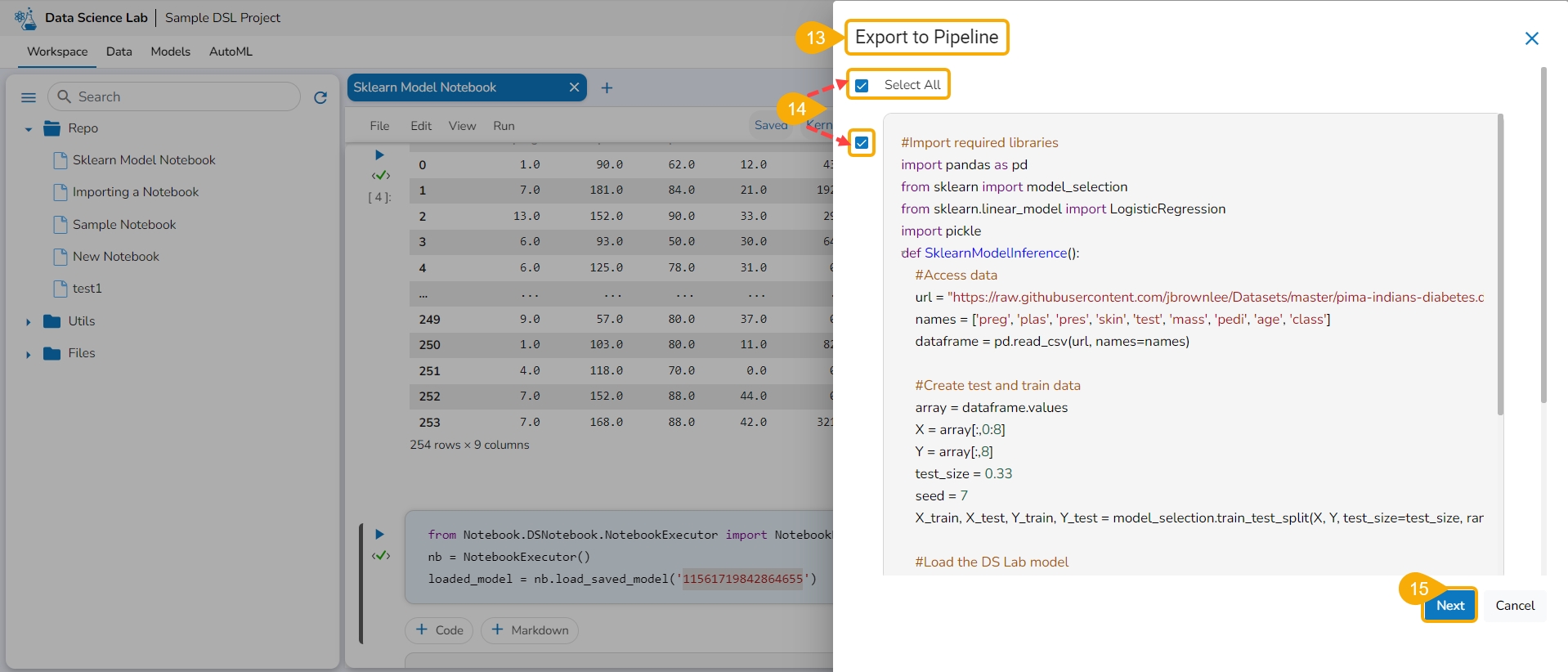

The Export to Pipeline window appears.

Select a specific script from the Notebook, or choose the Select All option to select the full script.

Select the Next option.

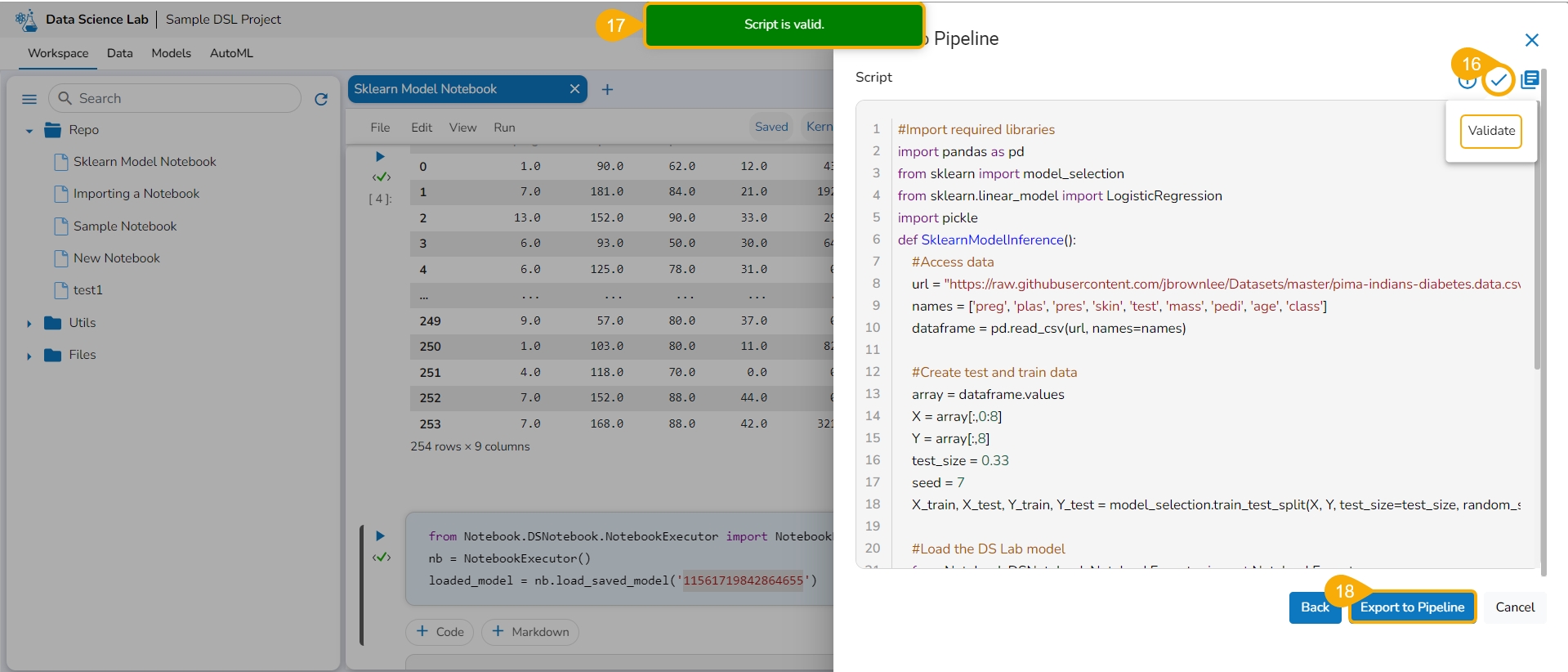

Click the Validate icon to validate the script.

A notification message appears confirming that the script is valid.

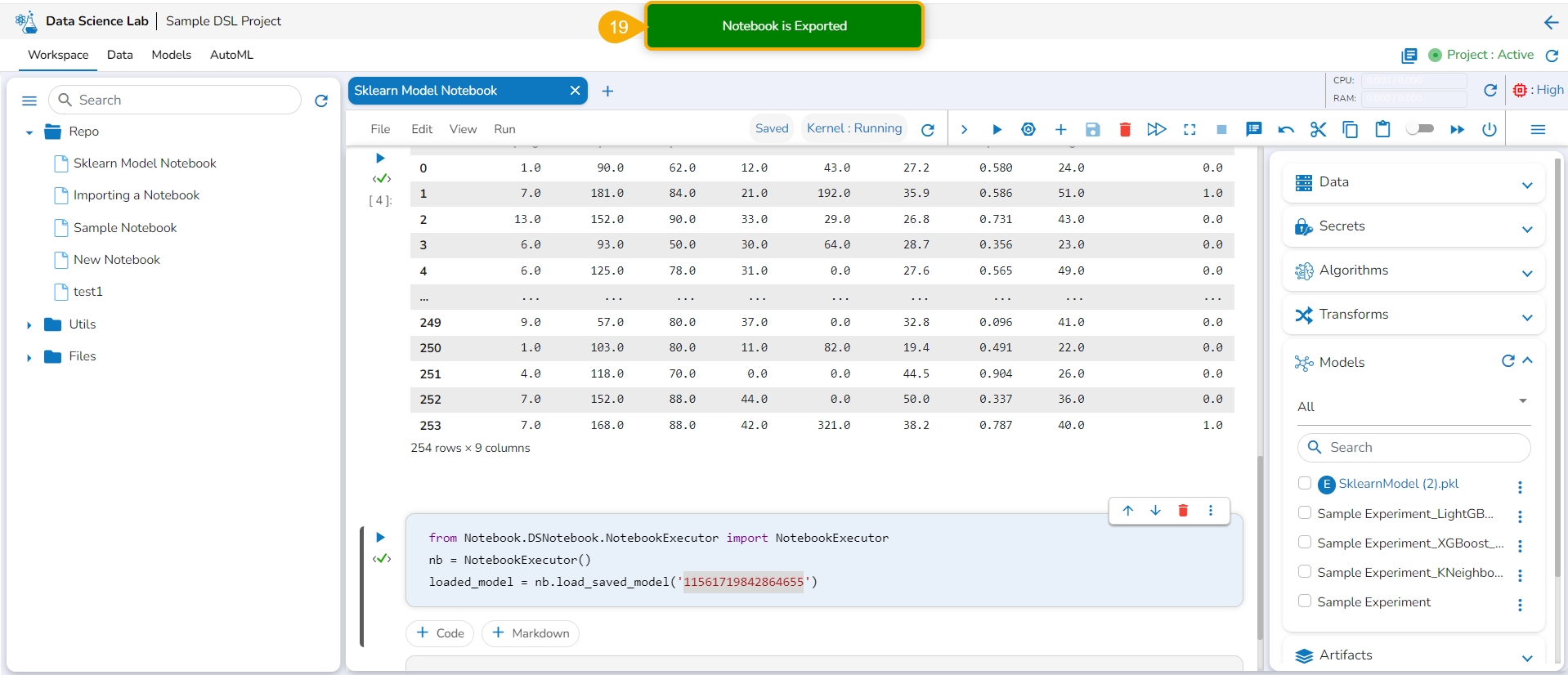

Click the Export to Pipeline option.

A notification message appears confirming that the selected Notebook has been exported.

Please Note: The imported model gets registered to the Data Pipeline module as a script.

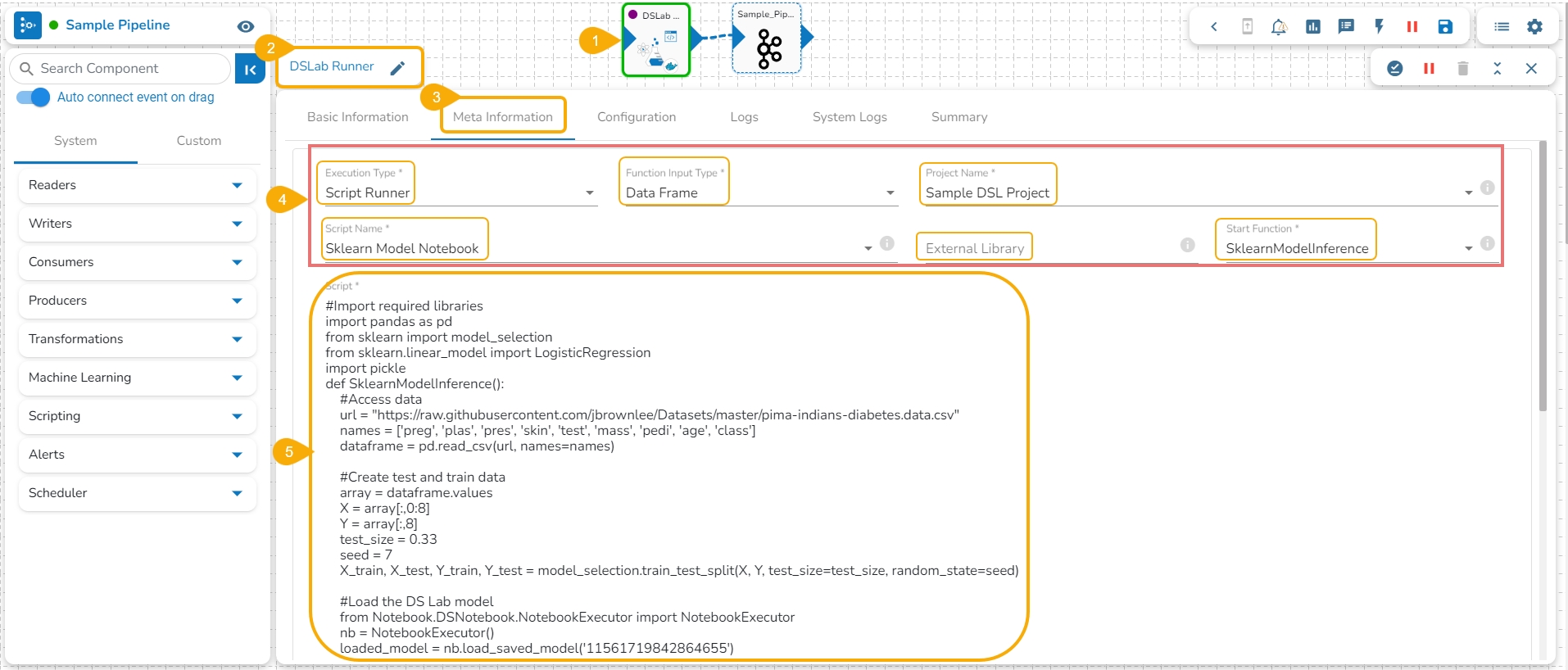

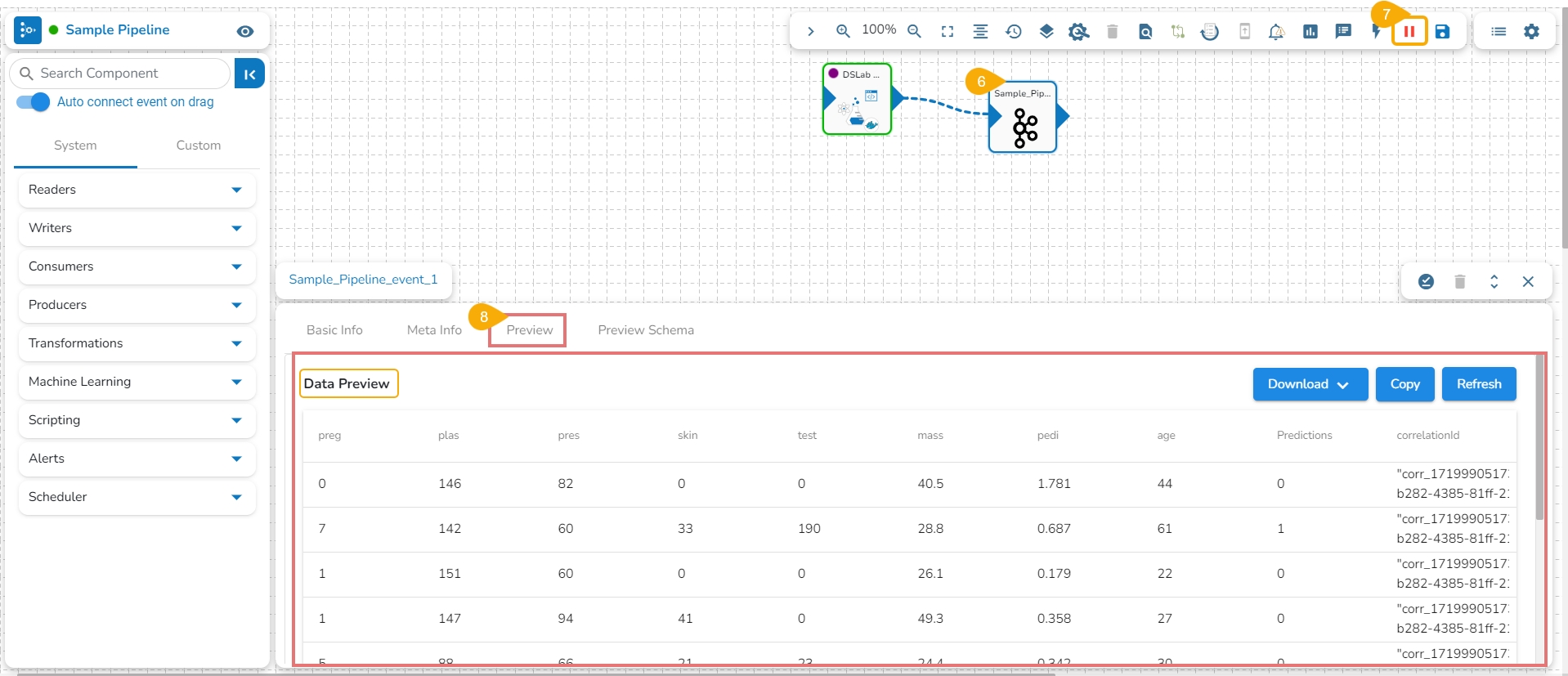

Navigate to the Data Pipeline Workflow editor.

Drag the DS Lab Runner component and configure the Basic Information.

Open the Meta Information tab of the DS Lab Runner component.

Configure the following information for the Meta Information tab.

Select Script Runner as the Execution Type.

Select the function input type.

Select the project name.

Select the Script Name from the drop-down option. The same name given to the imported model appears as the script name.

Provide details for the External Library (if applicable).

Select the Start Function from the drop-down menu.

The exported model can be accessed inside the Script section.
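The Meta Information settings above can be kept as a short checklist before configuring the component. The sketch below is a plain Python dictionary for planning purposes, not a platform API. The project, script, and start function names are hypothetical placeholders, and the function input type is left empty because its options depend on what the component offers.

```python
from typing import Dict, List

# Field names follow the Meta Information settings described above.
# Every value except the execution type is a hypothetical placeholder.
meta_information: Dict[str, str] = {
    "Execution Type": "Script Runner",
    "Function Input Type": "",           # pick one of the options shown in the component
    "Project Name": "my_dsl_project",    # hypothetical project name
    "Script Name": "my_imported_model",  # hypothetical; same name as the imported model
    "External Library": "",              # optional, fill in only if applicable
    "Start Function": "predict_with_imported_model",  # hypothetical function name
}

OPTIONAL_FIELDS = {"External Library"}


def missing_fields(settings: Dict[str, str]) -> List[str]:
    """List the required settings that are still empty."""
    return [
        name
        for name, value in settings.items()
        if name not in OPTIONAL_FIELDS and not value.strip()
    ]


print(missing_fields(meta_information))  # ['Function Input Type']
```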

The user can connect the DS Lab Runner component to an Input Event.

Run the Pipeline.

The model predictions can be viewed in the Preview tab of the connected Input Event.

Please Note:

The Imported Models can be accessed through the Script Runner component inside the Data Pipeline module.

The execution type should be Model Runner inside the Data Pipeline while accessing the other exported Data Science models.

The supported file extensions for External models are .pkl, .h5, .pth, and .pt.
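A model file can be checked against these extensions before it is uploaded. The helper below is a small local sketch, not part of the platform: `is_supported_model_file` is a hypothetical name, and treating the extension as case-insensitive is an assumption of this example.

```python
from pathlib import Path

# Extensions listed as supported for External models.
SUPPORTED_EXTENSIONS = {".pkl", ".h5", ".pth", ".pt"}


def is_supported_model_file(filename: str) -> bool:
    """Return True when the file name ends with a supported extension."""
    # Lower-casing the suffix is an assumption of this sketch.
    return Path(filename).suffix.lower() in SUPPORTED_EXTENSIONS


for name in ["classifier.pkl", "network.h5", "weights.pt", "model.onnx"]:
    print(name, is_supported_model_file(name))
```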