Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

All the available Writer Task components for a Job are explained in this section.

Writers are a group of components that can write data to different DB and cloud storages.

There are Eight(8) Writers tasks in Jobs. All the Writers tasks is having the following tabs:

Meta Information: Configure the meta information same as doing in pipeline components.

Preview Data: Only ten random data can be previewed in this tab only when the task is running in Development mode.

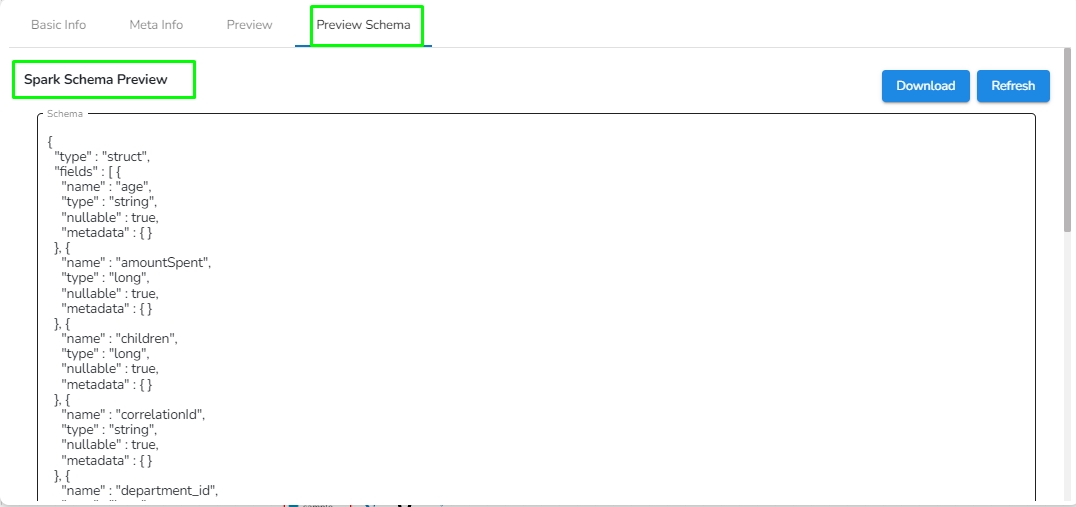

Preview schema: Spark schema of the data will be shown in this tab.

Logs: Logs of the tasks will display here.

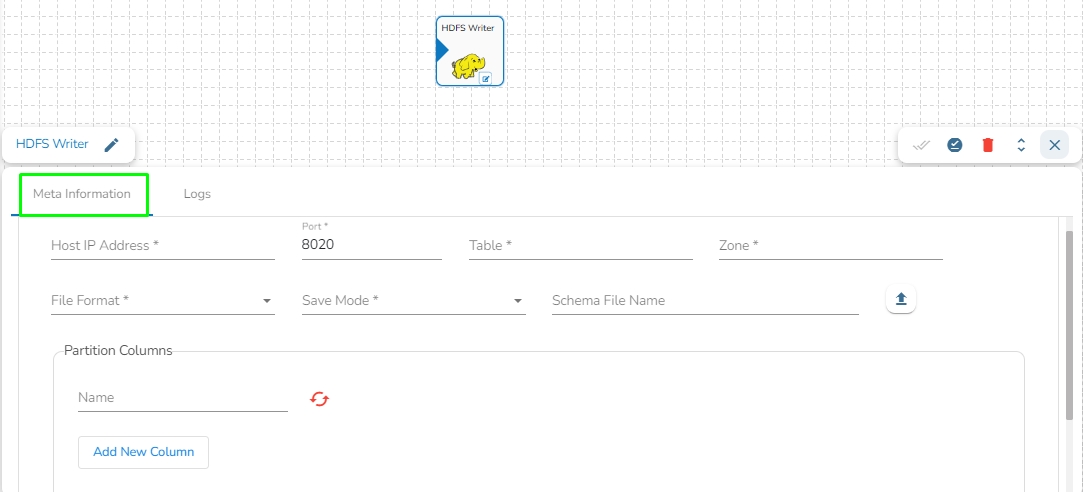

HDFS stands for Hadoop Distributed File System. It is a distributed file system designed to store and manage large data sets in a reliable, fault-tolerant, and scalable way. HDFS is a core component of the Apache Hadoop ecosystem and is used by many big data applications.

This task writes the data in HDFS(Hadoop Distributed File System).

Drag the HDFS writer task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Host IP Address: Enter the host IP address for HDFS.

Port: Enter the Port.

Table: Enter the table name where the data has to be written.

Zone: Enter the Zone for HDFS in which the data has to be written. Zone is a special directory whose contents will be transparently encrypted upon write and transparently decrypted upon read.

File Format: Select the file format in which the data has to be written:

CSV

JSON

PARQUET

AVRO

Save Mode: Select the save mode.

Schema file name: Upload spark schema file in JSON format.

Partition Columns: Provide a unique Key column name to partition data in Spark.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged writer task.

Loading...

Loading...

Loading...

Loading...

Loading...

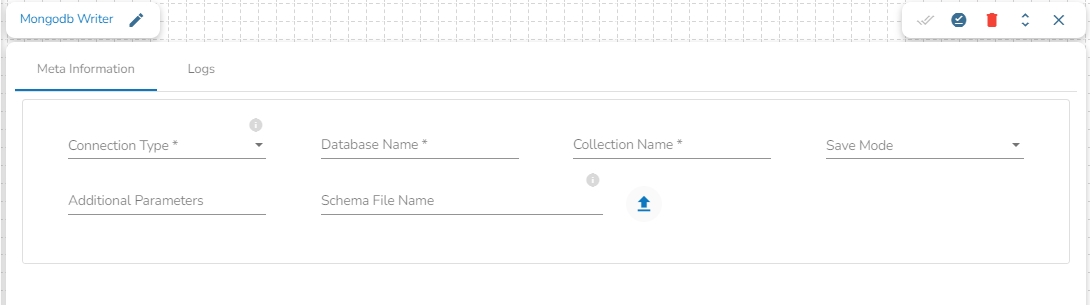

This task writes the data to MongoDB collection.

Drag the MongoDB writer task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Connection Type: Select the connection type from the drop-down:

Standard

SRV

Connection String

Port (*): Provide the Port number (It appears only with the Standard connection type).

Host IP Address (*): The IP address of the host.

Username (*): Provide a username.

Password (*): Provide a valid password to access the MongoDB.

Database Name (*): Provide the name of the database where you wish to write data.

Additional Parameters: Provide details of the additional parameters.

Schema File Name: Upload Spark Schema file in JSON format.

Save Mode: Select the Save mode from the drop down.

Append: This operation adds the data to the collection.

Ignore: "Ignore" is an operation that skips the insertion of a record if a duplicate record already exists in the database. This means that the new record will not be added, and the database will remain unchanged. "Ignore" is useful when you want to prevent duplicate entries in a database.

Upsert: It is a combination of "update" and "insert". It is an operation that updates a record if it already exists in the database or inserts a new record if it does not exist. This means that "upsert" updates an existing record with new data or creates a new record if the record does not exist in the database.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged writer task.

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

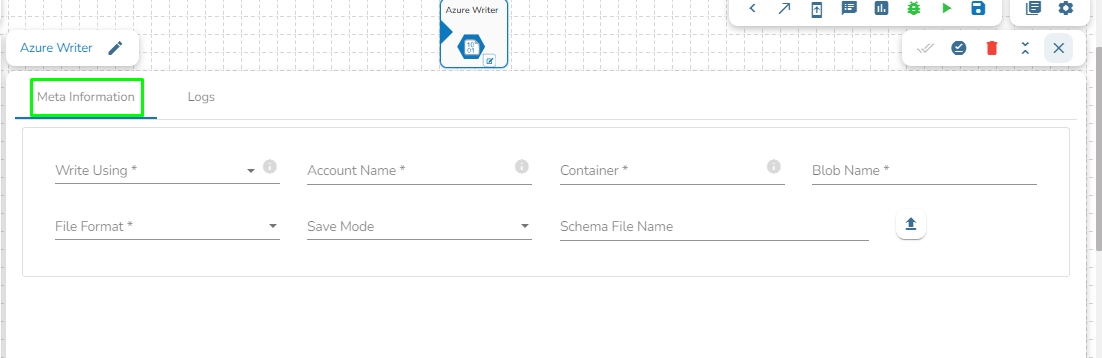

Azure is a cloud computing platform and service. It provides a range of cloud services, including infrastructure as a service (IaaS), platform as a service (PaaS), and software as a service (SaaS) offerings, as well as tools for building, deploying, and managing applications in the cloud.

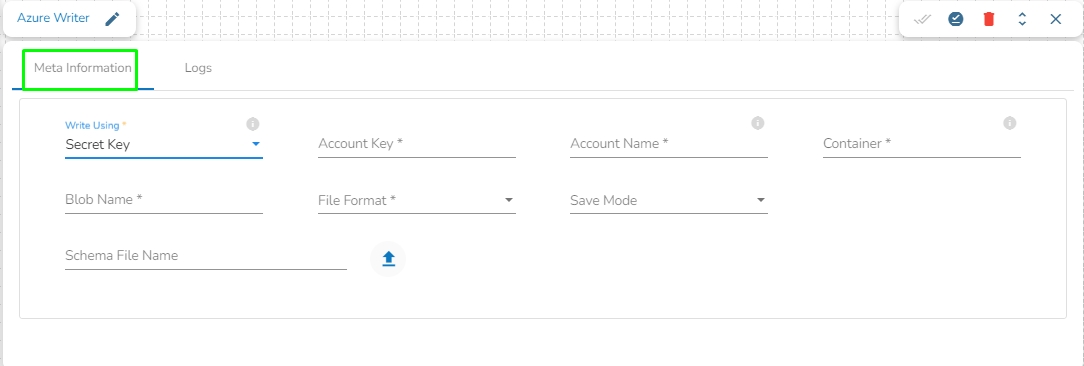

Azure Writer task is used to write the data in the Azure Blob Container.

Drag the Azure writer task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Write using: There are three(3) options available under this tab:

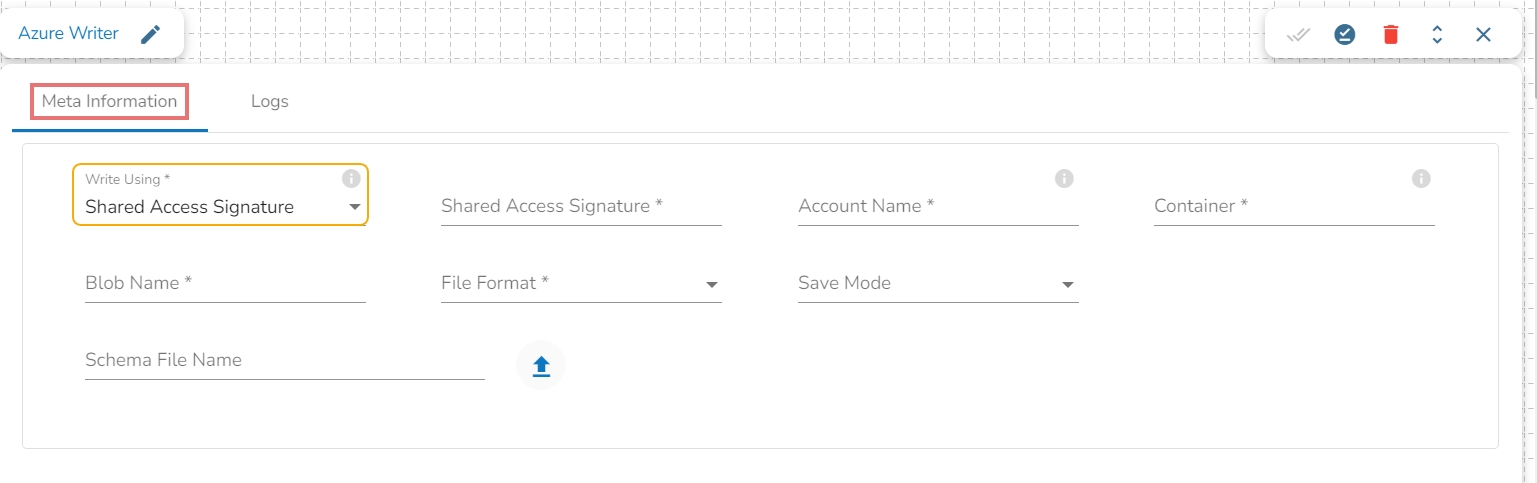

Shared Access Signature:

Secret Key

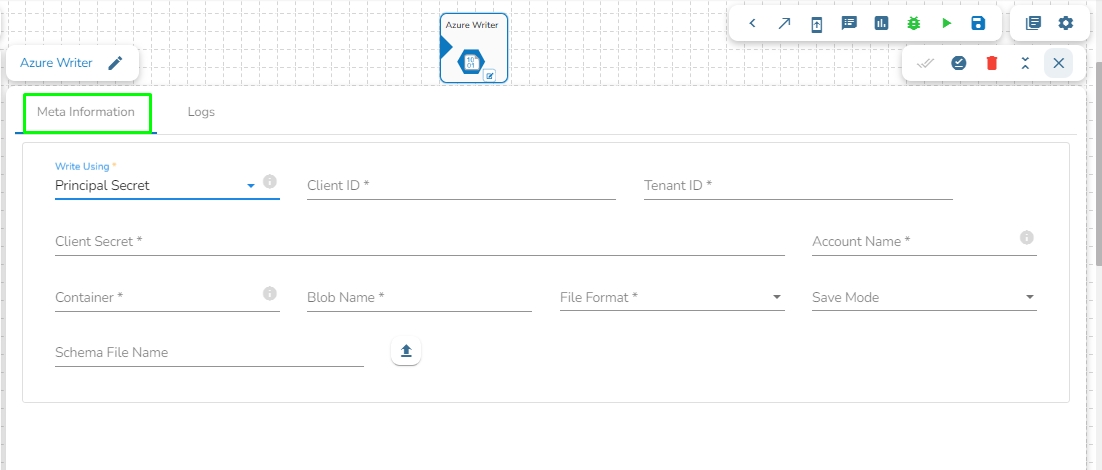

Principal Secret

Provide the following details:

Shared Access Signature: This is a URI that grants restricted access rights to Azure Storage resources.

Account Name: Provide the Azure account name.

Container: Provide the container name from where the blob is located. A container is a logical unit of storage in Azure Blob Storage that can hold blobs. It is similar to a directory or folder in a file system, and it can be used to organize and manage blobs.

Blob Name: Enter the Blob name. A blob is a type of object storage that is used to store unstructured data, such as text or binary data, like images or videos.

File Format: There are four(4) types of file extensions are available under it, select the file format in which the data has to be written:

CSV

JSON

PARQUET

AVRO

Save Mode: Select the Save mode from the drop down.

Append

Overwrite

Schema File Name: Upload spark schema file in JSON format.

Account Key: Enter the azure account key. In Azure, an account key is a security credential that is used to authenticate access to storage resources, such as blobs, files, queues, or tables, in an Azure storage account.

Account Name: Provide the Azure account name.

Container: Provide the container name from where the blob is located. A container is a logical unit of storage in Azure Blob Storage that can hold blobs. It is similar to a directory or folder in a file system, and it can be used to organize and manage blobs.

Blob Name: Enter the Blob name. A blob is a type of object storage that is used to store unstructured data, such as text or binary data, like images or videos.

File type: There are four(4) types of file extensions are available under it:

CSV

JSON

PARQUET

AVRO

Schema File Name: Upload spark schema file in JSON format.

Save Mode: Select the Save mode from the drop down.

Append

Overwrite

Provide the following details:

Client ID: Provide Azure Client ID. The client ID is the unique Application (client) ID assigned to your app by Azure AD when the app was registered.

Tenant ID: Provide the Azure Tenant ID. Tenant ID (also known as Directory ID) is a unique identifier that is assigned to an Azure AD tenant, which represents an organization or a developer account. It is used to identify the organization or developer account that the application is associated with.

Client Secret: Enter the Azure Client Secret. Client Secret (also known as Application Secret or App Secret) is a secure password or key that is used to authenticate an application to Azure AD.

Account Name: Provide the Azure account name.

Container: Provide the container name from where the blob is located. A container is a logical unit of storage in Azure Blob Storage that can hold blobs. It is similar to a directory or folder in a file system, and it can be used to organize and manage blobs.

Blob Name: Enter the Blob name. A blob is a type of object storage that is used to store unstructured data, such as text or binary data, like images or videos.

File type: There are four(4) types of file extensions are available under it:

CSV

JSON

PARQUET

AVRO

Save Mode: Select the Save mode from the drop down.

Append

Overwrite

Schema File Name: Upload spark schema file in JSON format.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged writer task.

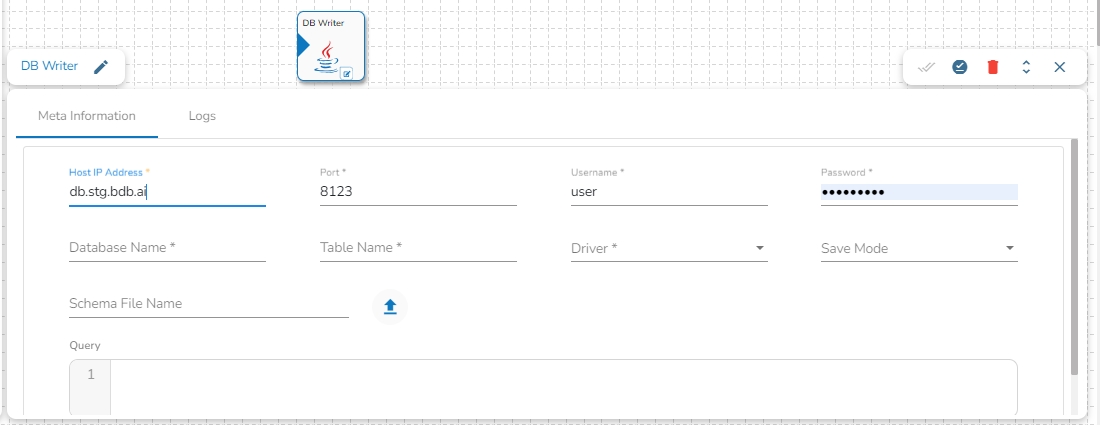

This task is used to write data in the following databases: MYSQL, MSSQL, Oracle, ClickHouse, Snowflake, PostgreSQL, Redshift.

Drag the DB writer task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Host IP Address: Enter the Host IP Address for the selected driver.

Port: Enter the port for the given IP Address.

Database name: Enter the Database name.

Table name: Provide a single or multiple table names. If multiple table name has be given, then enter the table names separated by comma(,).

User name: Enter the user name for the provided database.

Password: Enter the password for the provided database.

Driver: Select the driver from the drop down. There are 6 drivers supported here: MYSQL, MSSQL, Oracle, ClickHouse, Snowflake, PostgreSQL, Redshift.

Schema File Name: Upload spark schema file in JSON format.

Save Mode: Select the Save mode from the drop down.

Append

Overwrite

Query: Write the create table(DDL) query.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged writer task.

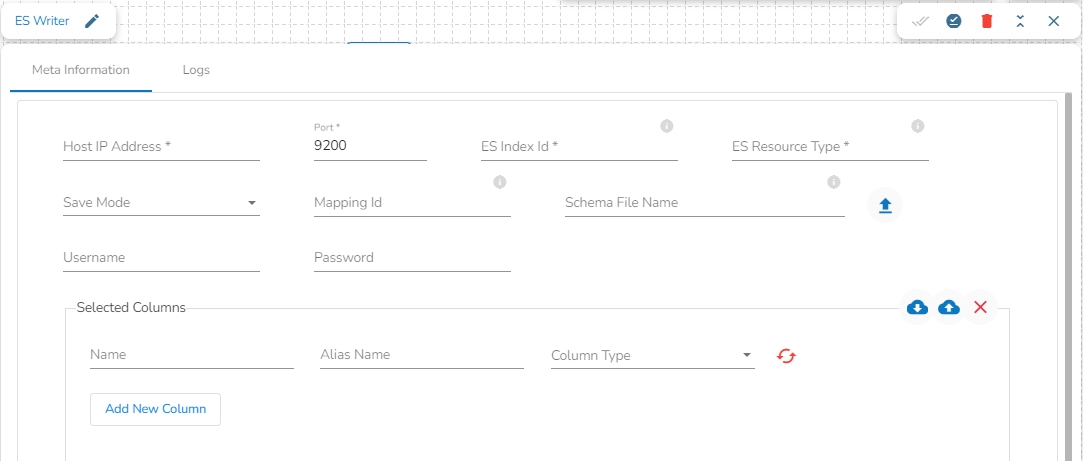

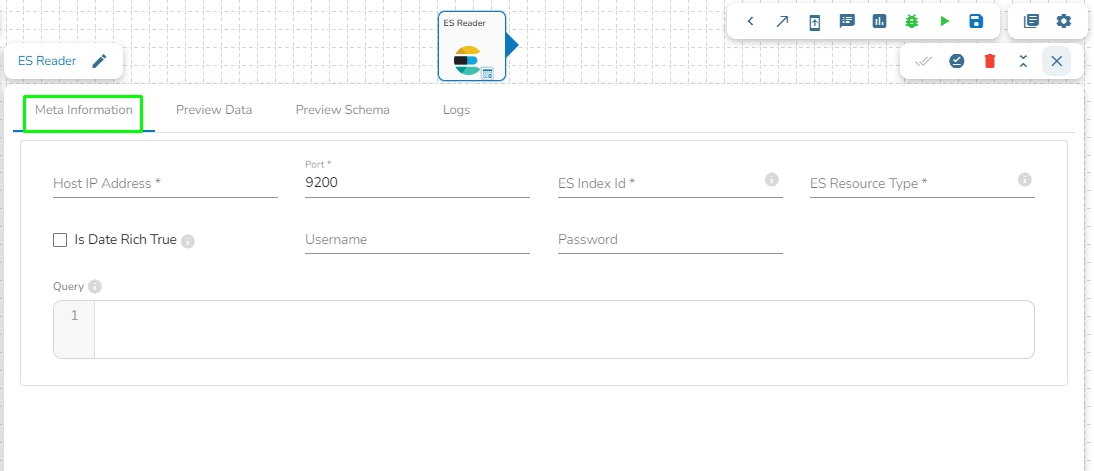

Elasticsearch is an open-source search and analytics engine built on top of the Apache Lucene library. It is designed to help users store, search, and analyze large volumes of data in real-time. Elasticsearch is a distributed, scalable system that can be used to index and search structured, semi-structured, and unstructured data.

This task is used to write the data in Elastic Search engine.

Drag the ES writer task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Host IP Address: Enter the host IP Address for Elastic Search.

Port: Enter the port to connect with Elastic Search.

Index ID: Enter the Index ID to read a document in elastic search. In Elasticsearch, an index is a collection of documents that share similar characteristics, and each document within an index has a unique identifier known as the index ID. The index ID is a unique string that is automatically generated by Elasticsearch and is used to identify and retrieve a specific document from the index.

Mapping ID: Provide the Mapping ID. In Elasticsearch, a mapping ID is a unique identifier for a mapping definition that defines the schema of the documents in an index. It is used to differentiate between different types of data within an index and to control how Elasticsearch indexes and searches data.

Resource Type: Provide the resource type. In Elasticsearch, a resource type is a way to group related documents together within an index. Resource types are defined at the time of index creation, and they provide a way to logically separate different types of documents that may be stored within the same index.

Username: Enter the username for elastic search.

Password: Enter the password for elastic search.

Schema File Name: Upload spark schema file in JSON format.

Save Mode: Select the Save mode from the drop down.

Append

Selected columns: The user can select the specific column, provide some alias name and select the desired data type of that column.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged writer task.

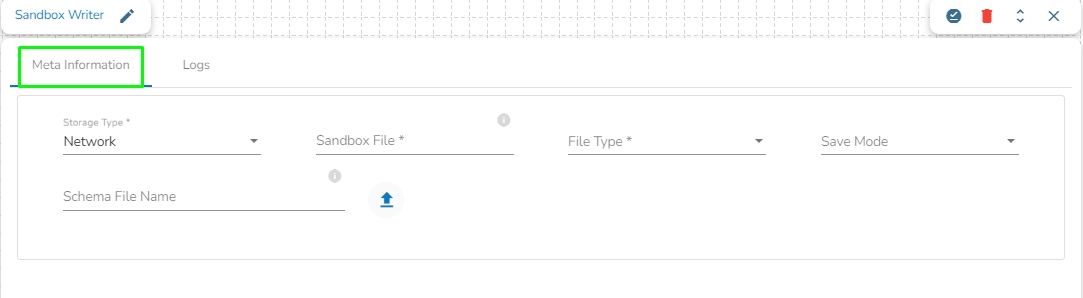

This task writes data to network pool of Sandbox.

Drag the Sandbox writer task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Storage Type: This field is pre-defined.

Sandbox File: Enter the file name.

File Type: Select the file type in which the data has to be written. There are 4 files types supported here:

CSV

JSON

Save Mode: Select the Save mode from the drop down.

Append

Overwrite

Schema File Name: Upload spark schema file in JSON format.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged writer task.

All the available Reader Task components are included in this section.

Readers are a group of tasks that can read data from different DB and cloud storages. In Jobs, all the tasks run in real-time.

There are eight(8) Readers tasks in Jobs. All the readers tasks contains the following tabs:

Meta Information: Configure the meta information same as doing in pipeline components.

Preview Data: Only ten(10) random data can be previewed in this tab only when the task is running in Development mode.

Preview schema: Spark schema of the reading data will be shown in this tab.

Logs: Logs of the tasks will display here.

This section provides details about the various categories of the task components which can be used in the Spark Job.

There are three categories of task components available:

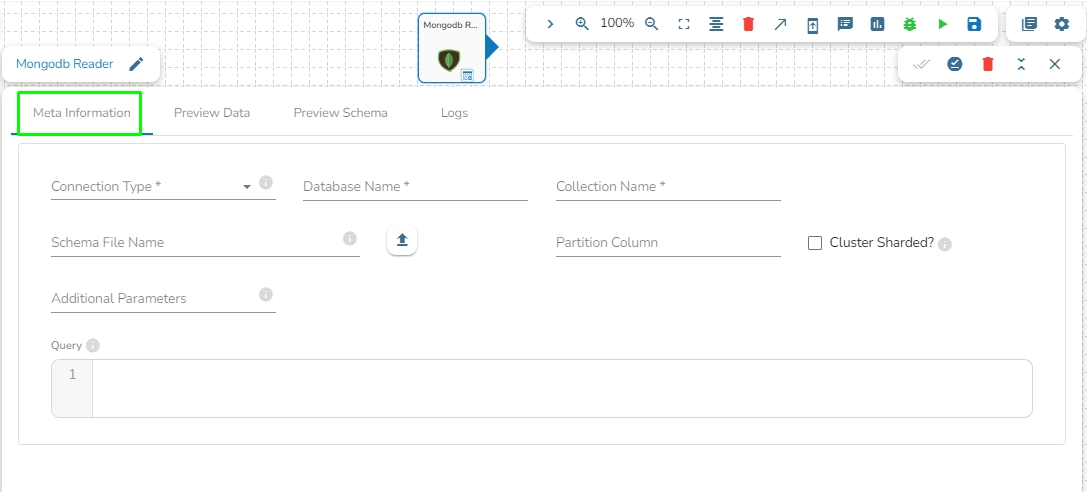

This task is used to read data from MongoDB collection.

Drag the MongoDB reader task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Connection Type: Select the connection type from the drop-down:

Standard

SRV

Connection String

Port (*): Provide the Port number (It appears only with the Standard connection type).

Host IP Address (*): The IP address of the host.

Username (*): Provide a username.

Password (*): Provide a valid password to access the MongoDB.

Database Name (*): Provide the name of the database where you wish to write data.

Additional Parameters: Provide details of the additional parameters.

Cluster Shared: Enable this option to horizontally partition data across multiple servers.

Schema File Name: Upload Spark Schema file in JSON format.

Query: Please provide Mongo Aggregation query in this field.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged reader task.

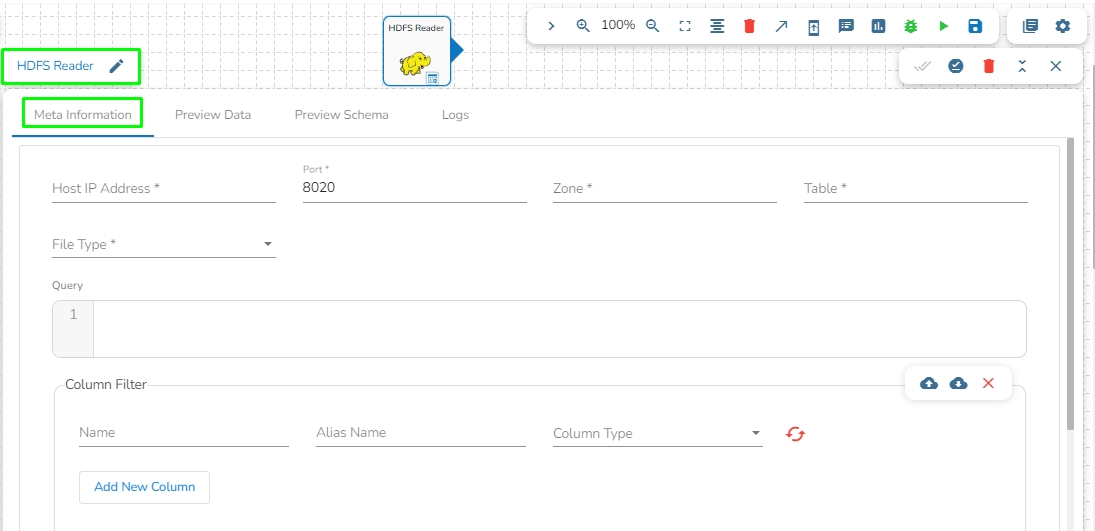

HDFS stands for Hadoop Distributed File System. It is a distributed file system designed to store and manage large data sets in a reliable, fault-tolerant, and scalable way. HDFS is a core component of the Apache Hadoop ecosystem and is used by many big data applications.

This task reads the file located in HDFS (Hadoop Distributed File System).

Drag the HDFS reader task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Host IP Address: Enter the host IP address for HDFS.

Port: Enter the Port.

Zone: Enter the Zone for HDFS. Zone is a special directory whose contents will be transparently encrypted upon write and transparently decrypted upon read.

File Type: Select the File Type from the drop down. The supported file types are:

CSV: The Header and Infer Schema fields get displayed with CSV as the selected File Type. Enable Header option to get the Header of the reading file and enable Infer Schema option to get true schema of the column in the CSV file.

JSON: The Multiline and Charset fields get displayed with JSON as the selected File Type. Check-in the Multiline option if there is any multiline string in the file.

PARQUET: No extra field gets displayed with PARQUET as the selected File Type.

AVRO: This File Type provides two drop-down menus.

Compression: Select an option out of the Deflate and Snappy options.

Compression Level: This field appears for the Deflate compression option. It provides 0 to 9 levels via a drop-down menu.

XML: Select this option to read XML file. If this option is selected, the following fields will get displayed:

Infer schema: Enable this option to get true schema of the column.

Path: Provide the path of the file.

Root Tag: Provide the root tag from the XML files.

Row Tags: Provide the row tags from the XML files.

Join Row Tags: Enable this option to join multiple row tags.

Path: Provide the path of the file.

Partition Columns: Provide a unique Key column name to partition data in Spark.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged reader task.

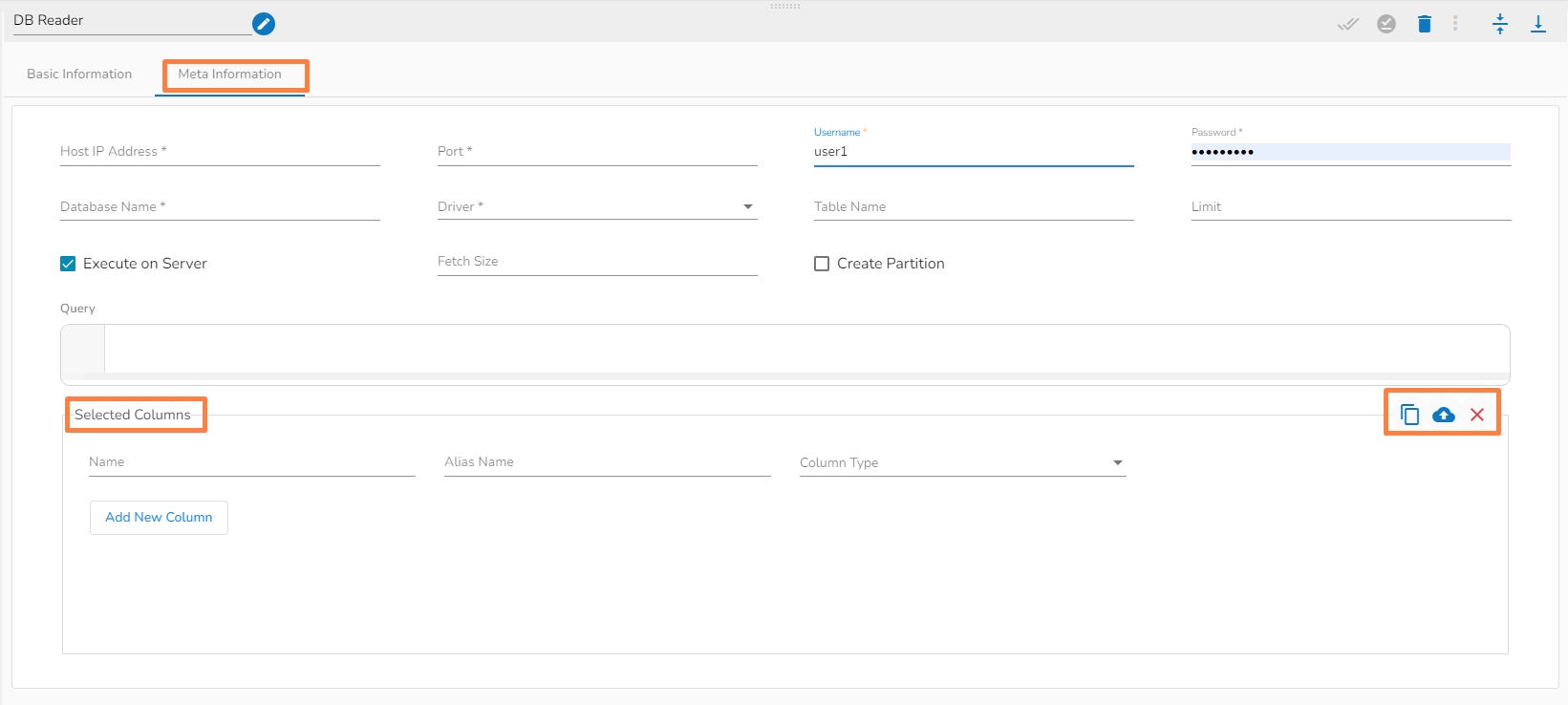

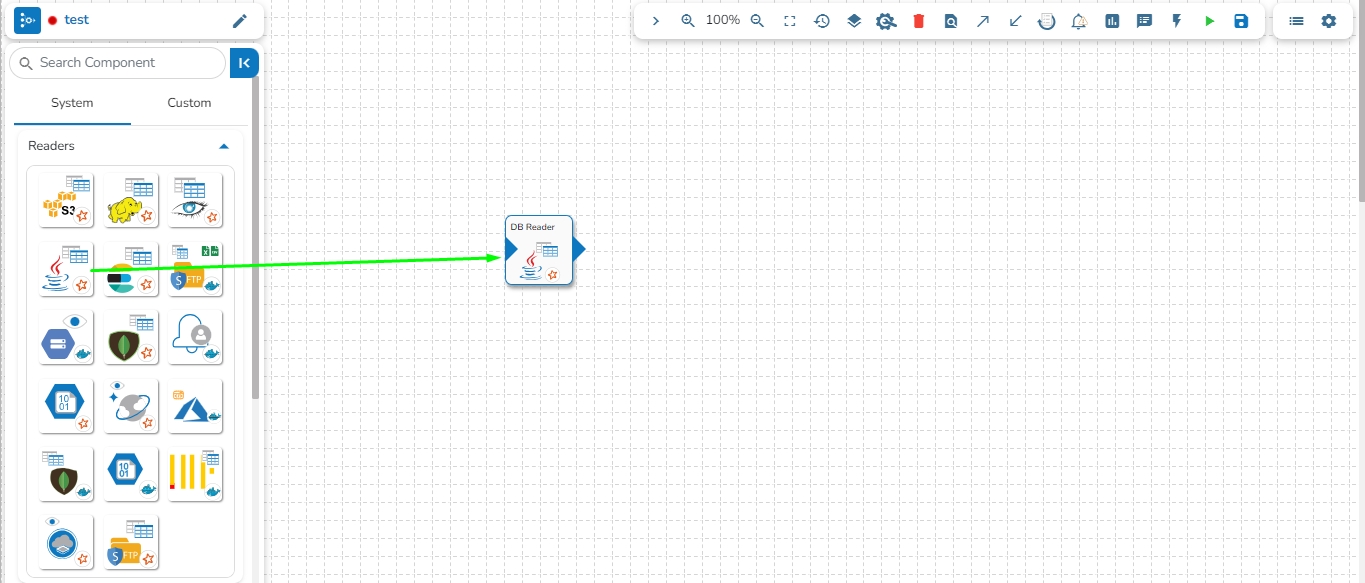

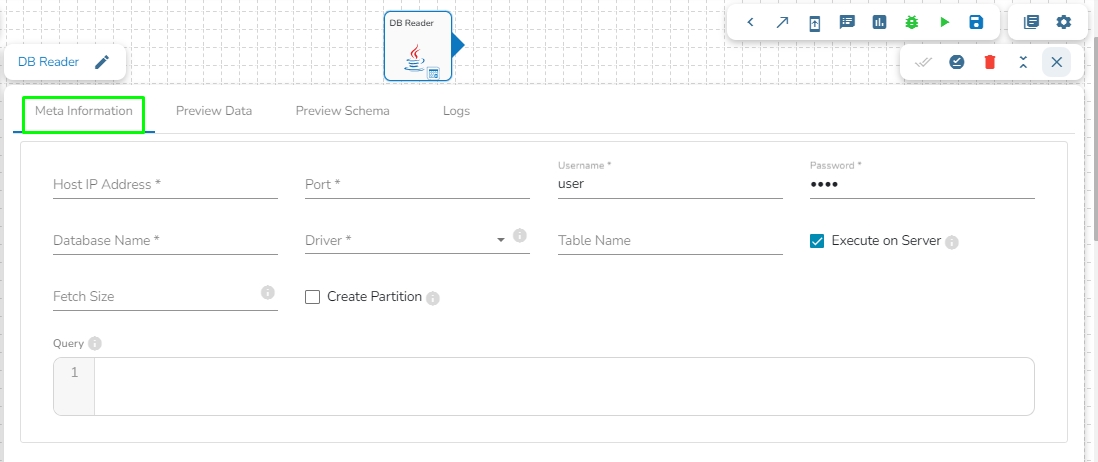

This task is used to read the data from the following databases: MYSQL, MSSQL, Oracle, ClickHouse, Snowflake, PostgreSQL, Redshift.

Drag the DB reader task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Host IP Address: Enter the Host IP Address for the selected driver.

Port: Enter the port for the given IP Address.

Database name: Enter the Database name.

Table name: Provide a single or multiple table names. If multiple table name has be given, then enter the table names separated by comma(,).

User name: Enter the user name for the provided database.

Password: Enter the password for the provided database.

Driver: Select the driver from the drop down. There are 7 drivers supported here: MYSQL, MSSQL, Oracle, ClickHouse, Snowflake, PostgreSQL, Redshift.

Fetch Size: Provide the maximum number of records to be processed in one execution cycle.

Create Partition: This is used for performance enhancement. It's going to create the sequence of indexing. Once this option is selected, the operation will not execute on server.

Partition By: This option will appear once create partition option is enabled. There are two options under it:

Auto Increment: The number of partitions will be incremented automatically.

Index: The number of partitions will be incremented based on the specified Partition column.

Query: Enter the spark SQL query in this field for the given table or table(s). Please refer the below image for making query on multiple tables.

Please Note:

The ClickHouse driver in the Spark components will use HTTP Port and not the TCP port.

In the case of data from multiple tables (join queries), one can write the join query directly without specifying multiple tables, as only one among table and query fields is required.

Please click the Save Task In Storage icon to save the configuration for the dragged reader task.

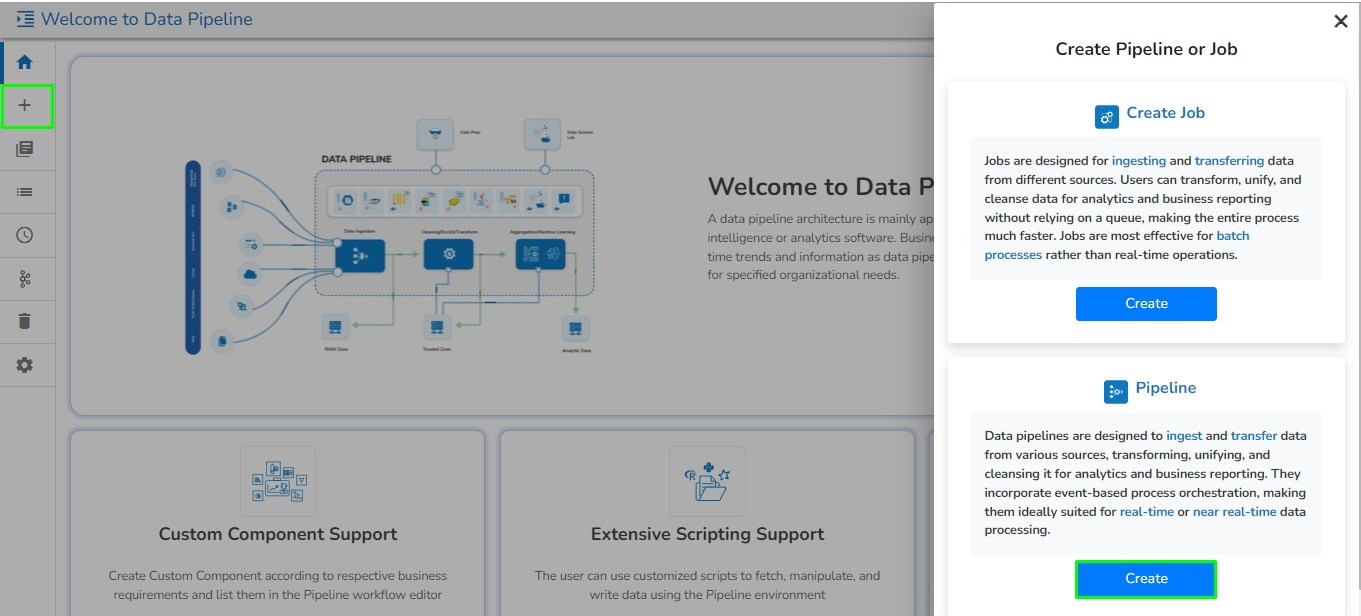

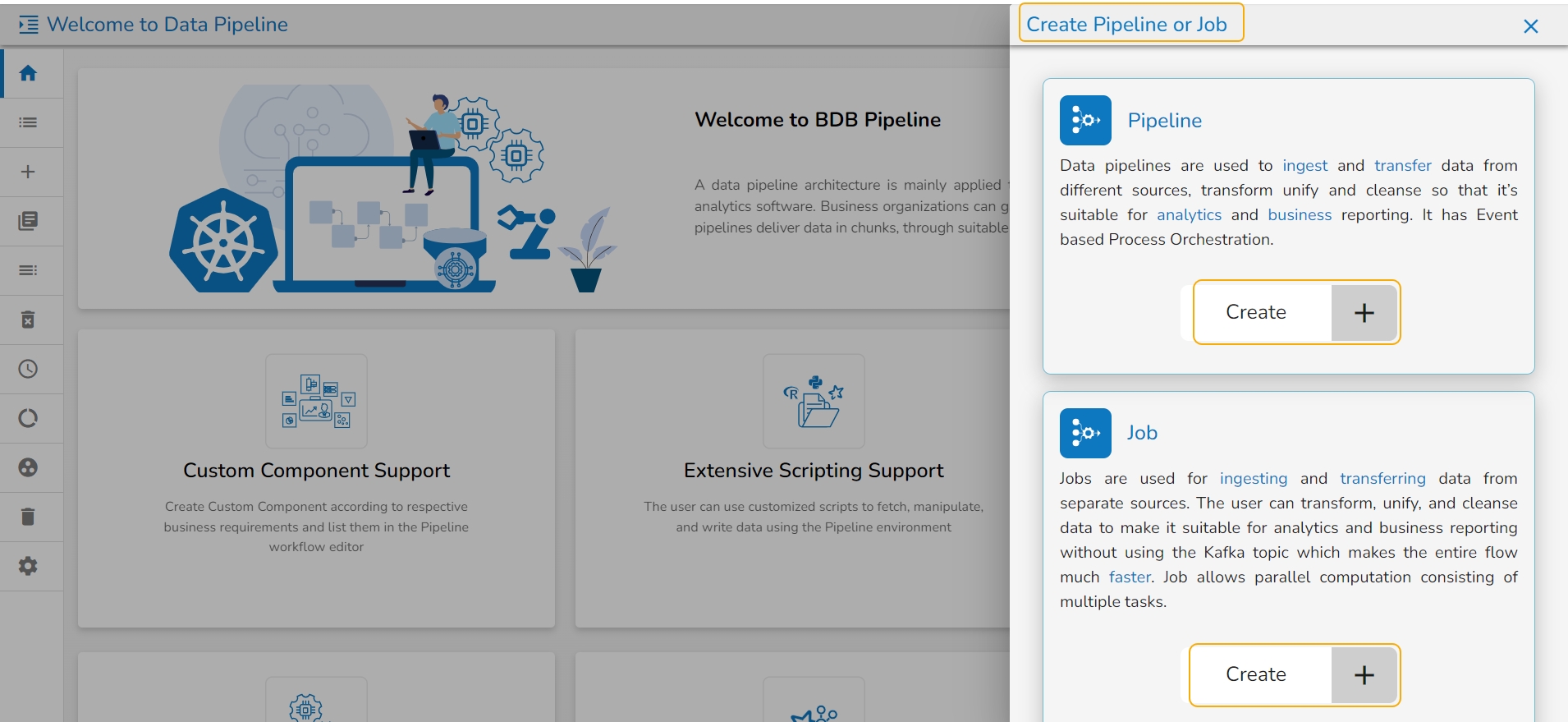

This section provides the steps involved in creating a new Pipeline flow.

Check out the below-given illustration on how to create a new pipeline.

Navigate to the Create Pipeline or Job interface.

Click the Create option provided for Pipeline.

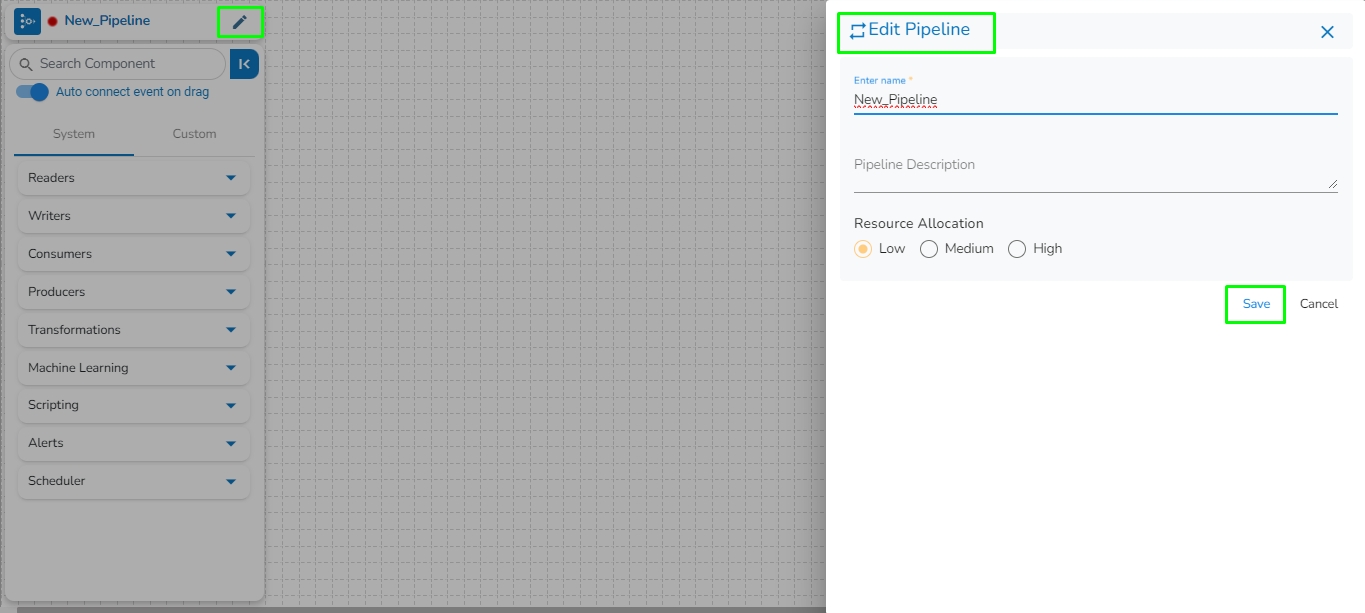

The New Pipeline window opens asking for the basic information.

Enter a name for the new Pipeline.

Describe the Pipeline (Optional).

Select a resource allocation option using the radio button- the given choices are:

Low

Medium

High

Please Note: This feature is used to deploy the pipeline with high, medium, or low-end configurations according to the velocity and volume of data that the pipeline must handle. All the components saved in the pipeline are then allocated resources based on the selected Resource Allocation option depending on the component type (Spark and Docker).

Click the Save option to create the pipeline. By clicking the Save option, the user gets redirected to the pipeline workflow editor.

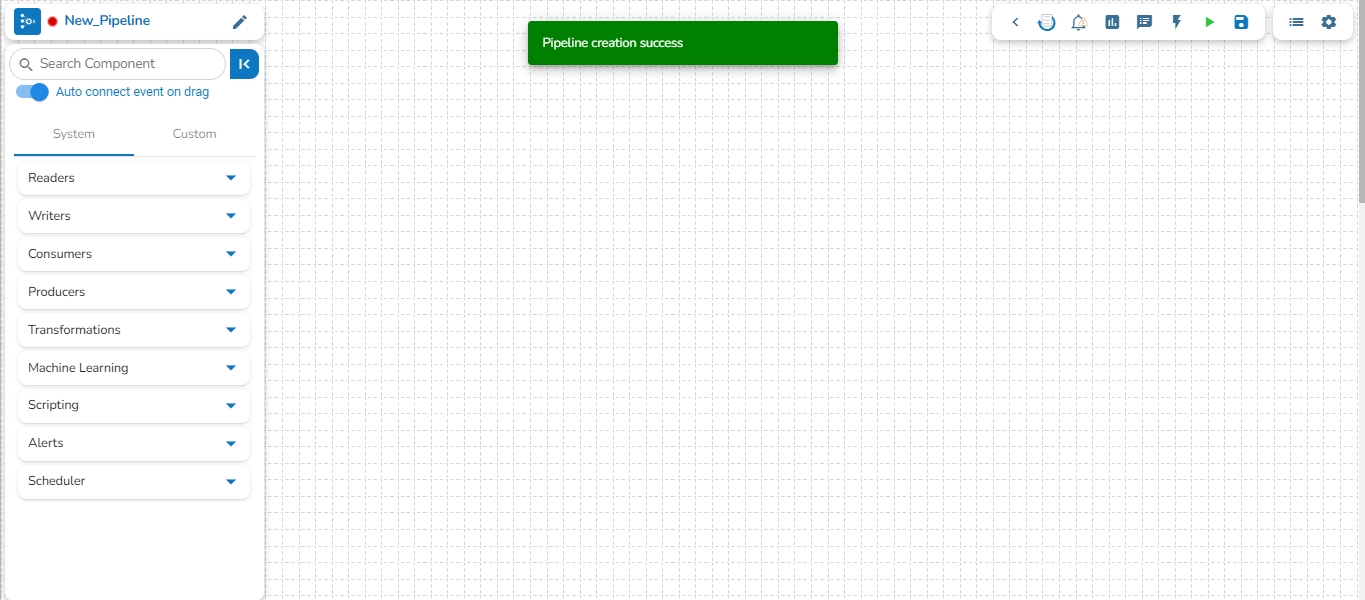

A success message appears to confirm the creation of a new pipeline.

The Pipeline Editor page opens for the newly created pipeline.

Resource allocation can be changed anytime by clicking on top left edit icon near pipeline name.

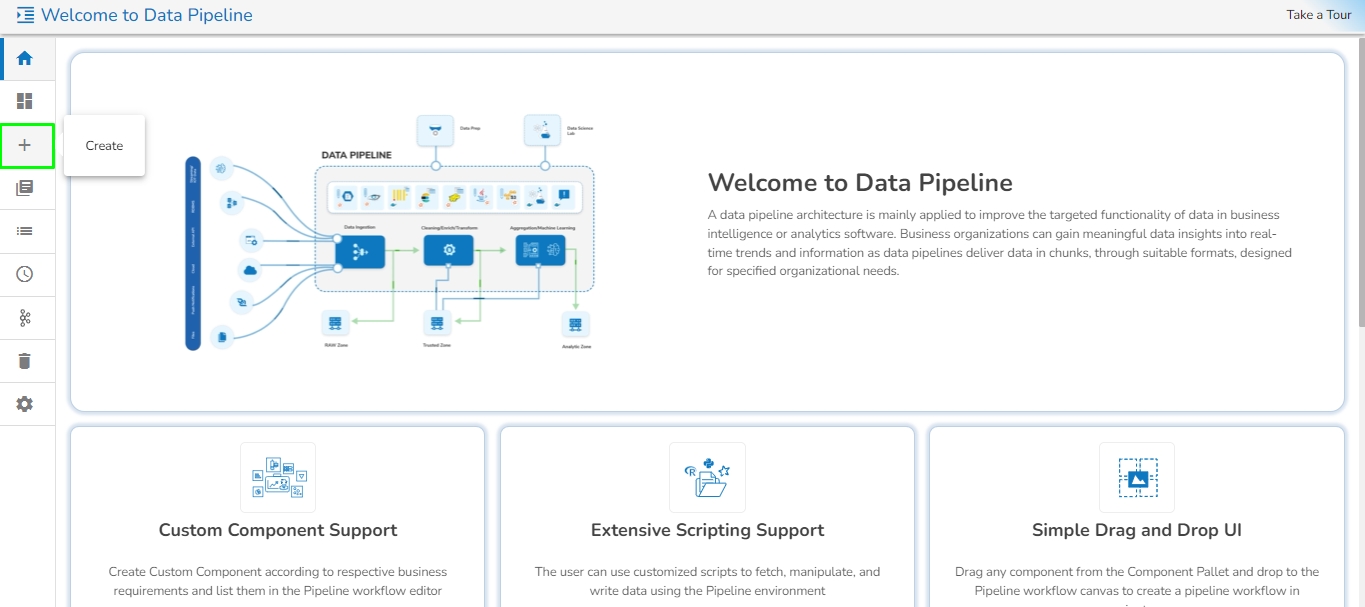

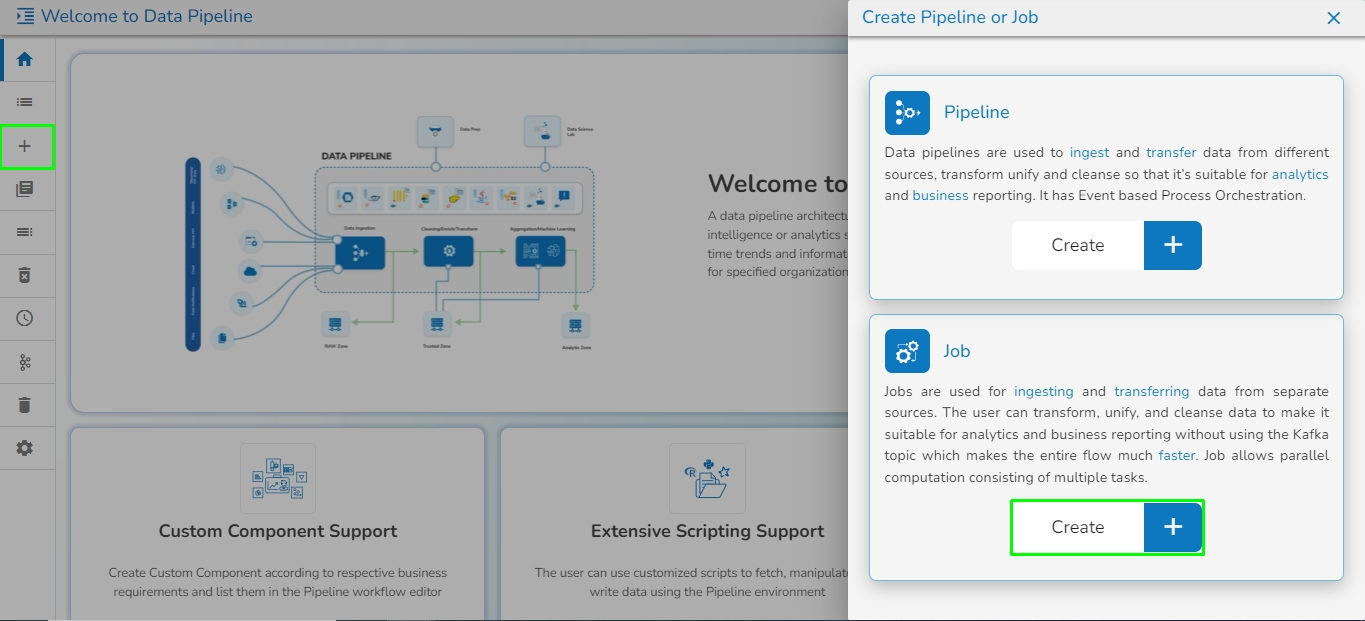

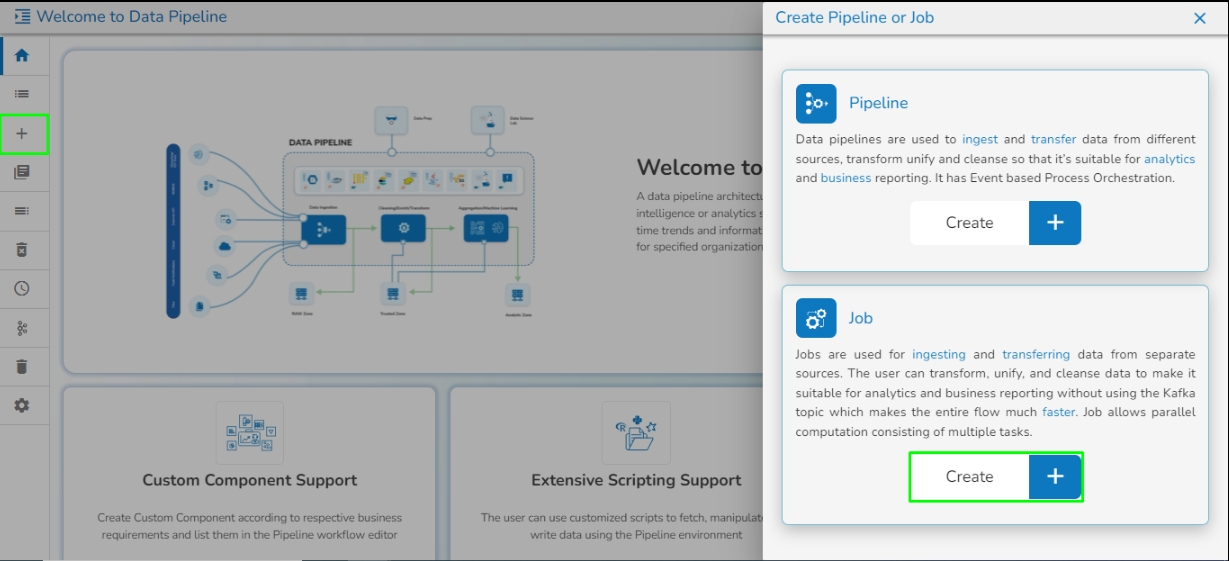

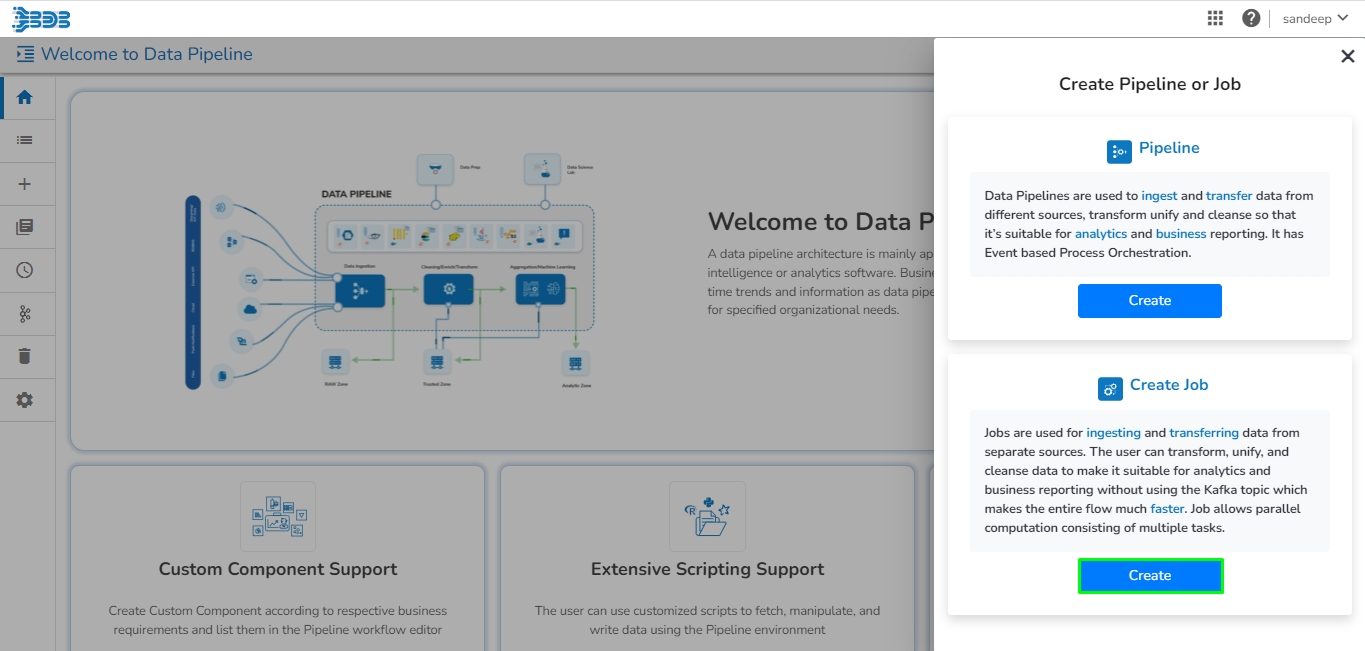

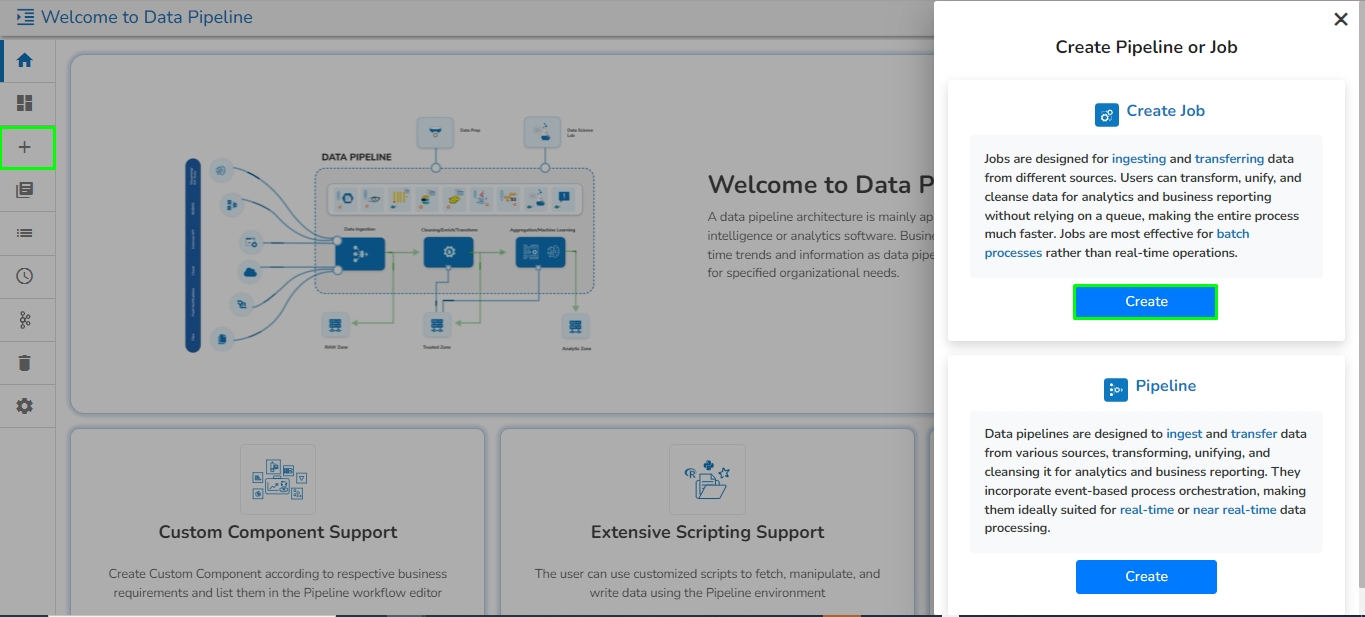

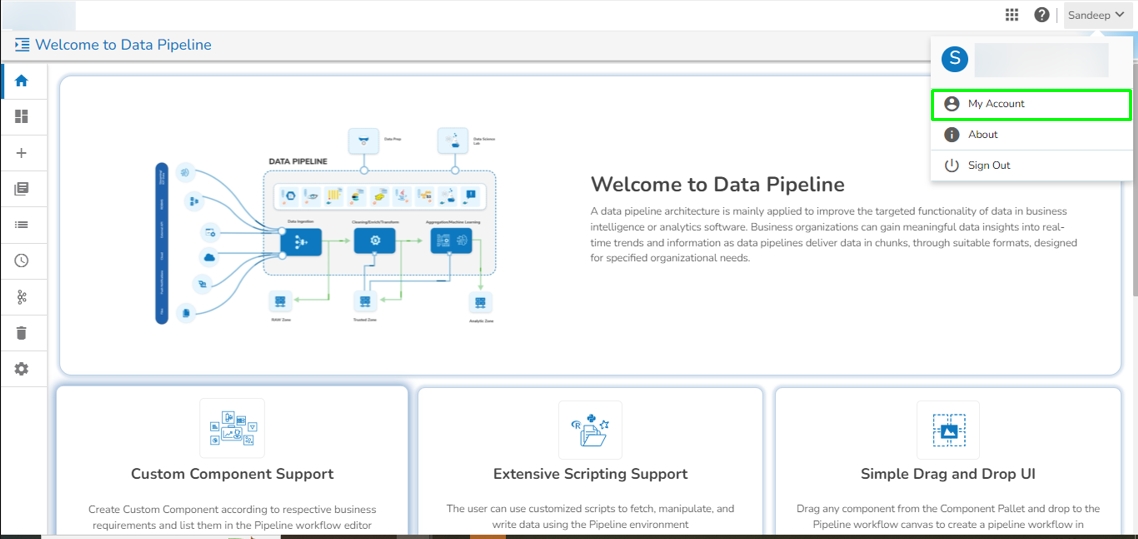

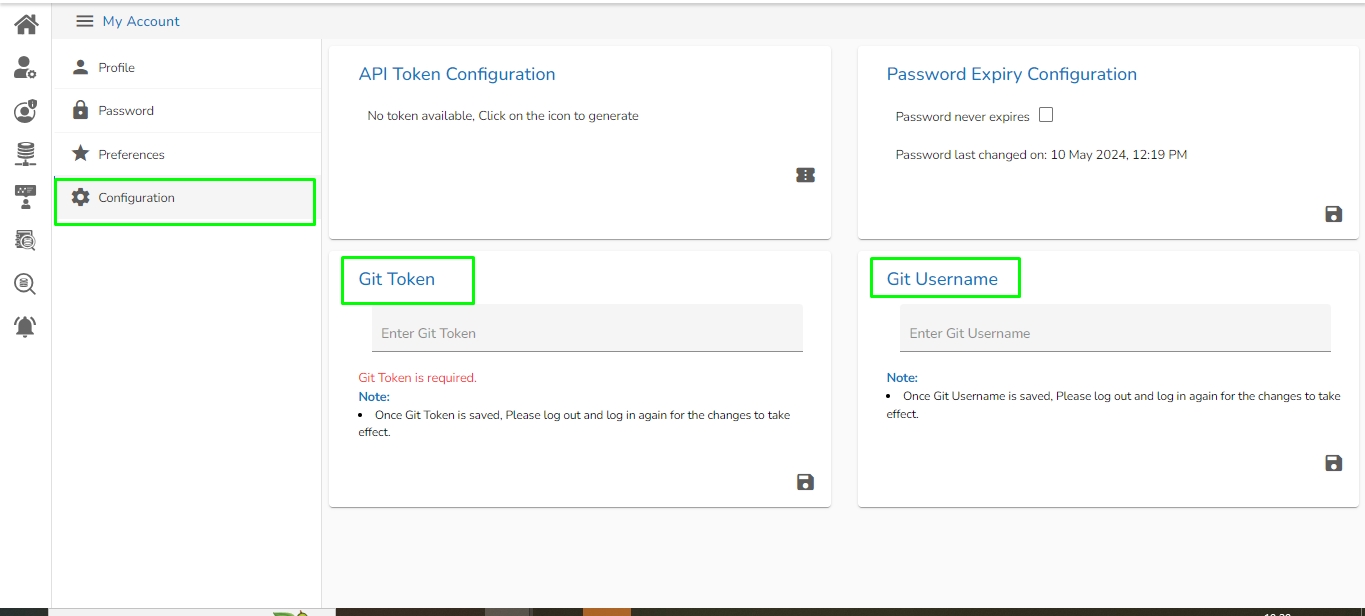

Create a new Pipeline or Job based on the selected create option.

The Pipeline Homepage offers the Create option to initiate with Pipeline or Job creation based on the need of user.

Navigate to the Pipeline List page.

Click the Create icon.

The Create Pipeline or Job user interface opens prompting to create a new Pipeline and Job.

Please Note: Based on your selection, the next page opens. Refer the Create a New Pipeline and Create a Job sections to get the details.

This section focuses on the Configuration tab provided for any Pipeline component.

For each component that gets deployed, we have an option to configure the resources i.e., Memory and CPU.

We have two deployment types:

Docker

Spark

Go through the given illustration to understand how to configure a component using the Docker deployment type.

After we save the component and pipeline, the component gets saved with the default configuration of the pipeline i.e., Low, Medium, and High. After we save the pipeline, we can see the configuration tab in the component. There are multiple things.

For the Docker components, we have the Request and Limit configurations.

We can see the CPU and Memory options to be configured.

CPU: This is the CPU configuration where we can specify the number of cores that we need to assign to the component.

Please Note: 1000 means 1 core in the configuration of docker components. When we put 100 that means 0.1 core has been assigned to the component.

Memory: This option is to specify how much memory you want to dedicate to that specific component.

Please Note: 1024 means 1GB in the configuration of the docker components.

Instances: The number of instances is used for parallel processing. If we give N no. of instances those many pods will get deployed.

Go through the below given walk-through to understand the steps to configure a component with Spark configuration type.

The Spark Components configuration is slightly different from the Docker components. When the spark components are deployed, there are two pods that come up:

Driver

Executor

Provide the Driver and executor configurations separately.

Instances: The number of instances is used for parallel processing. If we give N no. of instances in executors configuration those many executors pods will get deployed.

Please Note: Till the current release, the minimum requirement to deploy a driver is 0.1 Cores and 1 core for the executor. It can change with the upcoming versions of Spark.

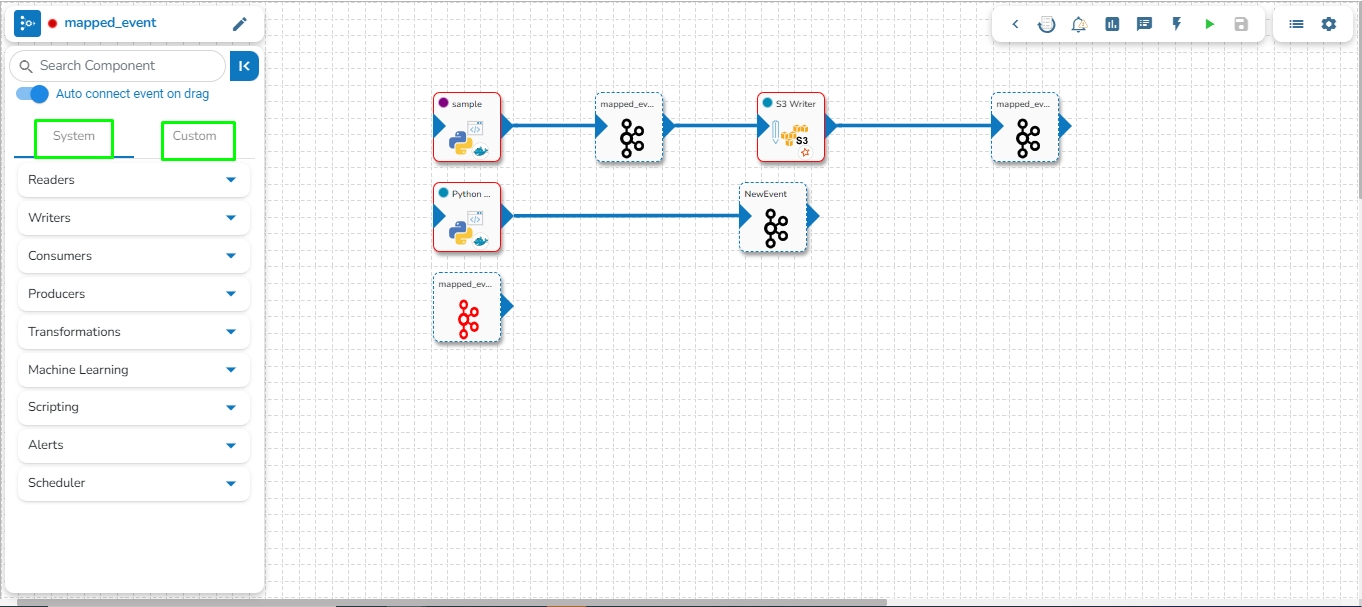

Adding components to a pipeline workflow.

Check out the below given walk-through to add components to Pipeline Workflow editor canvas.

The Component Pallet is situated on the left side of the User Interface on the Pipeline Workflow. It has the System and Custom components tabs listing the various components.

The System components are displayed in the below given image:

Once the Pipeline gets saved in the pipeline list, the user can add components to the canvas. The user can drag the required components to the canvas and configure it to create a Pipeline workflow or Data flow.

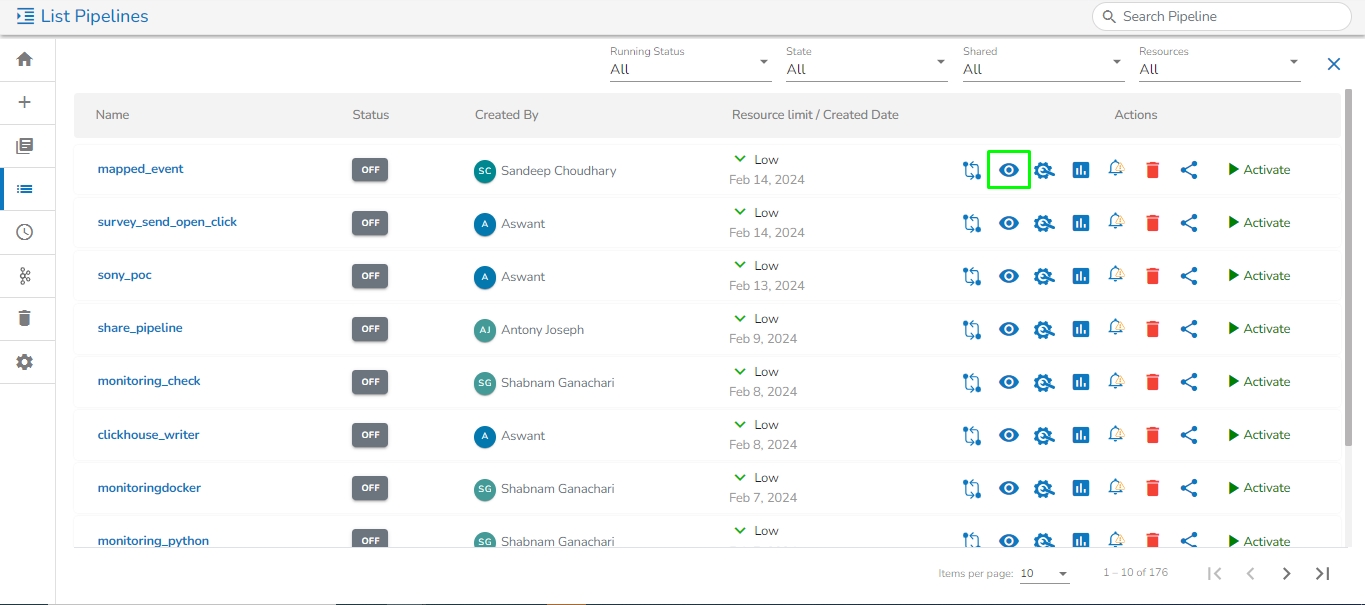

Navigate to the existing data pipeline from the List Pipelines page.

Click the View icon for the pipeline.

The Pipeline Editor opens for the selected pipeline.

Drag and drop the new required components or make changes in the existing component’s meta information or change the component configuration (E.g., the DB Reader is dragged to the workspace in the below-given image).

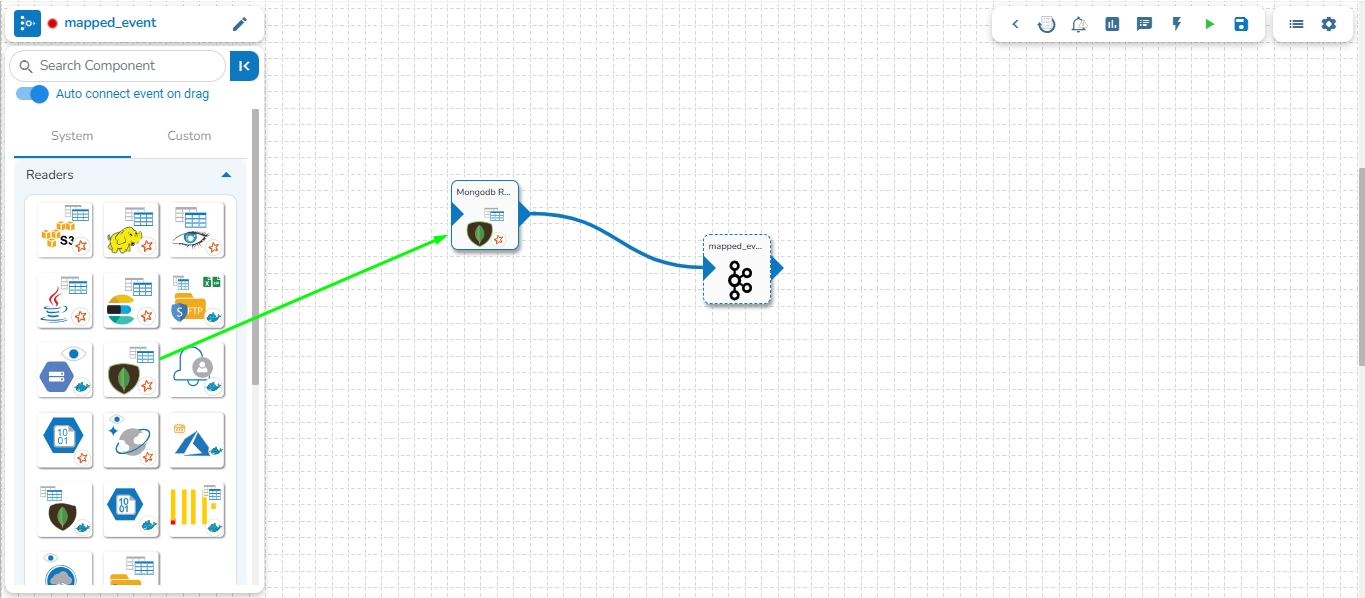

Once dragged and dropped to the pipeline workspace, components can be directly connected to the nearest Kafka Event. To enable the auto-connect feature, the user needs to ensure that the Auto connect event on drag option is enabled, which is the default setting.

Please refer to the Event page for more details on creating Kafka Events and connecting them to components using various methods.

Click on the dragged component and configure the Basic Information tab, which opens by default.

Open the Meta Information tab, which is next to the Basic Information tab, and configure it.

Make sure to click the Save Component in Storage icon to update the component details and pipeline to reflect the recent changes in the pipeline. (The user can drag and configure other required components to create the Pipeline Workflow.

Click the Update Pipeline icon to save the changes.

A success message appears to assure that the pipeline has been successfully updated.

Click the Activate Pipeline icon to activate the pipeline (It appears only after the newly created pipeline gets successfully updated).

A dialog window opens to confirm the action of pipeline activation.

Click the YES option to activate the pipeline.

A success message appears confirming the activation of the pipeline.

Another success message appears to confirm that the pipeline has been updated.

The Status for the pipeline gets changed on the Pipeline List page.

Please Note:

Click the Delete icon from the Pipeline Editor page to delete the selected pipeline. The deleted Pipeline gets removed from the Pipeline list.

Refer to the Component Panel section to get detailed information on the each Pipeline Component.

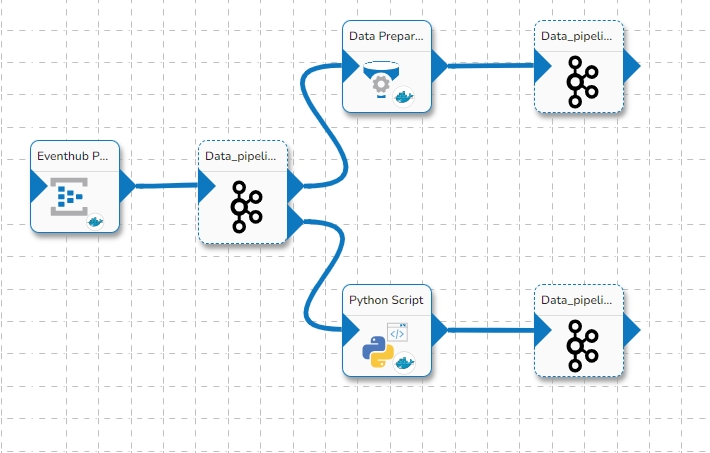

An event-driven architecture uses events to trigger and communicate between decoupled services and is common in modern applications built with microservices.

The connecting components help to assemble various pipeline components and create a Pipeline Workflow. Just click and drag the component you want to use into the editor canvas. Connect the component output to a Kafka Event.

Once a Pipeline is created the User Interface of the Data Pipeline provides a canvas for the user to build the data flow (Pipeline Workflow).The Pipeline assembling process can be divided into two parts as mentioned below:

Adding Components to the Canvas

Adding Connecting Components (Events) to create the Data flow/ Pipeline workflow

Each components inside a pipeline are fully decoupled. Each component acts as a producer and consumer of data. The design is based on event-driven process orchestration. For passing the output of one component to another component we need an Intermediatory event. An event-driven architecture contains three items:

Event Producer [Components]

Event Stream [Event (Kafka topic/ DB Sync)

Event Consumer [Components]

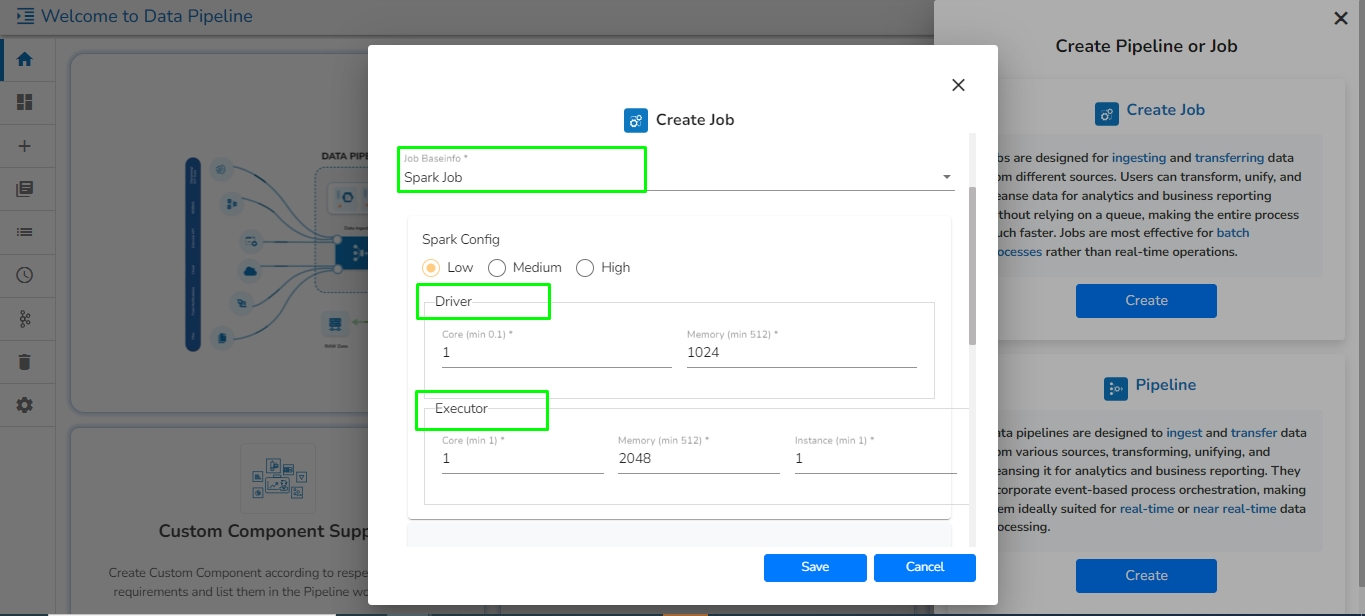

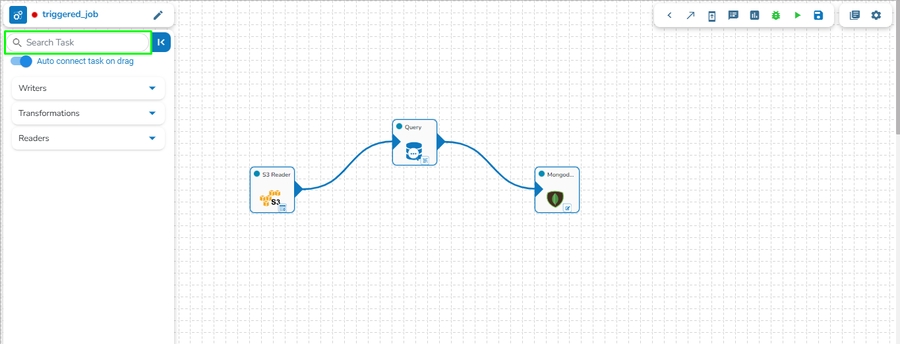

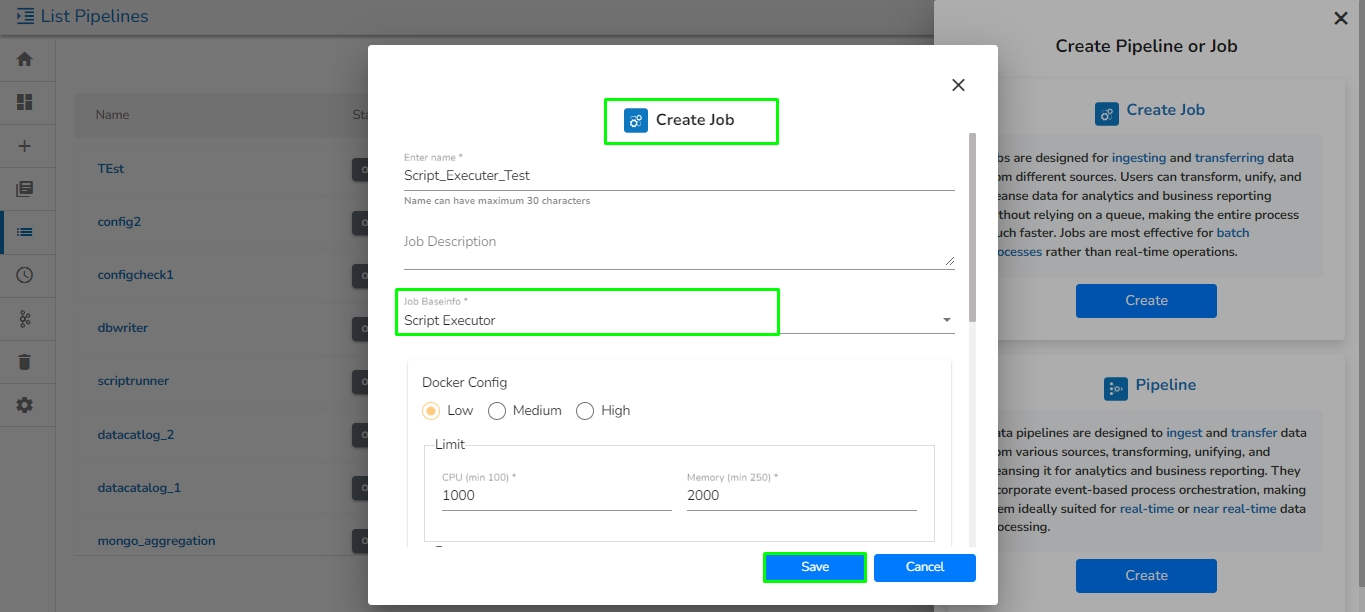

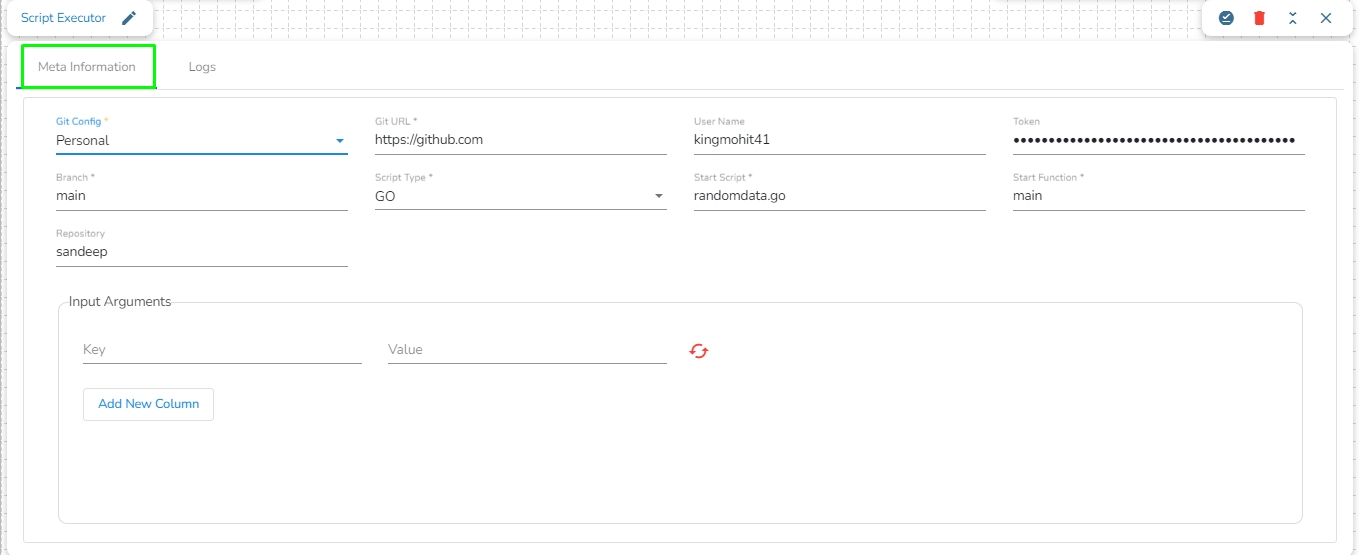

This section provides detailed information on the Jobs to make your data process faster.

Jobs are used for ingesting and transferring data from separate sources. The user can transform, unify, and cleanse data to make it suitable for analytics and business reporting without using the Kafka topic which makes the entire flow much faster.

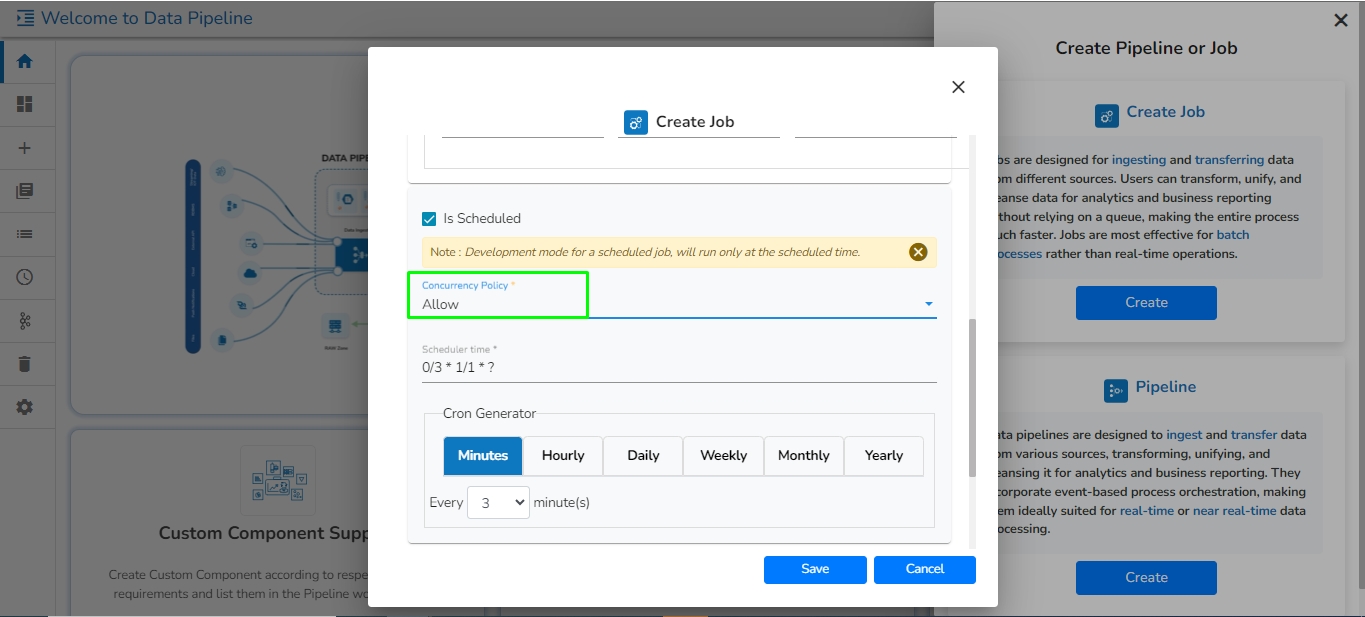

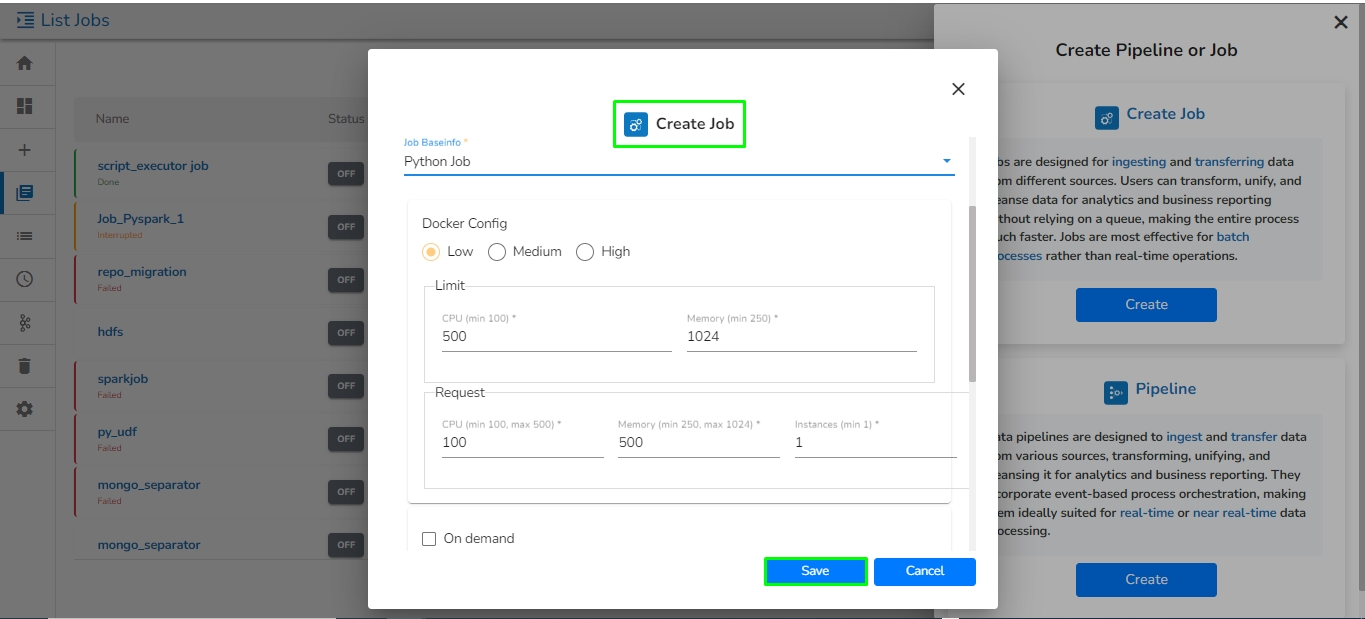

Check out the given demonstration to understand how to create and activate a job.

Navigate to the Data Pipeline homepage.

Click on the Create icon.

Navigate to the Create Pipeline or Job interface.

The New Job dialog box appears redirecting the user to create a new Job.

Enter a name for the new Job.

Describe the Job(Optional).

Job Baseinfo: In this field, there are three options:

Trigger By: There are 2 options for triggering a job on success or failure of a job:

Success Job: On successful execution of the selected job the current job will be triggered.

Failure Job: On failure of the selected job the current job will be triggered.

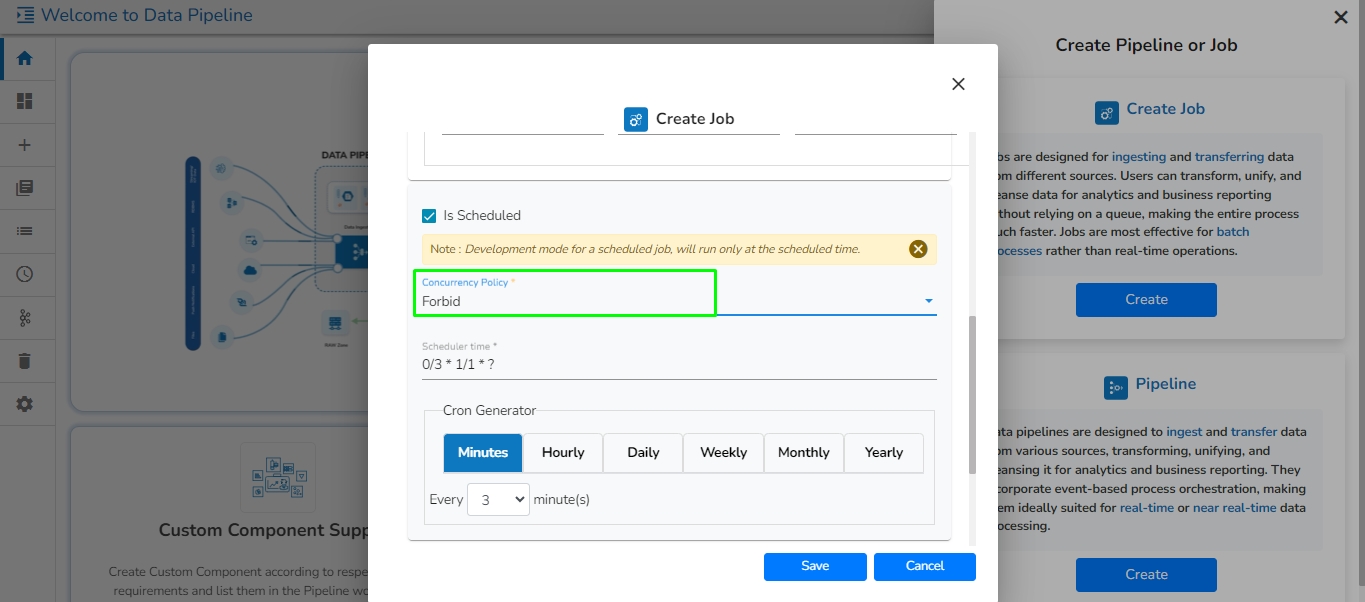

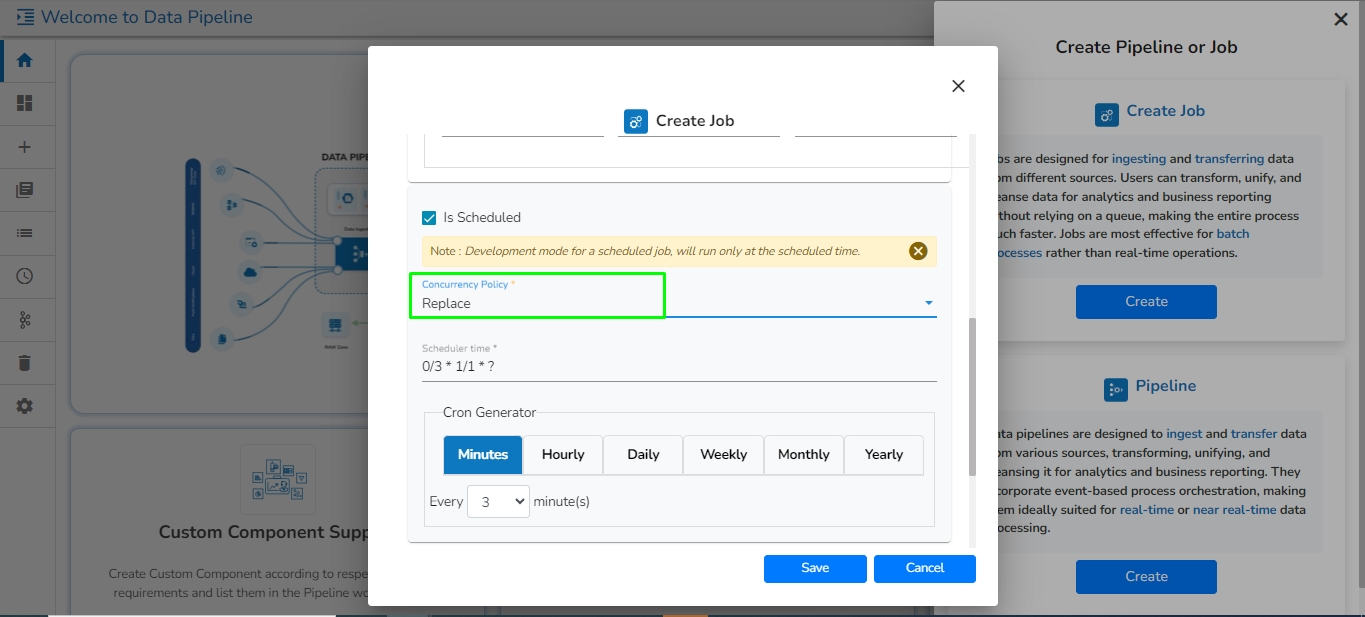

Is Scheduled?

A job can be scheduled for a particular timestamp. Every time at the same timestamp the job will be triggered.

Job must be scheduled according to UTC.

Concurrency Policy: Concurrency policy schedulers are responsible for managing the execution and scheduling of concurrent tasks or threads in a system. They determine how resources are allocated and utilized among the competing tasks. Different scheduling policies exist to control the order, priority, and allocation of resources for concurrent tasks.

Please Note:

Concurrency Policy will appear only when "Is Scheduled" is enabled.

If the job is scheduled, then the user has to activate it for the first time. Afterward, the job will automatically be activated each day at the scheduled time.

There are 3 Concurrency Policy available:

Allow: If a job is scheduled for a specific time and the first process is not completed before the next scheduled time, the next task will run in parallel with the previous tasks.

Forbid: If a job is scheduled for a specific time and the first process is not completed before the next scheduled time, the next task will wait until all the previous tasks are completed.

Replace: If a job is scheduled for a specific time and the first process is not completed before the next scheduled time, the previous task will be terminated and the new task will start processing.

Spark Configuration

Select a resource allocation option using the radio button. The given choices are:

Low

Medium

High

This feature is used to deploy the Job with high, medium, or low-end configurations according to the velocity and volume of data that the Job must handle.

Also, provide the resources to Driver and Executer according to the requirement.

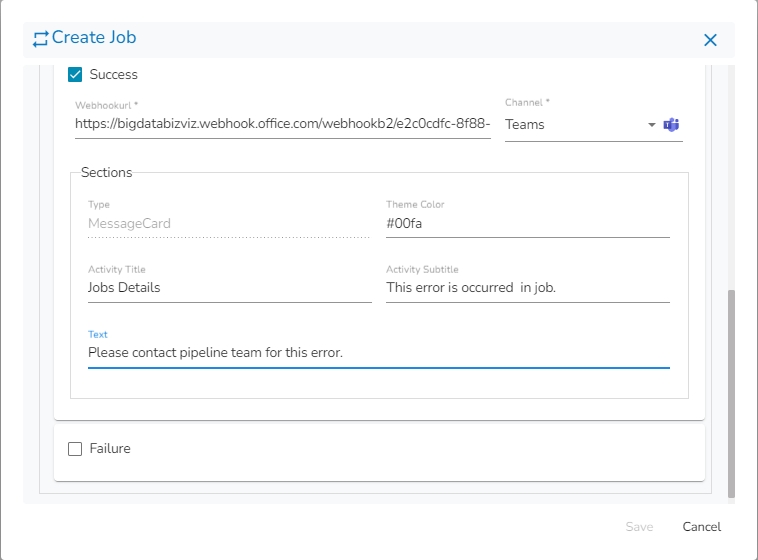

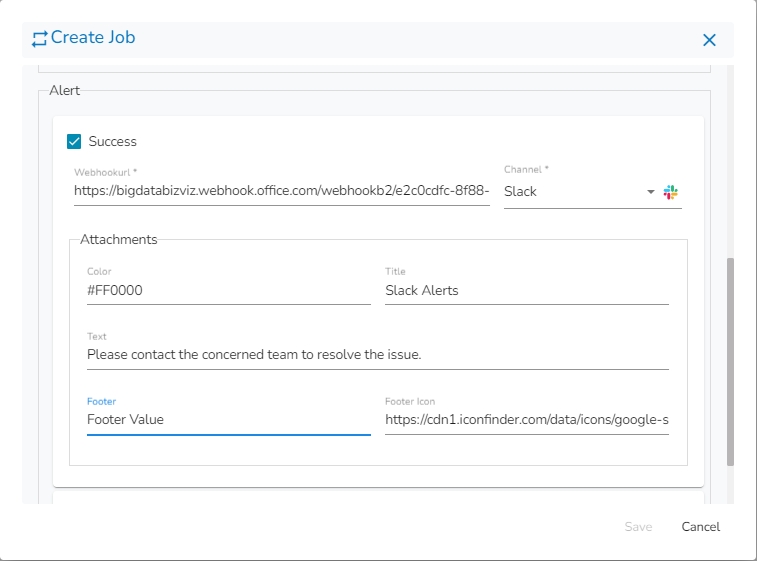

Alert: There are 2 options for sending an alert:

Success: On successful execution of the configured job, the alert will be sent to selected channel.

Failure: On failure of the configured job, the alert will be sent to selected channel.

Please go through the given link to configure the Alerts in Job: Job Alert

Click the Save option to create the job.

A success message appears to confirm the creation of a new job.

The Job Editor page opens for the newly created job.

Please Note:

The Trigger by feature will not work if the selected Trigger by job is running in the Development mode. Trigger by feature will only work when the selected Trigger by Job is activated.

By clicking the Save option, the user gets redirected to the job workflow editor.

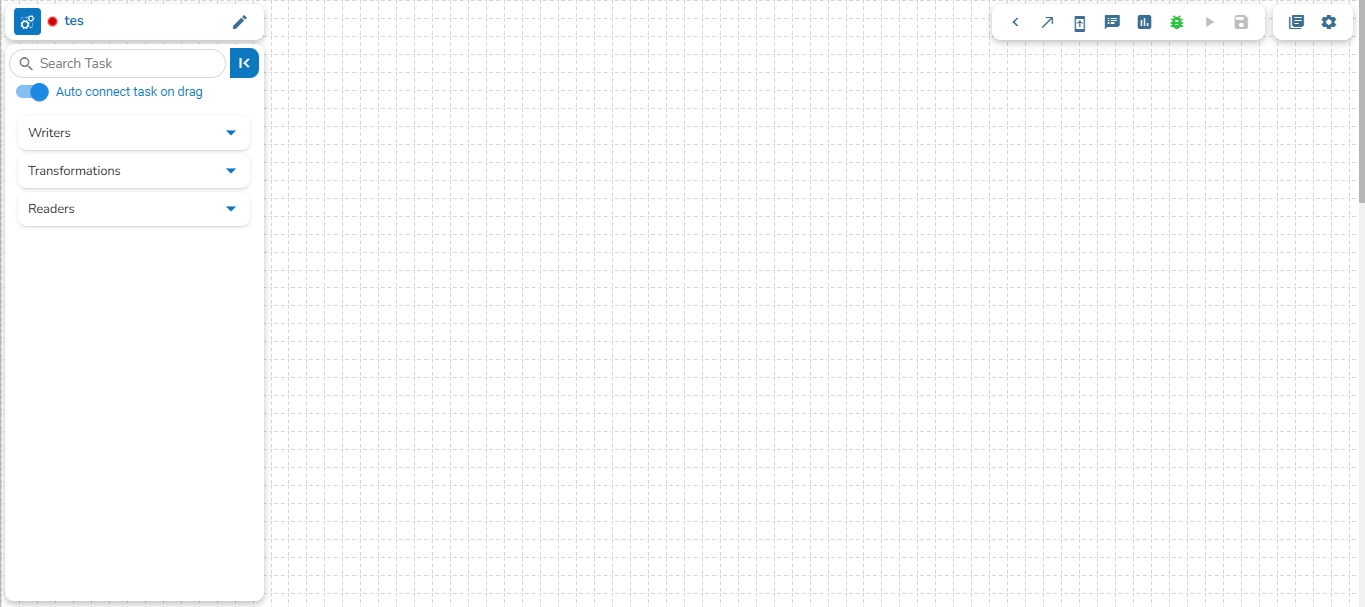

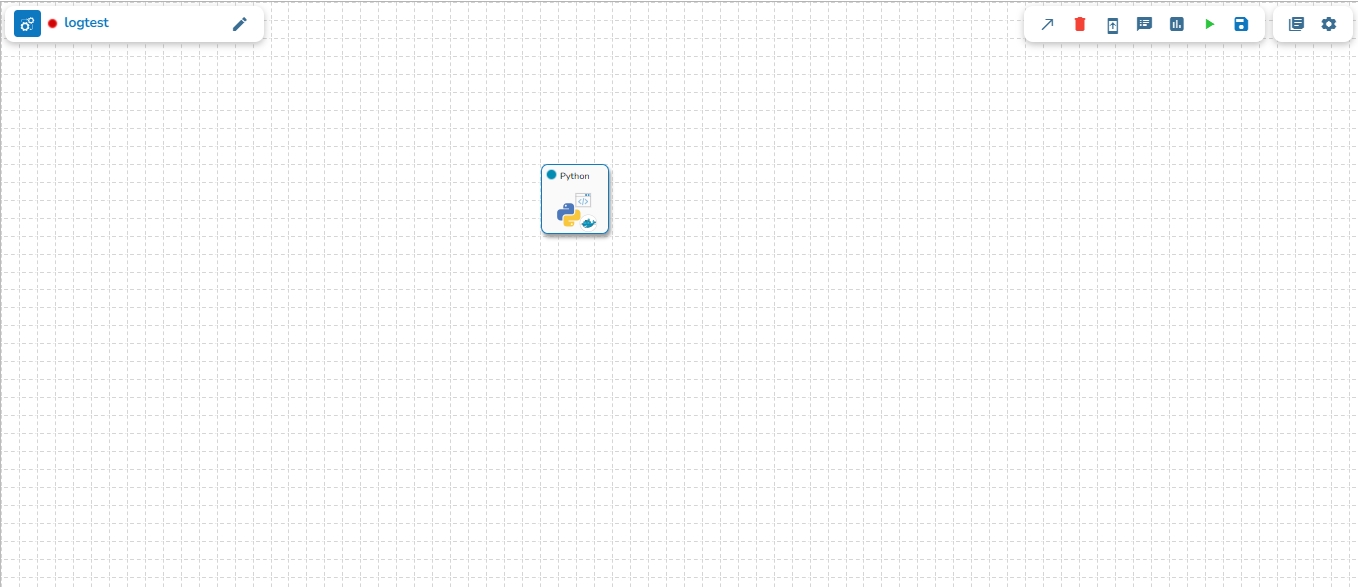

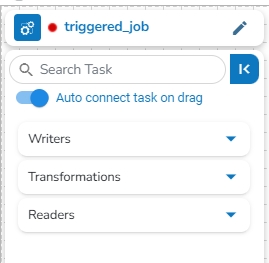

The Job Editor Page provides the user with all the necessary options and components to add a task and eventually create a Job workflow.

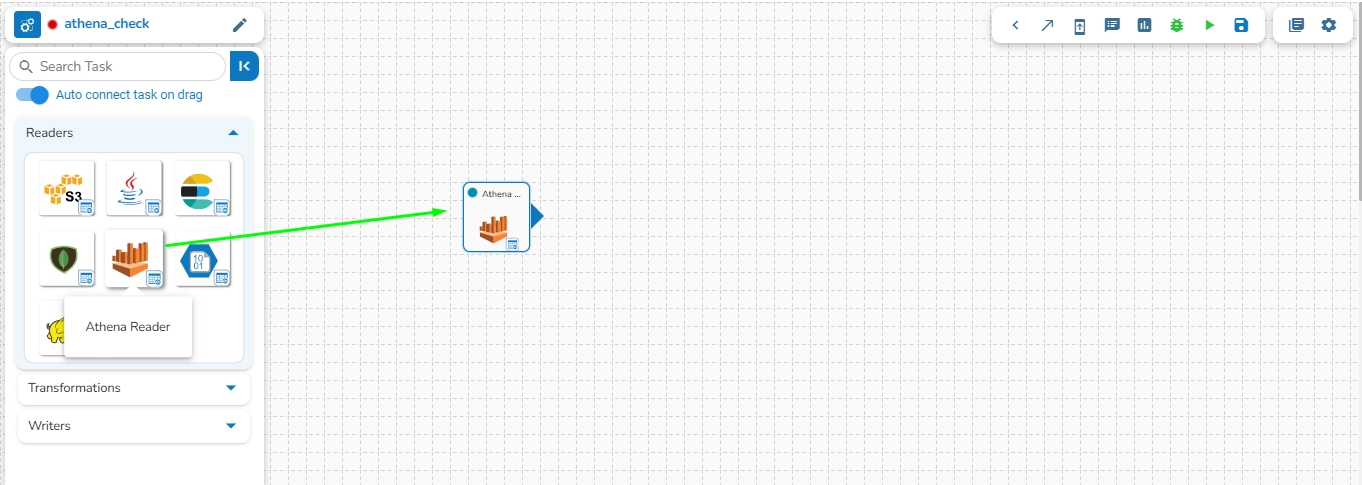

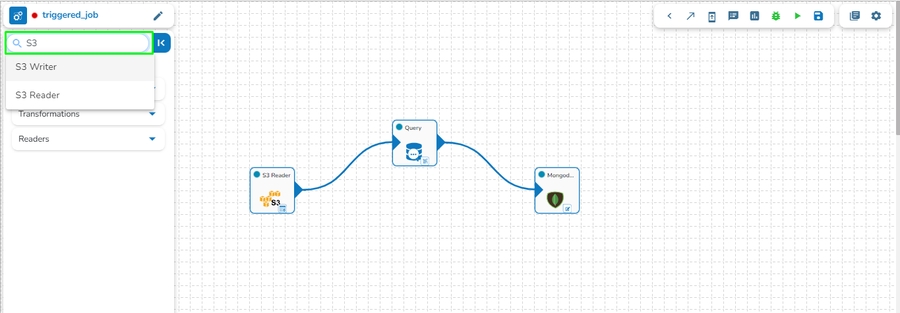

Once the Job gets saved in the Job list, the user can add a Task to the canvas. The user can drag the required tasks to the canvas and configure it to create a Job workflow or dataflow.

The Job Editor appears displaying the Task Pallet containing various components mentioned as Tasks.

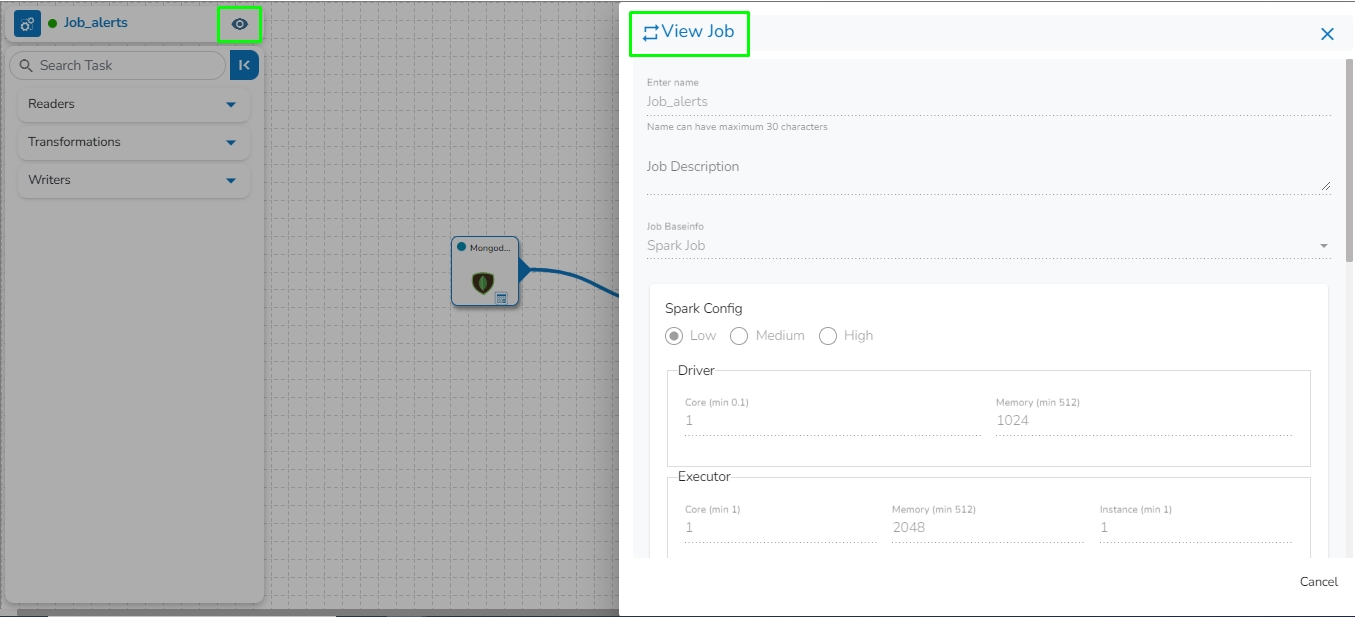

Navigate to the Job List page.

It will list all the jobs and display the Job type if it is Spark Job or PySpark Job in the type column.

Select a Job from the displayed list.

Click the View icon for the Job.

Please Note: Generally, the user can perform this step-in continuation to the Job creation, but if the user has come out of the Job Editor the above steps can help to access it.

The Job Editor opens for the selected Job.

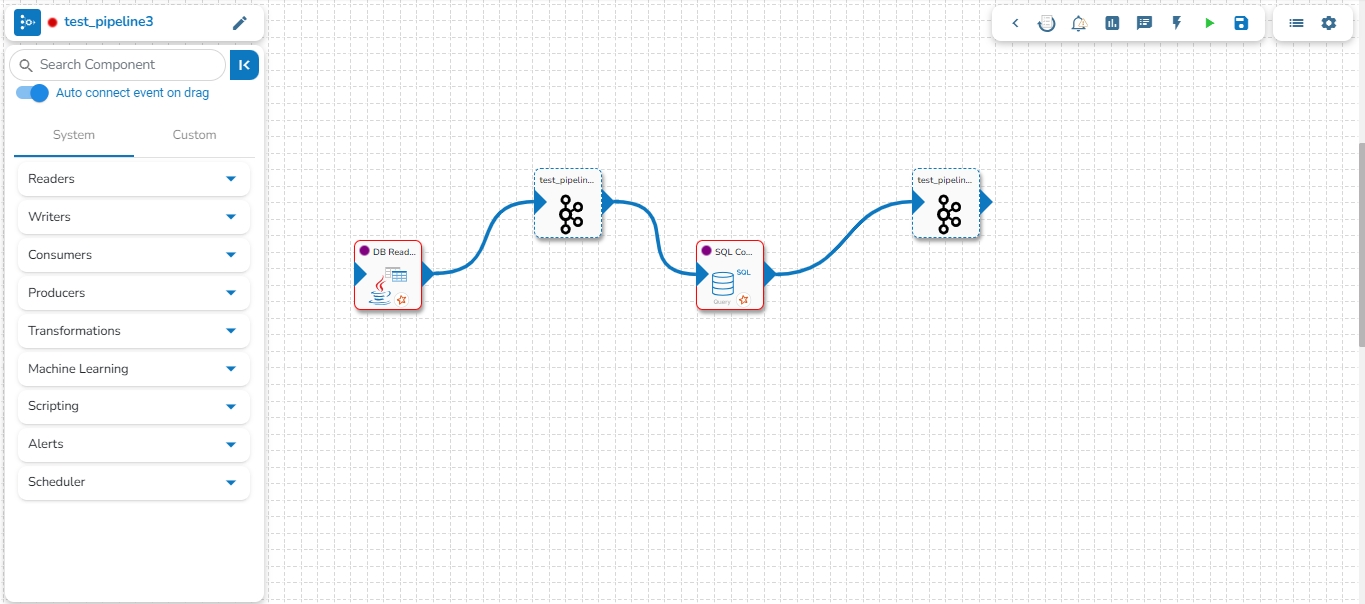

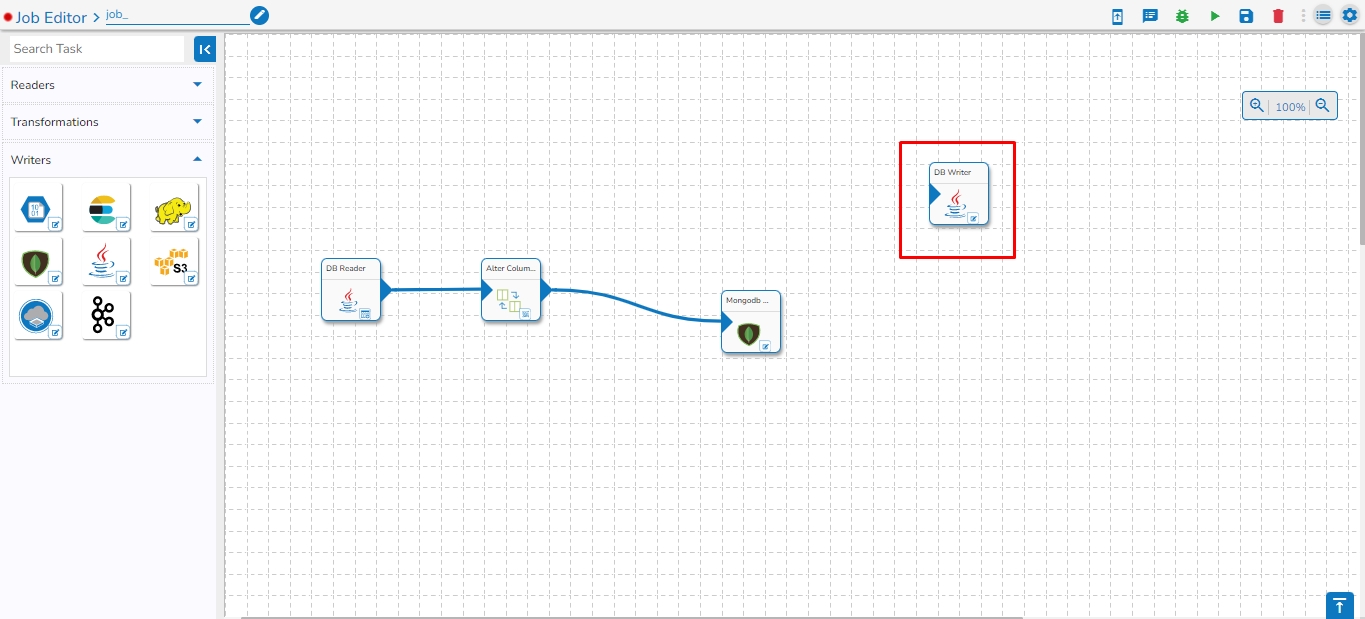

Drag and drop the new required task, make changes in the existing task’s meta information, or change the task configuration as the requirement. (E.g., the DB Reader is dragged to the workspace in the below-given image):

Click on the dragged task icon.

The task-specific fields open asking the meta-information about the dragged component.

Open the Meta Information tab and configure the required information for the dragged component.

Click the given icon to validate the connection.

Click the Save Task in Storage icon.

A notification message appears.

A dialog window opens to confirm the action of job activation.

Click the YES option to activate the job.

A success message appears confirming the activation of the job.

Once the job is activated, the user can see their job details while running the job by clicking on the View icon; the edit option for the job will be replaced by the View icon when the job is activated.

Please Note:

If the job is not running in Development mode, there will be no data in the preview tab of tasks.

The Status for the Job gets changed on the job List page when they are running in the Development mode or it is activated.

Users can get a sample of the task data under the Preview Data tab provided for the tasks in the Job Workflows.

Navigate to the Job Editor page for a selected job.

Open a task from where you want to preview records.

Click the Preview Data tab to view the content.

Please Note:

Users can preview, download, and copy up to 10 data entries.

Click the Download icon to download the data in CSV, JSON, or Excel format.

Click on the Copy option to copy the data as a list of dictionaries.

Users can drag and drop column separators to adjust columns' width, allowing personalized data view.

This feature helps accommodate various data lengths and user preferences.

The event data is displayed in a structured table format.

The table supports sorting and filtering to enhance data usability. The previewed data can be filtered based on the Latest, Beginning, and Timestamp options.

The Timestamp filter option redirects the user to select a timestamp from the Time Range window. The user can select a start and end date or choose from the available time ranges to apply and get a data preview.

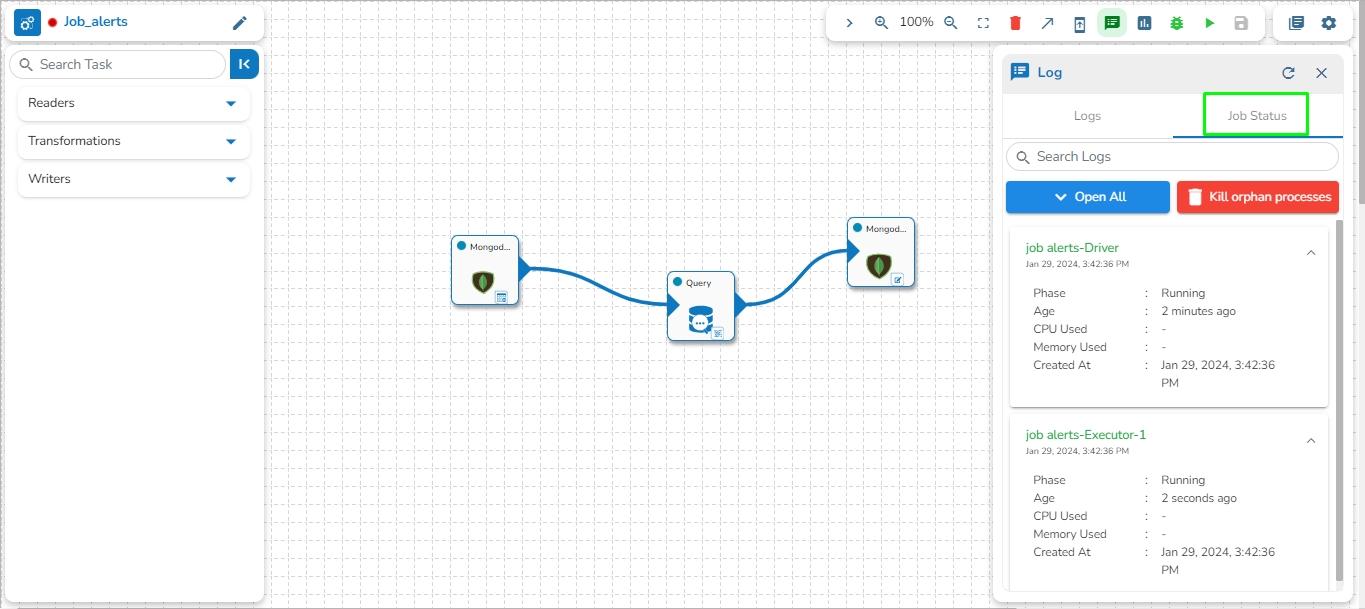

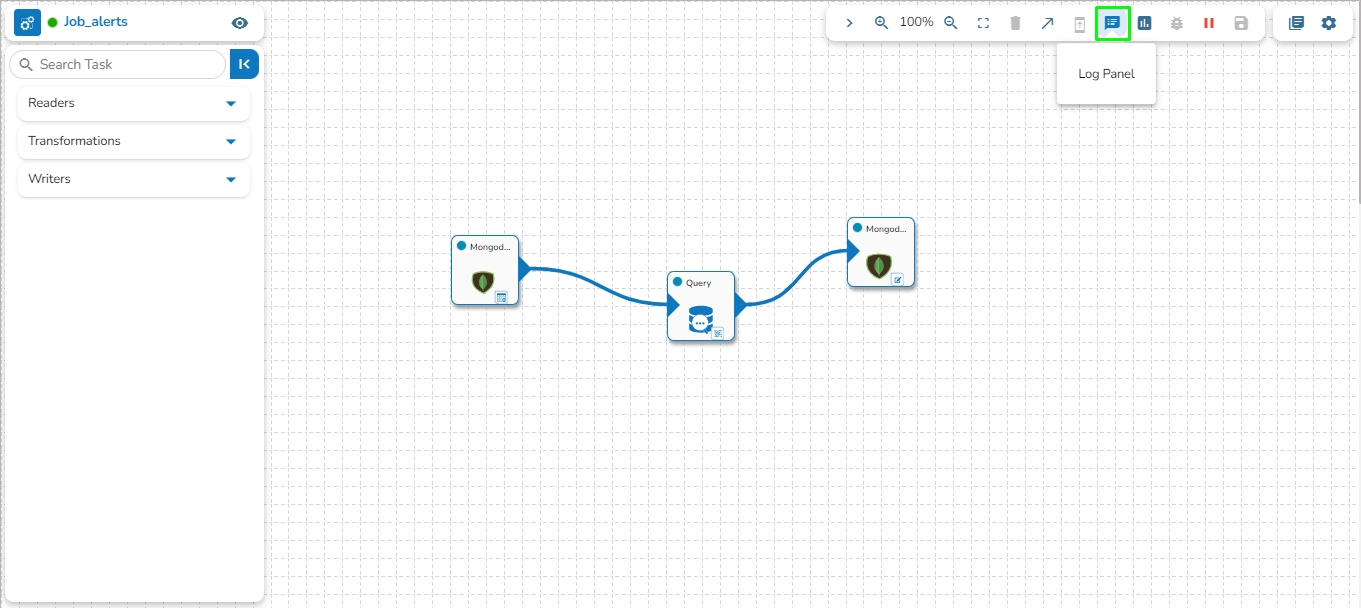

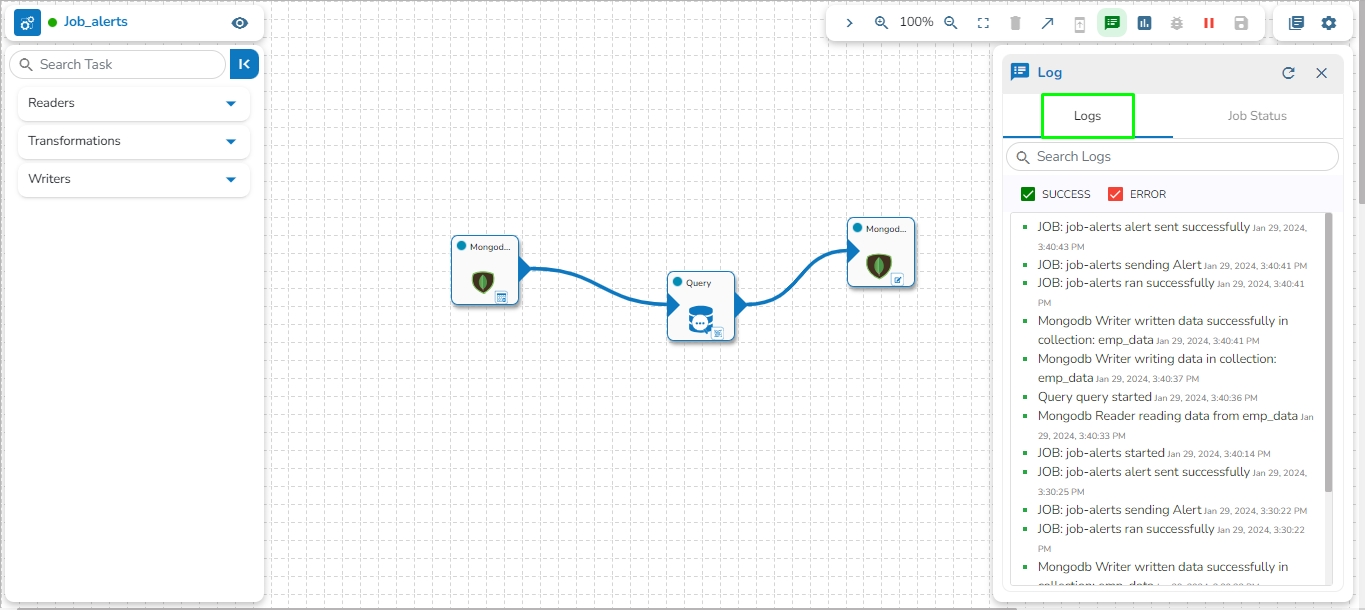

The Toggle Log Panel displays the Logs and Advanced Logs tabs for the Job Workflows.

Navigate to the Job Editor page.

Click the Toggle Log Panel icon on the header.

A panel Toggles displaying the collective logs of the job under the Logs tab.

Select the Job Status tab to display the pod status of the complete Job.

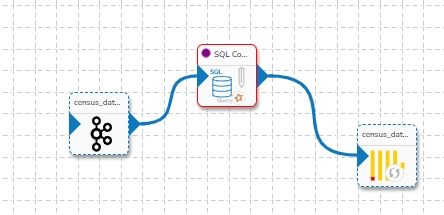

Please Note: If any orphan task is used in the Job Editor Workspace that is not in use, it will cause failure for the entire Job. So, avoid using any orphan task in the Job. Please see the image below, for reference. In the image below the highlighted DB writer task is an orphan task and if the Job is activated, then this Job will fail because the orphan DB writer task is not getting any input. Please avoid the use of an orphan task inside the Job Editor workspace.

Navigate to the Job Editor page.

The Job Version update button will display a red dot indicating that new updates are available for the selected job.

The Confirm dialog box appears.

Click the YES option.

After the job is upgraded, the Upgrade Job Version button gets disabled. It will display that the job is up to date and no updates are available.

A Kafka event refers to a single piece of data or message that is exchanged between producers and consumers in a Kafka messaging system. Kafka events are also known as records or messages. They typically consist of a key, a value, and metadata such as the topic and partition. Producers publish events to Kafka topics, and consumers subscribe to these topics to consume events. Kafka events are often used for real-time data streaming, messaging, and event-driven architectures.

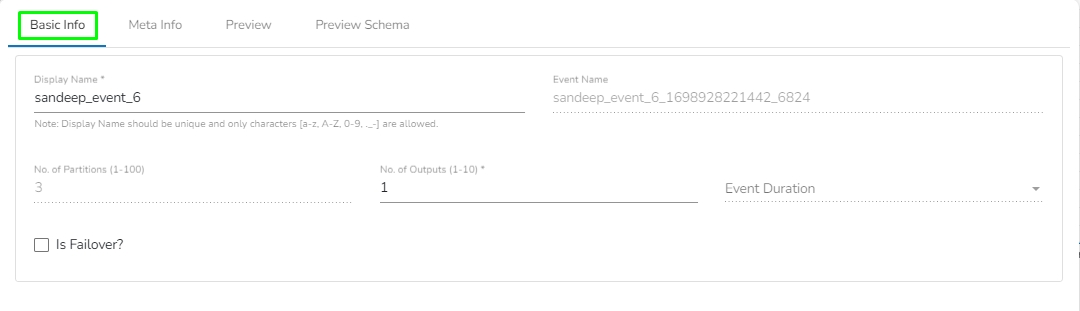

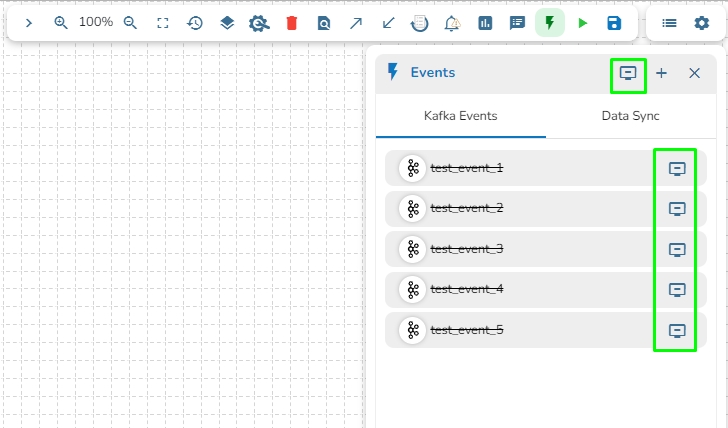

Check out the given illustration to understand how to create a Kafka Event.

Click on the Event Panel option in the pipeline toolbar and Click on Add New Event icon.

The New Event window opens.

Provide a name for the new Event.

Select Event Duration: Select an option from the below-given options.

Short (4 hours)

Medium (8 hours)

Half Day (12 hours)

Full Day (24 hours)

Long (48 hours)

Week (168 hours)

Please Note: The event data gets erased after 7 days if no duration option is selected from the available options. The Offsets expire as well.

No. of Partitions: Enter a value between 1 to 100. The default number of partitions is 3.

No. of Outputs: Define the number of outputs using this field.

Is Failover: Enable this option to create the event as the Failover Event. If a Failover Event is created, it must be mapped with a component to retrieve failed data from that component.

Click the "Add Event" option.

The Event will be created successfully, and the newly created Event is added to the Kafka Events tab in the Events panel.

Once the Kafka Event is created, the user can drag it to the pipeline workspace and connect it to any component.

On hovering over the event in the pipeline workspace, the user can see the following information for that event.

Event Name

Duration

Number of Partitions

The user can edit the following information of the Kafka Event after dragging it to the pipeline workspace:

Display Name

No. of Outputs

Is Failover

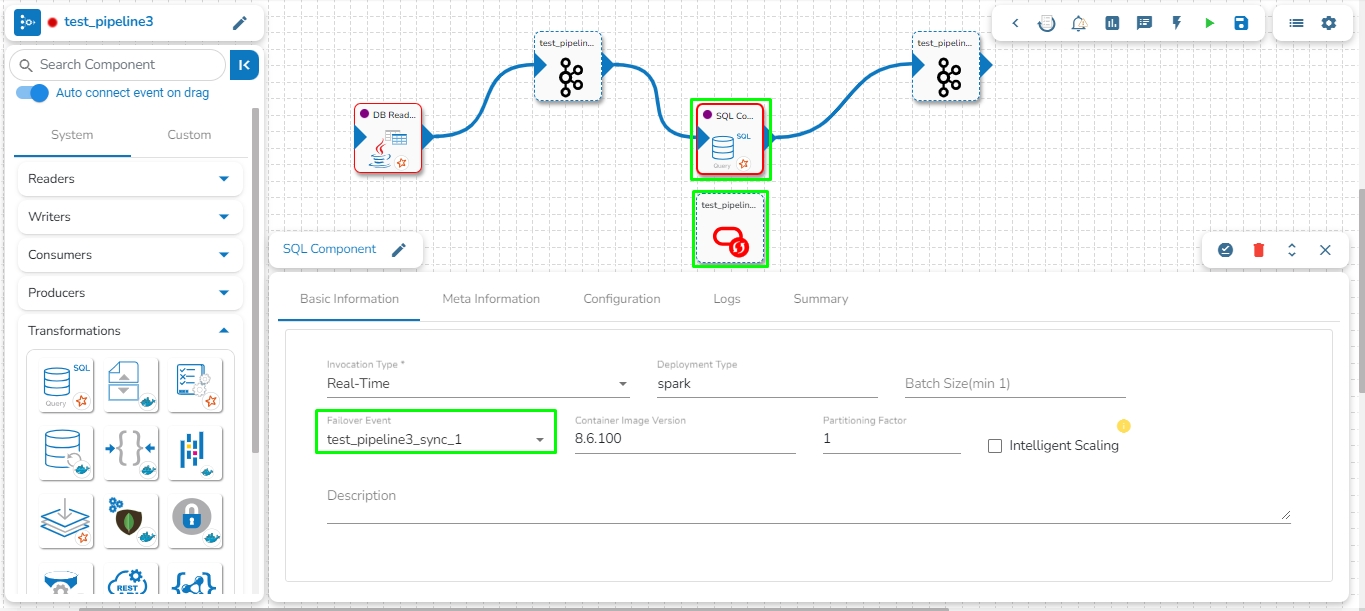

A Failover Event is designed to capture data that a component in the pipeline fails to process. In cases where a connected event's data cannot be processed successfully by a component, the failed data is sent to the Failover Event.

Follow these steps to map a Failover Event with the component in the pipeline:

Create a Failover Event following the provided steps.

Drag the Failover Event to the pipeline workspace.

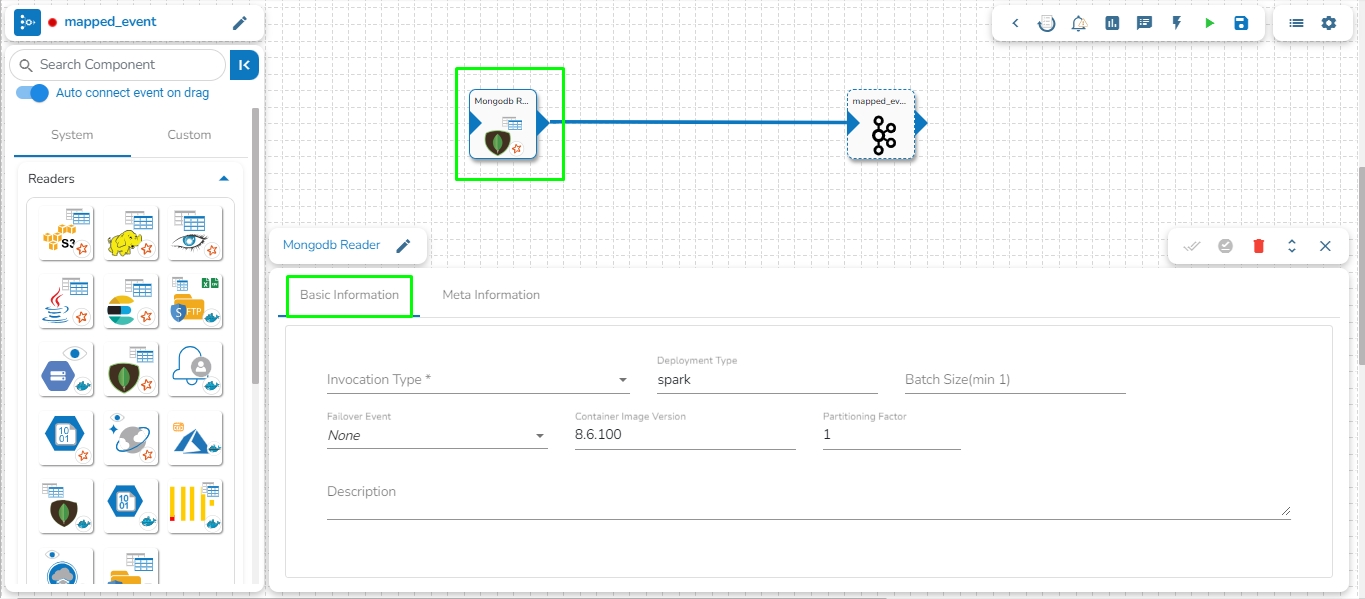

Navigate to the Basic Information tab of the desired component where the Failover Event should be mapped.

From the drop-down, select the Failover Event.

Save the component configuration.

The Failover Event is now successfully mapped. If the component encounters processing failures with data from its preceding event, the failed data will be directed to the Failover Event.

The Failover Event holds the following keys along with the failed data:

Cause: Cause of failure.

eventTime: Date and Time at which the data gets failed.

When hovering over the Failover Event, the associated component in the pipeline will be highlighted. Refer to the image below for visual reference.

Please see the below-given video on how to map a Failover Event.

This feature automatically connects the Kafka/Data Sync Event to the component when dragged from the events panel. In order to use this feature, users need to ensure that the Auto connect components on drag option is enabled from the Events panel.

Please see the below-given illustrations on auto connecting a Kafka and Data Sync Event to a pipeline component.

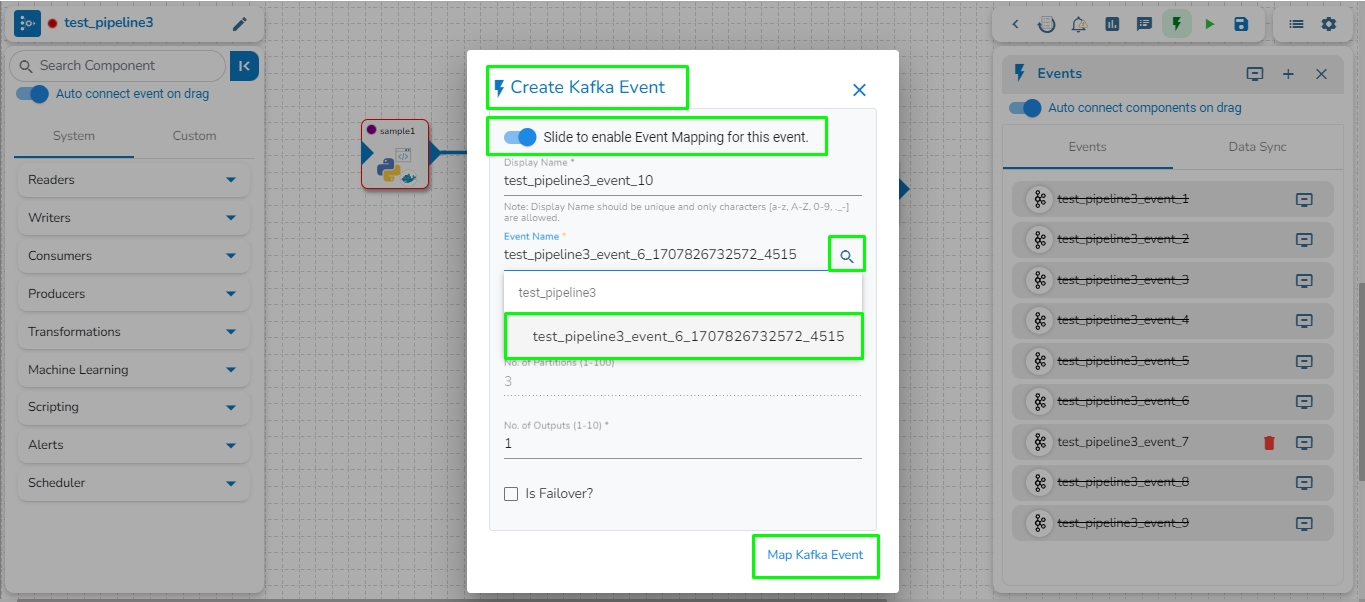

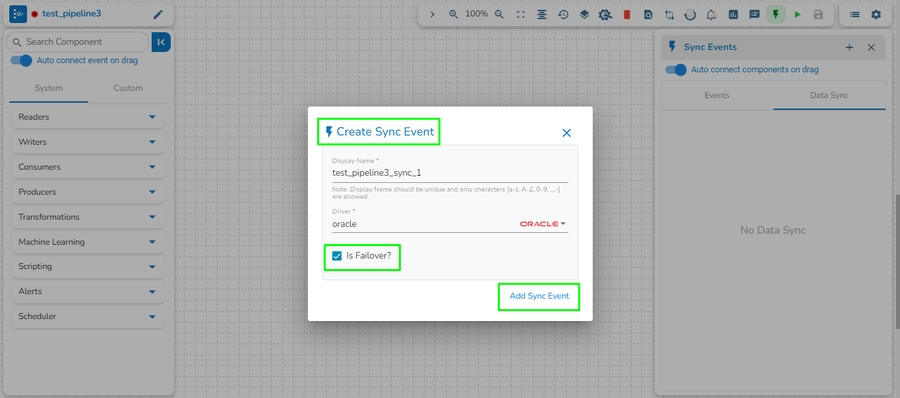

This feature allows user to directly connect a Kafka/Data Sync event to a component by right clicking on the component in the pipeline.

Follow these steps to directly connect a Kafka/Data Sync event to a component in the pipeline:

Right-click on the component in the pipeline.

Select the Add Kafka Event or Add Sync Event option.

The Create Kafka Event or Create Sync Event dialog box will open.

Enter the required details.

Click on the Add Kafka Event or Create Sync Event option.

The newly created Kafka/Data Sync Event will be directly connected to the component.

Please see the below-given illustrations on how to add a Kafka and Data Sync Event to a pipeline workflow.

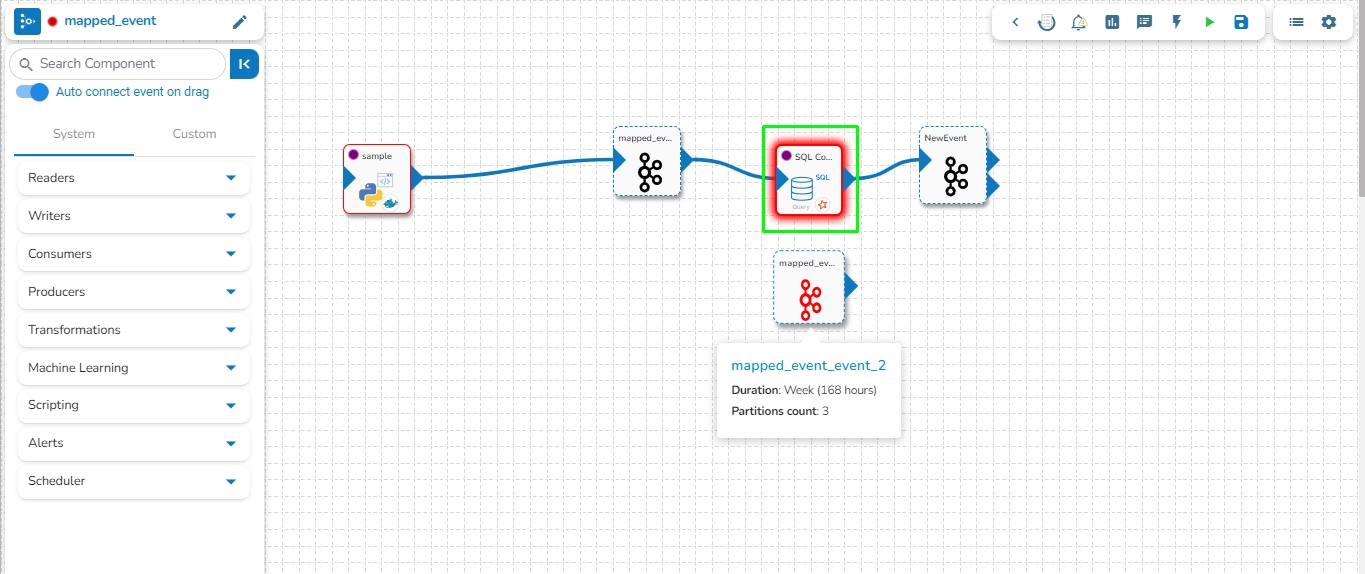

This feature enables users to map a Kafka Event with another Kafka event. In this scenario, the mapped event will have the same data as its source event.

Please see the below-given illustrations on how to create mapped Kafka Event.

Follow these steps to create a mapped event in the pipeline:

Choose the Kafka event from the pipeline as the source event for which mapped events need to be created.

Open the events panel from the pipeline toolbar and select the "Add New Event" option.

In the Create Kafka Event dialog box, enable the option at the top to enable mapping for this event.

Enter the Source Event Name in the Event name and click on the search icon to select the name of the source event from the suggestions.

The Event Duration and No. of Partitions will be automatically filled in the same as the source event. Users can modify the No. of Outputs between 1 to 10 for the mapped event.

Click on the Map Kafka Event option to create the Mapped Event.

Please Note: The data of the Mapped Event itself cannot be directly flushed. To clear the data of a Mapped Event, the user needs to flush the Source Event to which it is mapped.

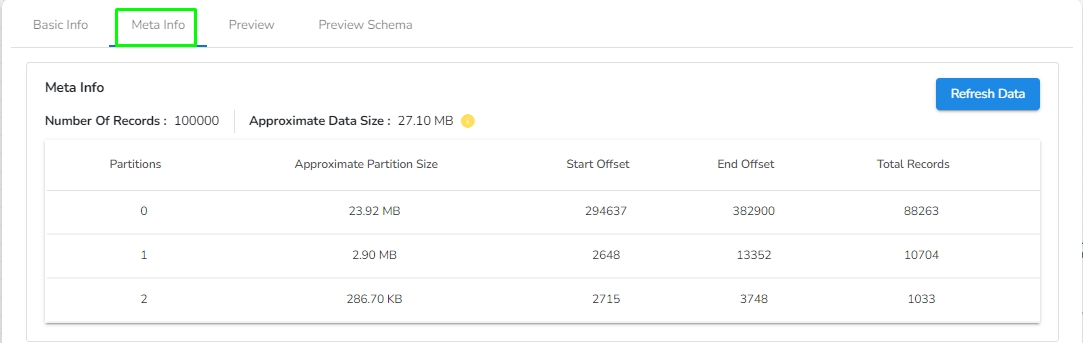

Please go through the below-given steps to check the meta information and download the data from the Kafka topic once the data has been sent to the Kafka event by the producer. The user can download the data from the Kafka event in CSV, Excel, and JSON format. The user will find the following tabs in the Kafka topic:

This tab displays information such as Display Name, Event Name, No. of Partitions, No. of Outputs, Event Duration, etc.

Navigate to the meta information tab to view details such as the Number Of Records in the Kafka topic, Approximate Data Size, Number of Partitions, Approximate Partition Size (in MB), Start and End Offset of partition, and Total Records in each partition.

Navigate to the Preview tab for a pipeline workflow using an Event component. Preferably select a pipeline workflow that has been activated to get data.

It will display the Data Preview.

Please Note:

Users can preview, download, and copy up to 100 data entries.

Click the Download icon to download the data in CSV, JSON, or Excel format.

Click on the Copy option to copy the data as a list of dictionaries.

Data Type icons are provided in each column header to indicate the data type. The icons help users quickly understand the contained data type in each column. The following icons are added:

Users can drag and drop column separators to adjust column widths, allowing for a personalized view of the data.

This feature helps accommodate various data lengths and user preferences.

The event data is displayed in a structured table format.

The table supports sorting and filtering to enhance data usability. The previewed data can be filtered based on the Latest, Beginning, and Timestamp options.

The Timestamp filter option redirects the user to select a timestamp from the Time Range window. The user can either select a start and end date or choose from the available time ranges to apply and get a data preview.

Check out the illustration on sorting and filtering the Event Data Preview for a Pipeline workflow.

This tab holds the Spark schema of the data. Users can download the Spark schema of the data by clicking on the download option.

Kafka Events can be flushed to delete all present records. Flushing an Event retains the offsets of the topic by setting the start-offset value to the end-offset. Events can be flushed by using the Flush Event button beside the respective Event in the Event panel, and all Events can be flushed at once by using the Flush All button. This button is also present at the top of the Event panel.

Check out the given illustration on how to Auto connect a Kafka Event to a pipeline component.

Drag any component to the pipeline workspace.

Configure all the parameters/fields of the dragged component.

Drag the Event from the Events Panel. Once the Kafka event is dragged from the Events panel, it will automatically connect with the nearest component in the pipeline workspace. Please find the below given walk through for the reference.

Click the Update Pipeline icon to save the pipeline workflow.

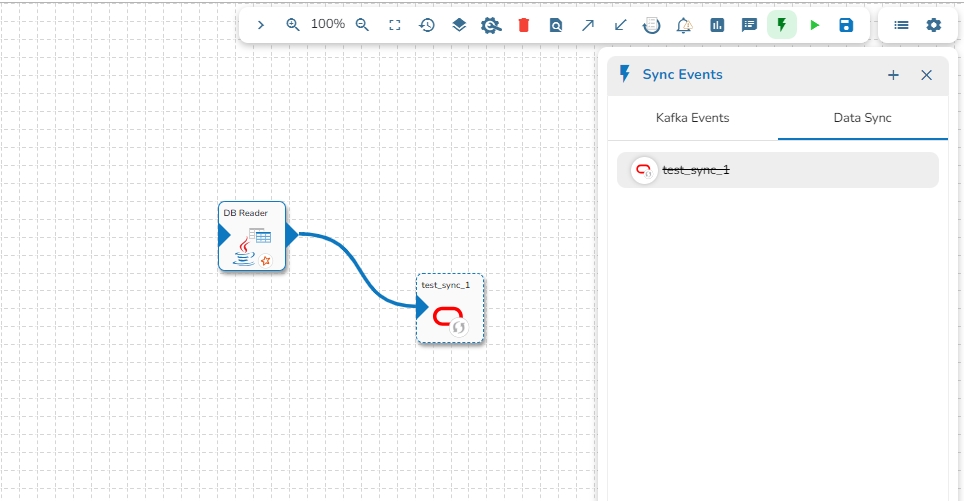

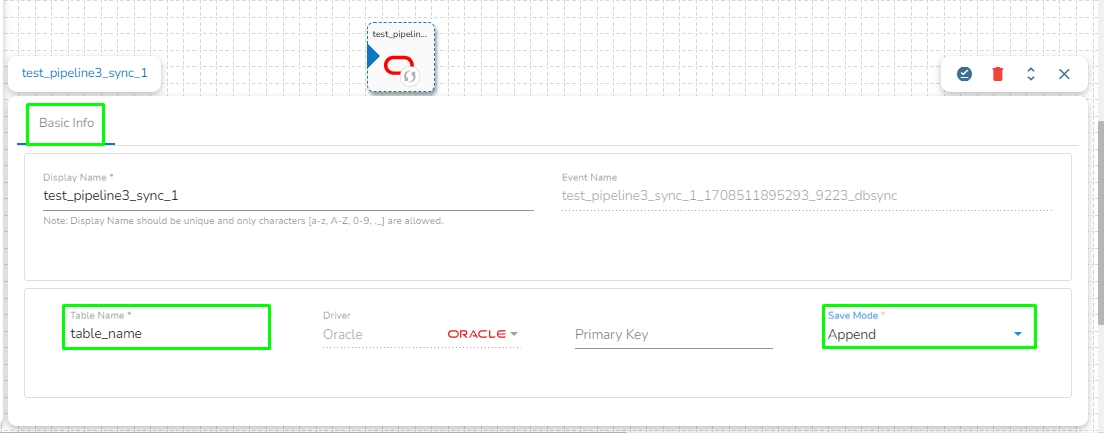

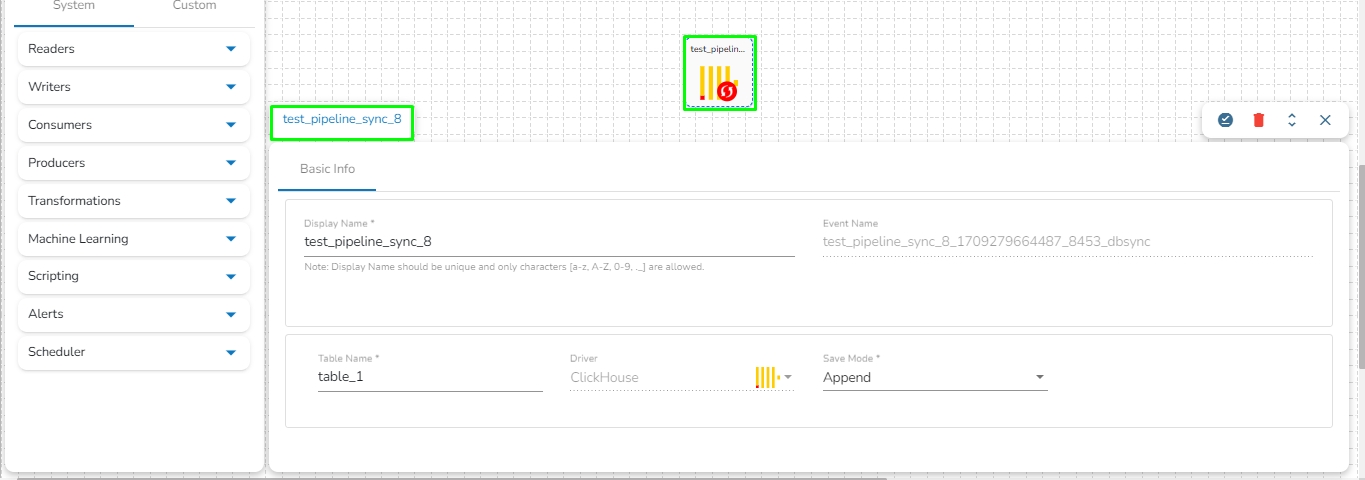

Data Sync Event in the Data Pipeline module is used to write the required data directly to the any of the databases without using the Kafka Event and writer components in the pipeline. Please refer the below image for reference:

It can be seen in the above image that Data Event will directly write the data read from the MongoDB reader component to the table of the configured Database in the Data Sync without using a Kafka Event in-between.

It doesn't need Kafka event to read the data. It can be connected with any component to read the data and it writes it to the tables of respective databases.

Pipeline complexity is reduced because Kafka event and writer is not needed to use in the pipeline.

Since, writers are not used, the resource consumption are low.

Once Data sync are configured, multiple Data Sync events can be created for the same configuration and the data can be written to multiple tables.

DB Sync Event enables direct write to the DB that helps in reducing the usage of additional compute resources like Writers in the Pipeline Workflow.

Please Note: The supported drivers for the Data Sync component are as listed below:

ClickHouse

MongoDB

MSSQL

MySQL

Oracle

PostgreSQL

Snowflake

Redshift

Check out the given video on how to create a Data Sync component and connect it with a Pipeline component.

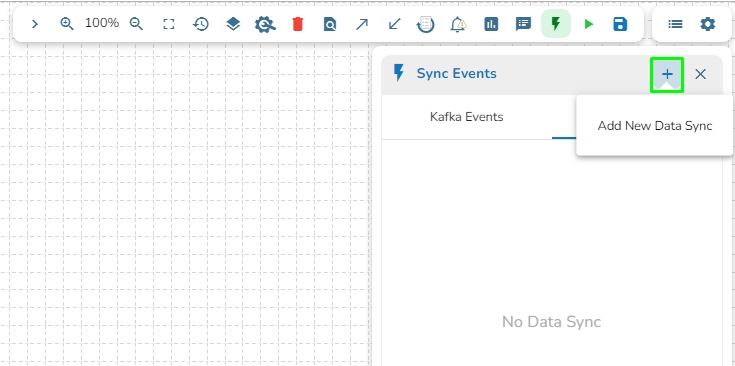

Navigate to the Pipeline Editor page.

Click on the DB Sync tab.

Click on the Add New Data Sync (+) icon from the Toggle Event Panel.

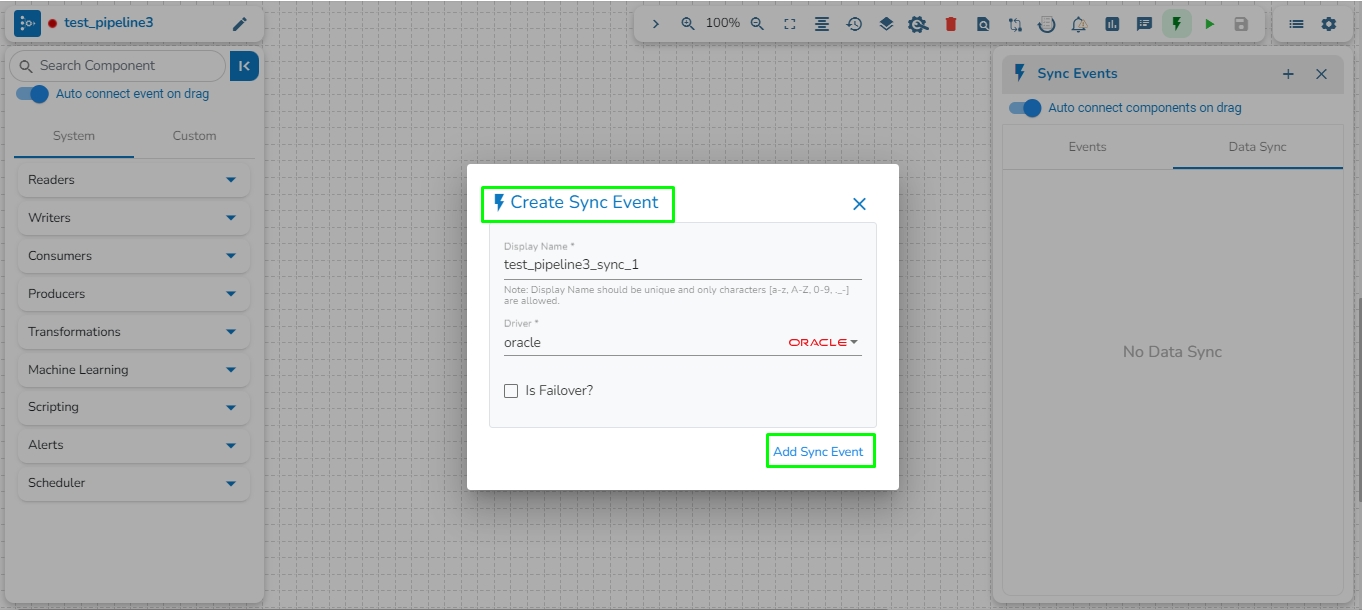

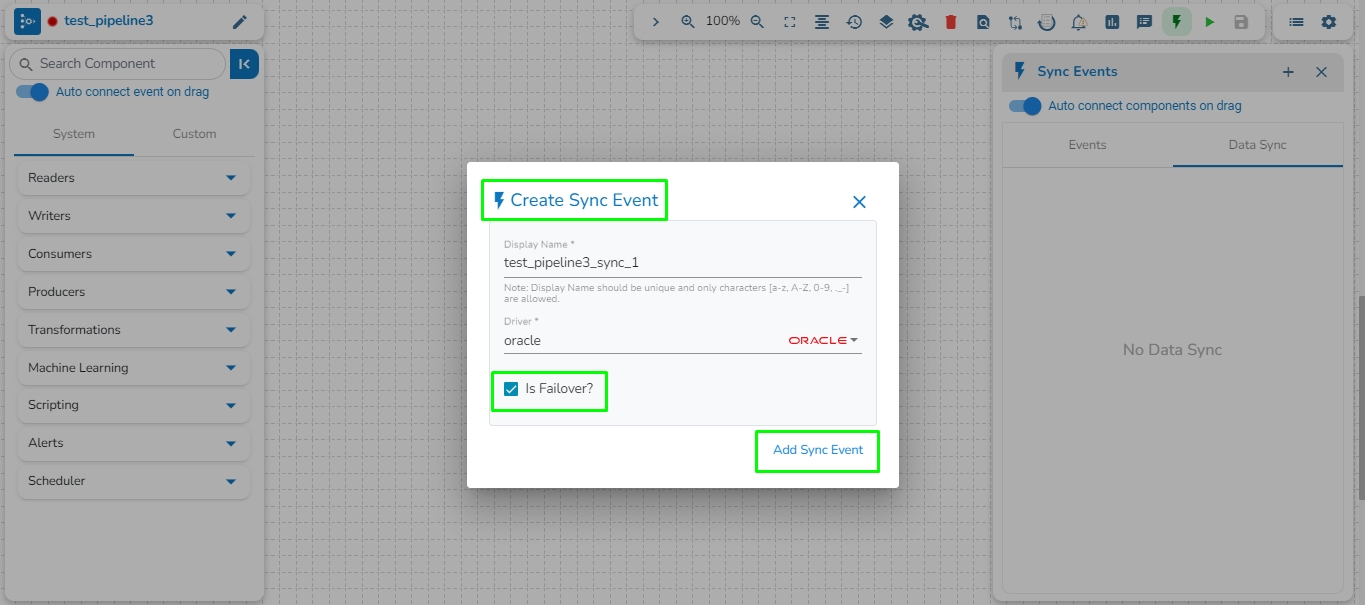

The Create Data Sync window opens.

Provide a display name for the new Data Sync.

Select the Driver

Put a checkmark in the Is Failover option to create a failover Data Sync. In this case, it is not enabled.

Click the Save option.

Please Note:

The Data Sync component gets created as Failover Data Sync, if the Is Failover option is enabled while creating a Data Sync.

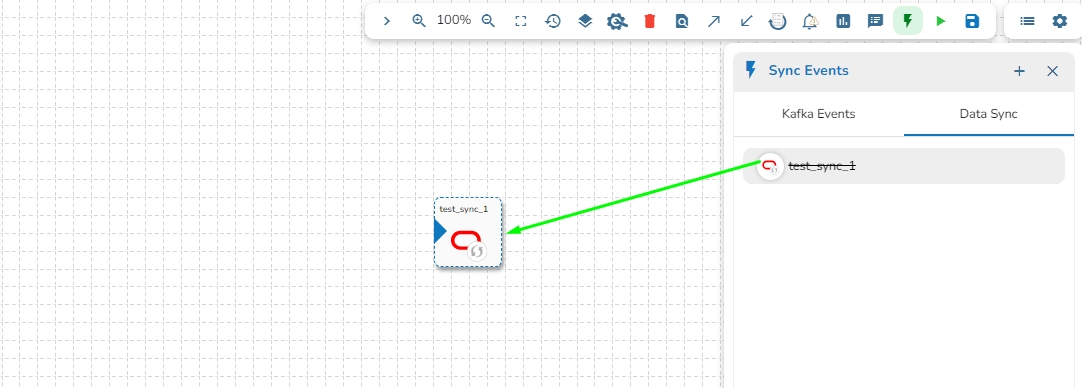

Drag and drop Data Sync Event to the workflow editor.

Click on the dragged Data Sync component.

The Basic Information tab appears with the following fields:

Display Name: Display name of the Data Sync

Event Name: Event name of the Data Sync

Table name: Specify table name.

Driver: This field will be pre-selected.

Save Mode: Select save mode from the drop-down: Append or Upsert.

Composite Key: This field is optional. This field will only appear when upsert is selected as the Save Mode.

Click on the Save Data Sync icon to save the Data Sync information.

Once the Data Sync Event is dragged from the Events panel, it will automatically connect with the nearest component in the pipeline workflow.

Update and activate the pipeline.

Open the Logs tab to view whether the data gets written to a specified table.

Please Note:

In the Save mode, there are two available options.

Append

Upsert: One extra field will be displayed for upsert save mode i.e. Composite Key.

When the SQL component is set to Aggregate Query mode and connected to Data Sync, the data resulting from the query will not be written to the Data Sync Event. Please refer to the following image for a visual representation of the flow and avoid using such scenario.

The Events Panel appears on the right side of the Pipeline Workflow Editor page.

Click on the Data Sync tab.

Click on the Add New Data Sync (+) icon from the Toggle Event Panel.

The Create Data Sync dialog box opens.

Provide the Display Name and

Enable the Is Failover option.

Click the Save option.

The failover Data Sync will be created successfully.

Drag & drop the failover data sync to the pipeline workflow editor.

Click on the failover Data Sync and fill in the following field:

Table name: Enter the table name where the failed data has to be written.

Primary key: Enter the column name to be made as the primary key in the table. This field is optional.

Save mode: Select a save mode from the given choices. Select either Append or Upsert.

The failover Data Sync Event gets configured, and now the user can map it with any component in the pipeline workflow.

The image displays that the failover Data Sync Event is mapped with the SQL Component.

If the component fails, it will write all the data to the given table of the configured DB in the Failover Data Sync.

Please Note: There is no information available on UI that the failover Data Sync Event has been configured while hovering on the failover Data Sync Event.

Elasticsearch is an open-source search and analytics engine built on top of the Apache Lucene library. It is designed to help users store, search, and analyze large volumes of data in real-time. Elasticsearch is a distributed, scalable system that can be used to index and search structured, semi-structured, and unstructured data.

This task is used to read the data located in Elastic Search engine.

Drag the ES reader task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Host IP Address: Enter the host IP Address for Elastic Search.

Port: Enter the port to connect with Elastic Search.

Index ID: Enter the Index ID to read a document in elastic search. In Elasticsearch, an index is a collection of documents that share similar characteristics, and each document within an index has a unique identifier known as the index ID. The index ID is a unique string that is automatically generated by Elasticsearch and is used to identify and retrieve a specific document from the index.

Resource Type: Provide the resource type. In Elasticsearch, a resource type is a way to group related documents together within an index. Resource types are defined at the time of index creation, and they provide a way to logically separate different types of documents that may be stored within the same index.

Is Date Rich True: Enable this option if any fields in the reading file contain date or time information. The "date rich" feature in Elasticsearch allows for advanced querying and filtering of documents based on date or time ranges, as well as date arithmetic operations.

Username: Enter the username for elastic search.

Password: Enter the password for elastic search.

Query: Provide a spark SQL query.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged reader task.

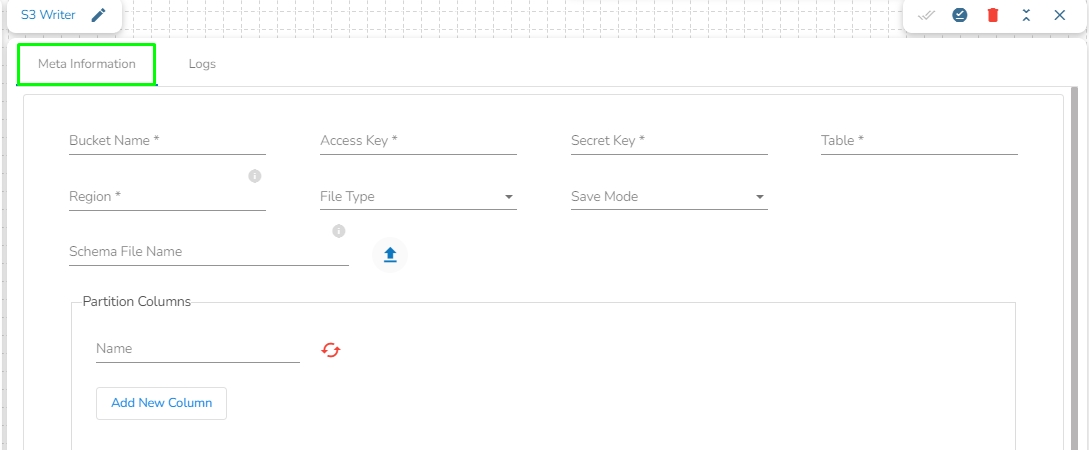

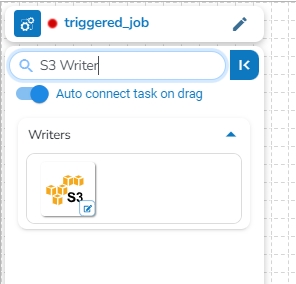

This task is used to write the data in Amazon S3 bucket.

Drag the S3 writer task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Bucket Name (*): Enter S3 Bucket name.

Region (*): Provide S3 region.

Access Key (*): Access key shared by AWS to login

Secret Key (*): Secret key shared by AWS to login

Table (*): Mention the Table or object name which is to be read

File Type (*): Select a file type from the drop-down menu (CSV, JSON, PARQUET, AVRO are the supported file types).

Save Mode: Select the Save mode from the drop down.

Append

Schema File Name: Upload spark schema file in JSON format.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged writer task.

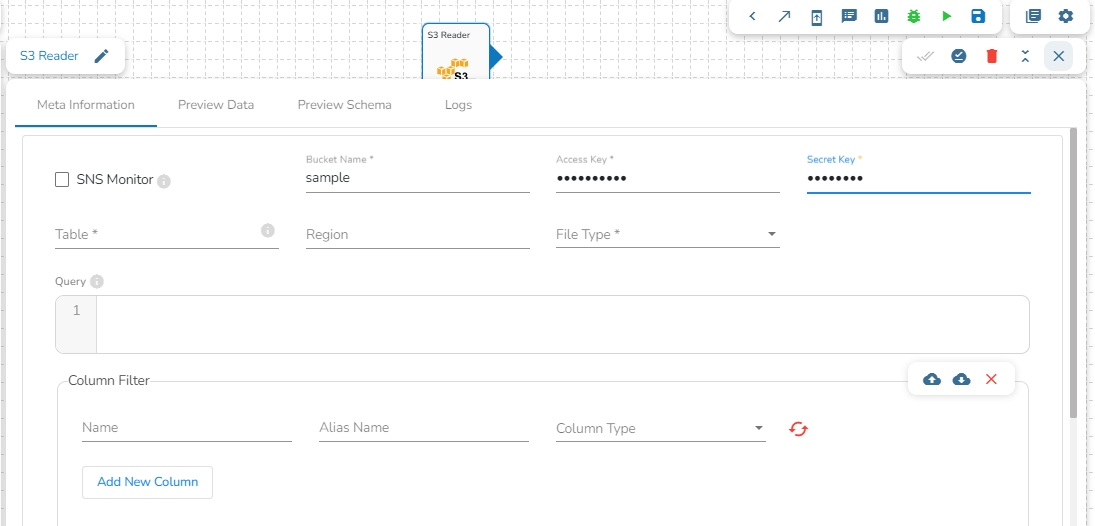

This task reads the file from Amazon S3 bucket.

Please follow the below mentioned steps to configure meta information of S3 reader task:

Drag the S3 reader task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Bucket Name (*): Enter S3 bucket name.

Region (*): Provide the S3 region.

Access Key (*): Access key shared by AWS to login..

Secret Key (*): Secret key shared by AWS to login

Table (*): Mention the Table or object name which is to be read

File Type (*): Select a file type from the drop-down menu (CSV, JSON, PARQUET, AVRO, XML are the supported file types)

Limit: Set a limit for the number of records.

Query: Insert an SQL query (it supports query containing a join statement as well).

Access Key (*): Access key shared by AWS to login

Secret Key (*): Secret key shared by AWS to login

Table (*): Mention the Table or object name which has to be read

File Type (*): Select a file type from the drop-down menu (CSV, JSON, PARQUET, AVRO, XML are the supported file types)

Limit: Set limit for the number of records

Query: Insert an SQL query (it supports query containing a join statement as well)

Provide a unique Key column name to partition data in Spark.

Please Note:

Please click the Save Task In Storage icon to save the configuration for the dragged reader task.

Once file type is selected the multiple fields will appear. Follow the below steps for the selected different file types.

CSV: The Header and Infer Schema fields get displayed with CSV as the selected File Type. Enable Header option to get the Header of the reading file and enable Infer Schema option to get true schema of the column in the CSV file.

JSON: The Multiline and Charset fields get displayed with JSON as the selected File Type. Check-in the Multiline option if there is any multiline string in the file.

PARQUET: No extra field gets displayed with PARQUET as the selected File Type.

AVRO: This File Type provides two drop-down menus.

Compression: Select an option out of the Deflate and Snappy options.

Compression Level: This field appears for the Deflate compression option. It provides 0 to 9 levels via a drop-down menu.

XML: Select this option to read XML file. If this option is selected, the following fields will get displayed:

Infer schema: Enable this option to get true schema of the column.

Path: Provide the path of the file.

Root Tag: Provide the root tag from the XML files.

Row Tags: Provide the row tags from the XML files.

Join Row Tags: Enable this option to join multiple row tags.

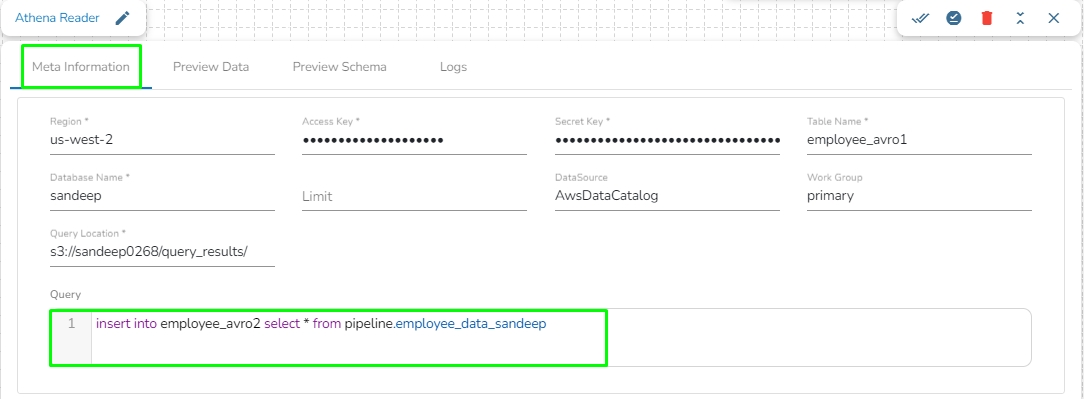

Amazon Athena is an interactive query service that easily analyzes data directly in Amazon Simple Storage Service (Amazon S3) using standard SQL. With a few actions in the AWS Management Console, you can point Athena at your data stored in Amazon S3 and begin using standard SQL to run ad-hoc queries and get results in seconds.

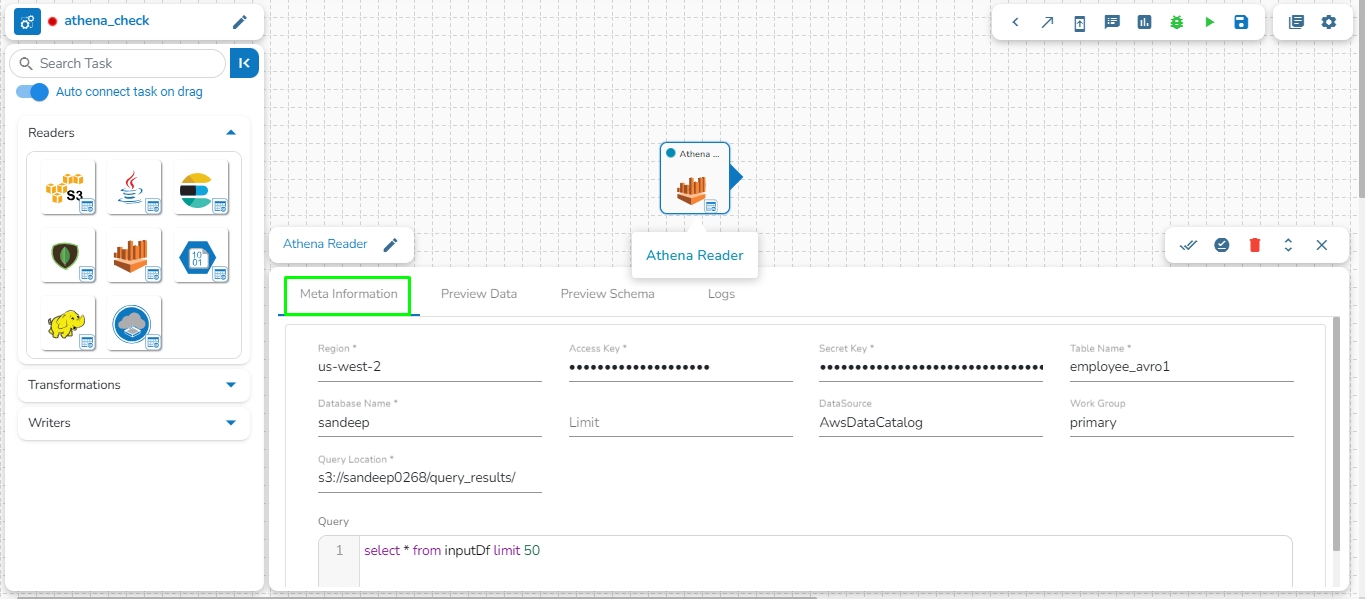

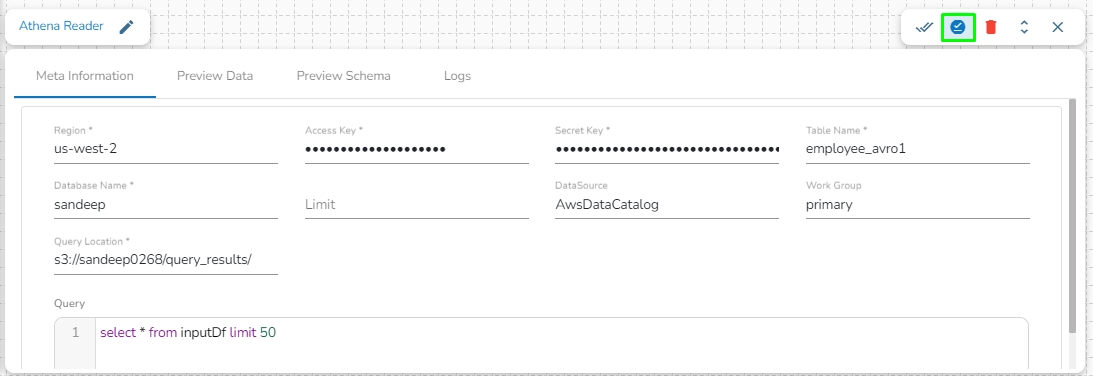

Athena Query Executer task enables users to read data directly from the external table created in AWS Athena.

Please Note: Please go through the below given demonstration to configure Athena Query Executer in Jobs.

Region: Enter the region name where the bucket is located.

Access Key: Enter the AWS Access Key of the account that must be used.

Secret Key: Enter the AWS Secret Key of the account that must be used.

Table Name: Enter the name of the external table created in Athena.

Database Name: Name of the database in Athena in which the table has been created.

Limit: Enter the number of records to be read from the table.

Data Source: Enter the Data Source name configured in Athena. Data Source in Athena refers to your data's location, typically an S3 bucket.

Workgroup: Enter the Workgroup name configured in Athena. The Workgroup in Athena is a resource type to separate query execution and query history between Users, Teams, or Applications running under the same AWS account.

Query location: Enter the path where the results of the queries done in the Athena query editor are saved in the CSV format. Users can find this path under the Settings tab in the Athena query editor as Query Result Location.

Query: Enter the Spark SQL query.

Sample Spark SQL query that can be used in Athena Reader:

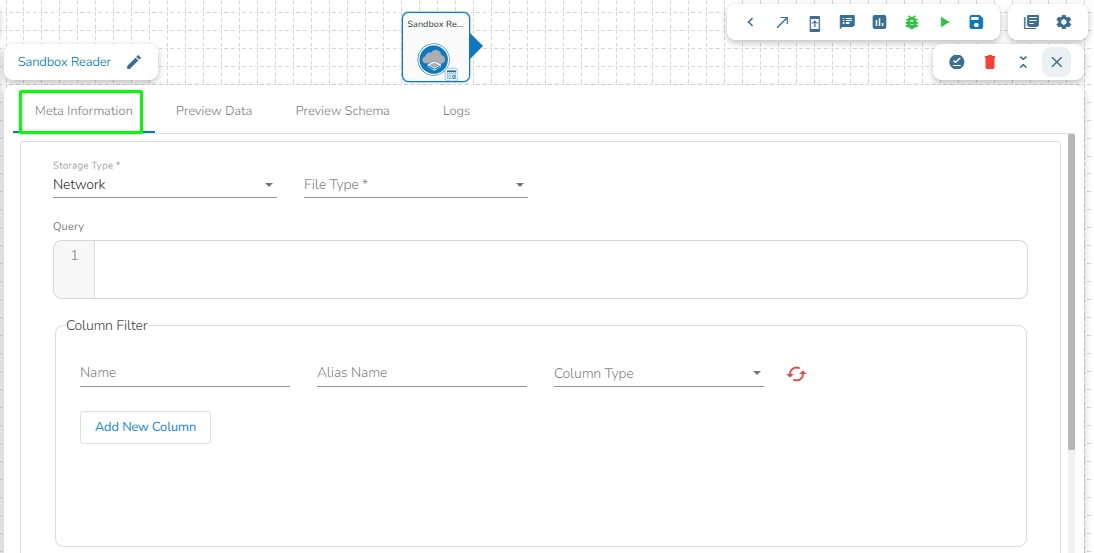

This task can read the data from the Network pool of Sandbox.

Drag the Sandbox reader task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Storage Type: This field is pre-defined.

Sandbox File: Select the file name from the drop-down.

File Type: Select the file type from the drop down.

There are four(5) types of file extensions are available under it:

CSV: The Header and Infer Schema fields get displayed with CSV as the selected File Type. Enable Header option to get the Header of the reading file and enable Infer Schema option to get true schema of the column in the CSV file.

JSON: The Multiline and Charset fields get displayed with JSON as the selected File Type. Check-in the Multiline option if there is any multiline string in the file.

PARQUET: No extra field gets displayed with PARQUET as the selected File Type.

AVRO: This File Type provides two drop-down menus.

Compression: Select an option out of the Deflate and Snappy options.

Compression Level: This field appears for the Deflate compression option. It provides 0 to 9 levels via a drop-down menu.

XML: Select this option to read XML file. If this option is selected, the following fields will get displayed:

Query: Provide Spark SQL query in this field.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged reader task.

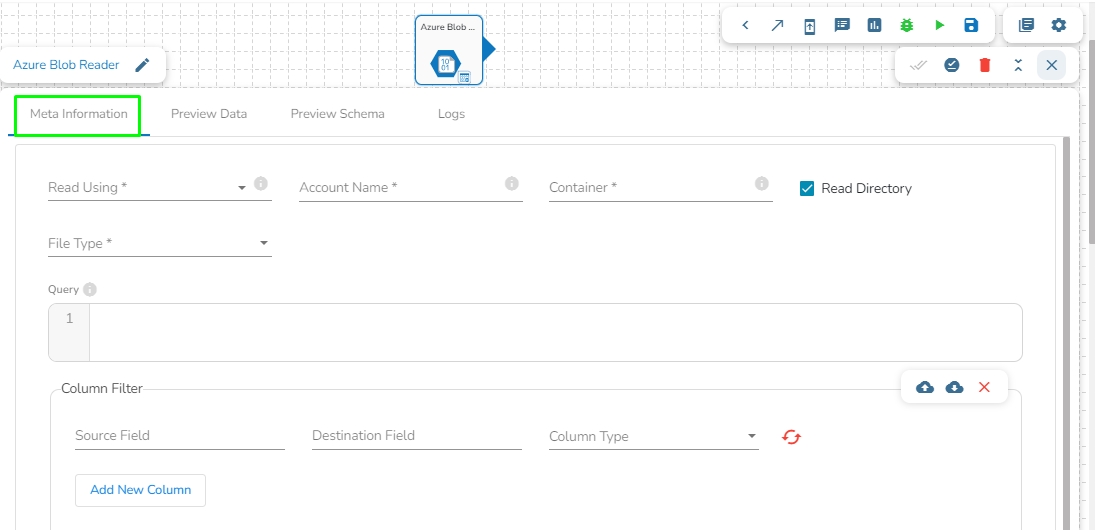

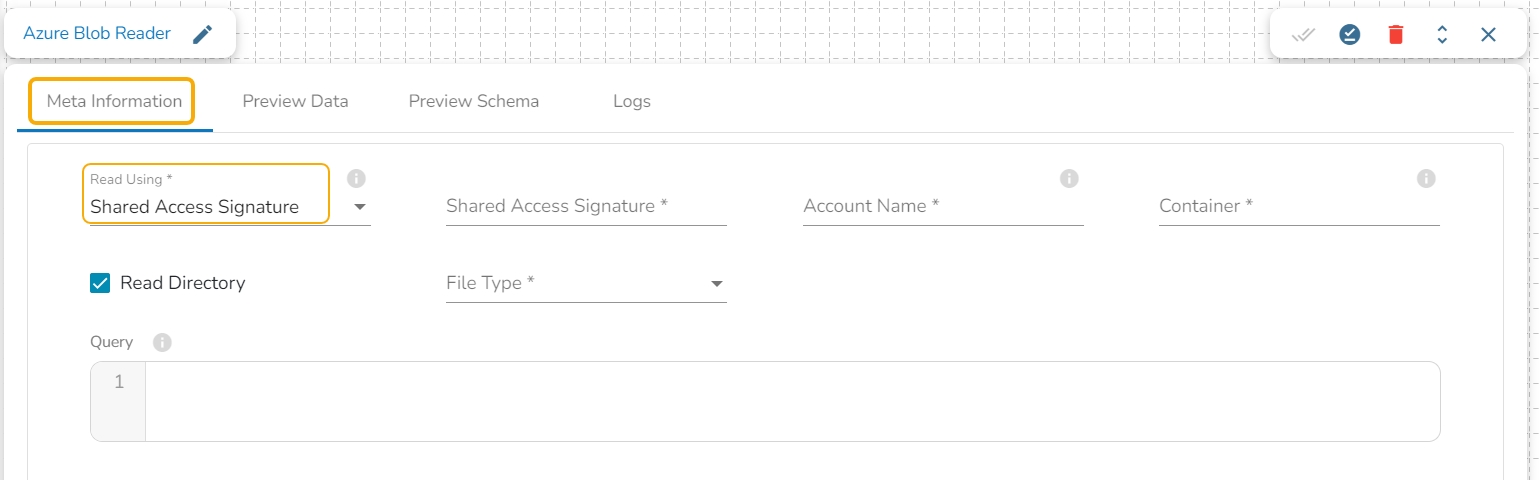

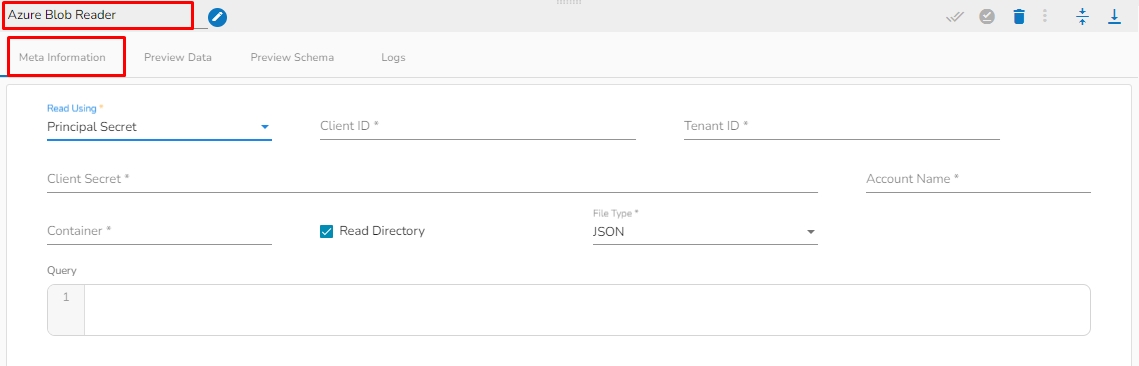

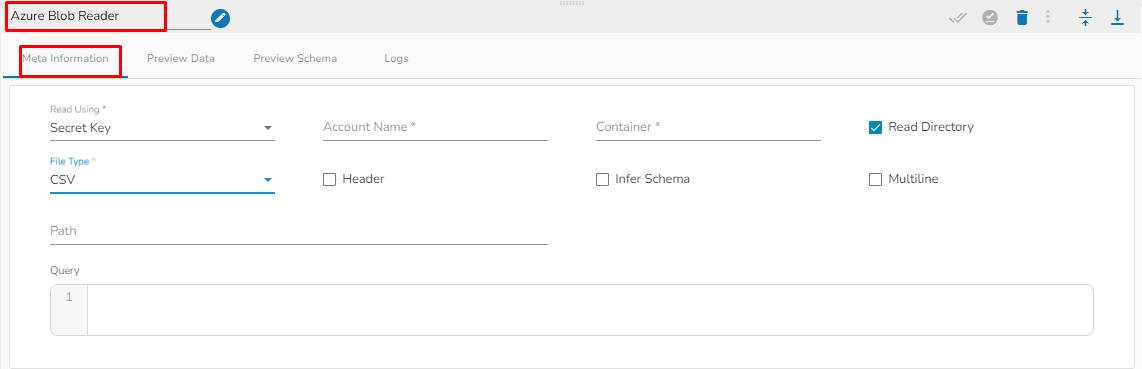

This task is used to read data from Azure blob container.

Drag the Azure Blob reader task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Read using: There are three(3) options available under this tab:

Provide the following details:

Shared Access Signature: This is a URI that grants restricted access rights to Azure Storage resources.

Account Name: Provide the Azure account name.

Container: Provide the container name from where the file is located and which has to be read.

File type: There are four(5) types of file extensions are available under it:

CSV: The Header and Infer Schema fields get displayed with CSV as the selected File Type. Enable Header option to get the Header of the reading file and enable Infer Schema option to get true schema of the column in the CSV file.

JSON: The Multiline and Charset fields get displayed with JSON as the selected File Type. Check-in the Multiline option if there is any multiline string in the file.

PARQUET: No extra field gets displayed with PARQUET as the selected File Type.

AVRO: This File Type provides two drop-down menus.

Compression: Select an option out of the Deflate and Snappy options.

Compression Level: This field appears for the Deflate compression option. It provides 0 to 9 levels via a drop-down menu.

XML: Select this option to read XML file. If this option is selected, the following fields will get displayed:

Infer schema: Enable this option to get true schema of the column.

Path: Provide the path of the file.

Root Tag: Provide the root tag from the XML files.

Row Tags: Provide the row tags from the XML files.

Join Row Tags: Enable this option to join multiple row tags.

Path: This option will appear once the file type is selected. Enter the path where the selected file type is located.

Read Directory: Check in this box to read the specified directory.

Query: Provide Spark SQL query in this field.

Provide the following details:

Account Key: Enter the Azure account key. In Azure, an account key is a security credential that is used to authenticate access to storage resources, such as blobs, files, queues, or tables, in an Azure storage account.

Account Name: Provide the Azure account name.

Container: Provide the container name from where the blob is located. A container is a logical unit of storage in Azure Blob Storage that can hold blobs. It is similar to a directory or folder in a file system, and it can be used to organize and manage blobs.

File type: There are four(5) types of file extensions are available under it:

CSV: The Header and Infer Schema fields get displayed with CSV as the selected File Type. Enable Header option to get the Header of the reading file and enable Infer Schema option to get true schema of the column in the CSV file.

JSON: The Multiline and Charset fields get displayed with JSON as the selected File Type. Check-in the Multiline option if there is any multiline string in the file.

PARQUET: No extra field gets displayed with PARQUET as the selected File Type.

AVRO: This File Type provides two drop-down menus.

Compression: Select an option out of the Deflate and Snappy options.

Compression Level: This field appears for the Deflate compression option. It provides 0 to 9 levels via a drop-down menu.

XML: Select this option to read XML file. If this option is selected, the following fields will get displayed:

Infer schema: Enable this option to get true schema of the column.

Path: Provide the path of the file.

Root Tag: Provide the root tag from the XML files.

Row Tags: Provide the row tags from the XML files.

Join Row Tags: Enable this option to join multiple row tags.

Path: This option will appear once the file type is selected. Enter the path where the selected file type is located.

Read Directory: Check in this box to read the specified directory.

Query: Provide Spark SQL query in this field.

Provide the following details:

Client ID: Provide Azure Client ID. The client ID is the unique Application (client) ID assigned to your app by Azure AD when the app was registered.

Tenant ID: Provide the Azure Tenant ID. Tenant ID (also known as Directory ID) is a unique identifier that is assigned to an Azure AD tenant, which represents an organization or a developer account. It is used to identify the organization or developer account that the application is associated with.

Client Secret: Enter the Azure Client Secret. Client Secret (also known as Application Secret or App Secret) is a secure password or key that is used to authenticate an application to Azure AD.

Account Name: Provide the Azure account name.

Container: Provide the container name from where the blob is located. A container is a logical unit of storage in Azure Blob Storage that can hold blobs. It is similar to a directory or folder in a file system, and it can be used to organize and manage blobs.

Query: Provide Spark SQL query in this field.

File type: There are four(5) types of file extensions are available under it:

CSV: The Header and Infer Schema fields get displayed with CSV as the selected File Type. Enable Header option to get the Header of the reading file and enable Infer Schema option to get true schema of the column in the CSV file.

JSON: The Multiline and Charset fields get displayed with JSON as the selected File Type. Check-in the Multiline option if there is any multiline string in the file.

PARQUET: No extra field gets displayed with PARQUET as the selected File Type.

AVRO: This File Type provides two drop-down menus.

Compression: Select an option out of the Deflate and Snappy options.

Compression Level: This field appears for the Deflate compression option. It provides 0 to 9 levels via a drop-down menu.

XML: Select this option to read XML file. If this option is selected, the following fields will get displayed:

Infer schema: Enable this option to get true schema of the column.

Path: Provide the path of the file.

Root Tag: Provide the root tag from the XML files.

Row Tags: Provide the row tags from the XML files.

Join Row Tags: Enable this option to join multiple row tags.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged reader task.

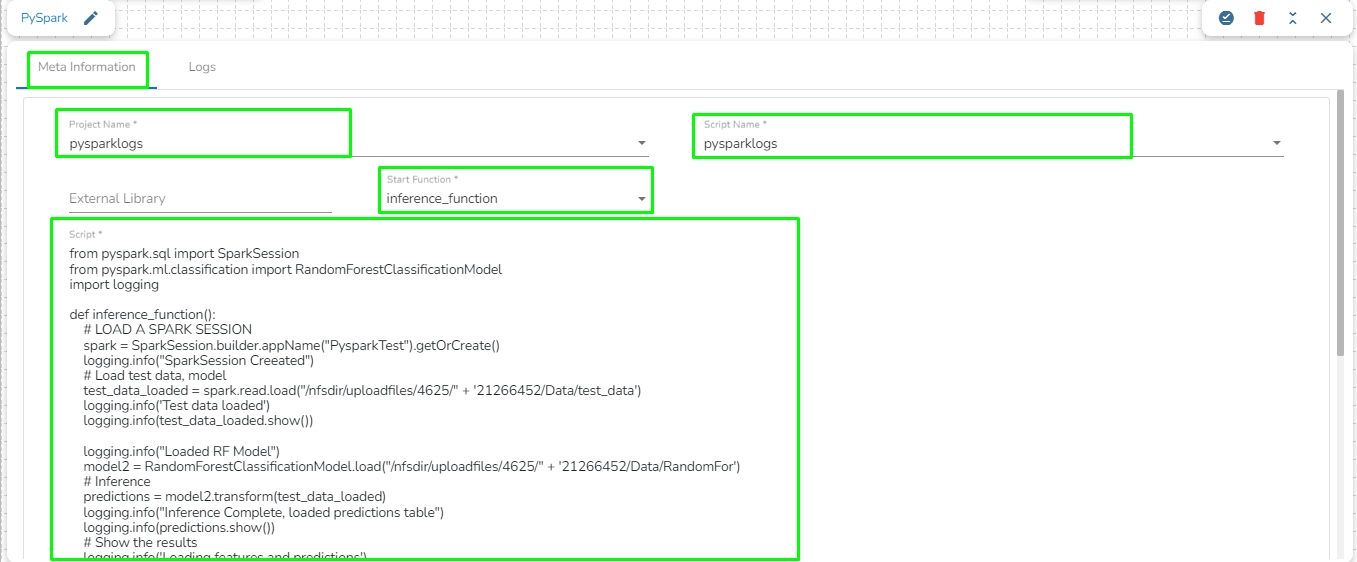

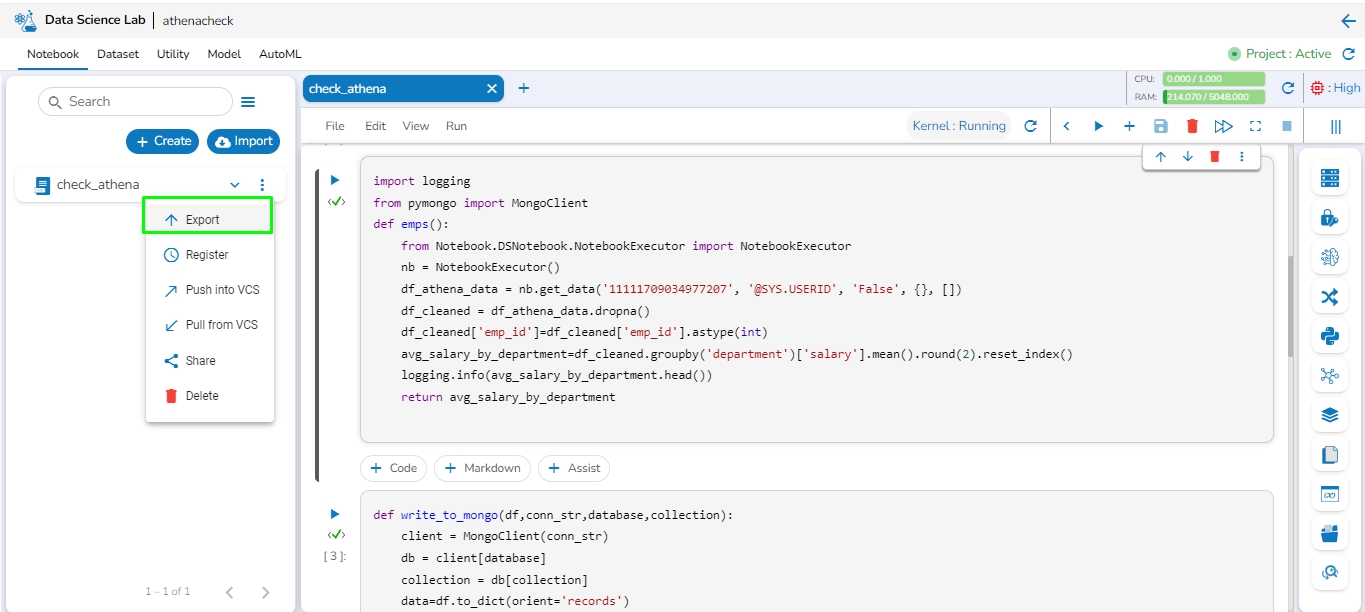

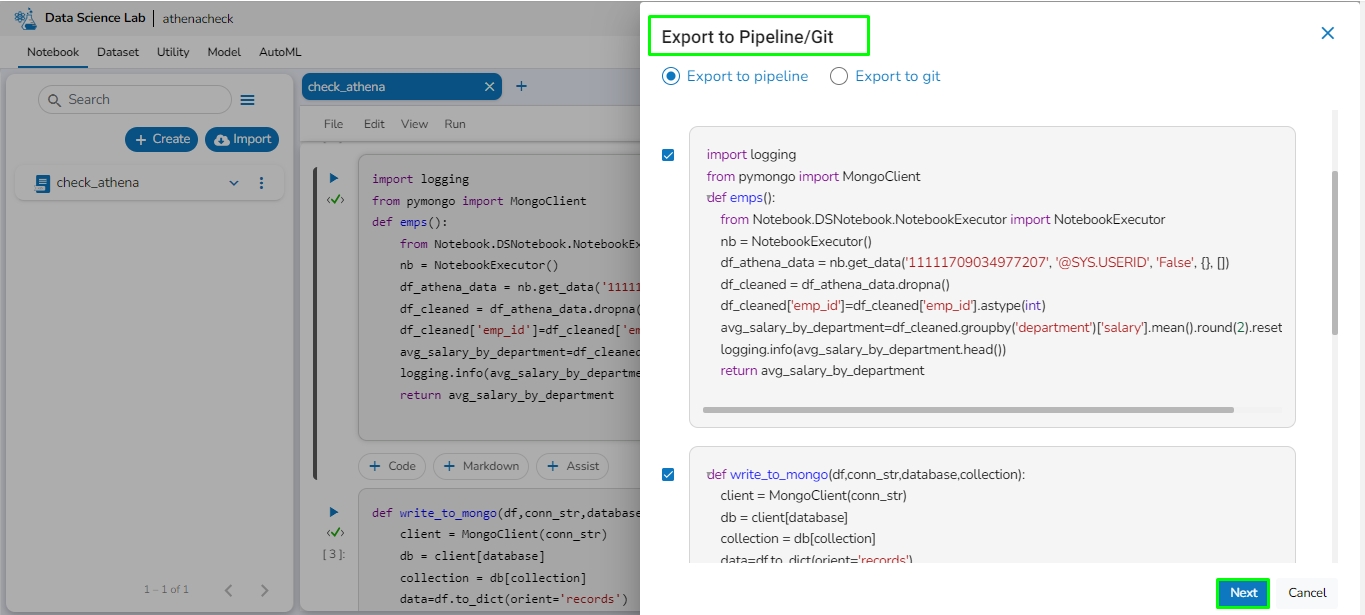

Write PySpark scripts and run them flawlessly in the Jobs.

This feature allows users to write their own PySpark script and run their script in the Jobs section of Data Pipeline module.

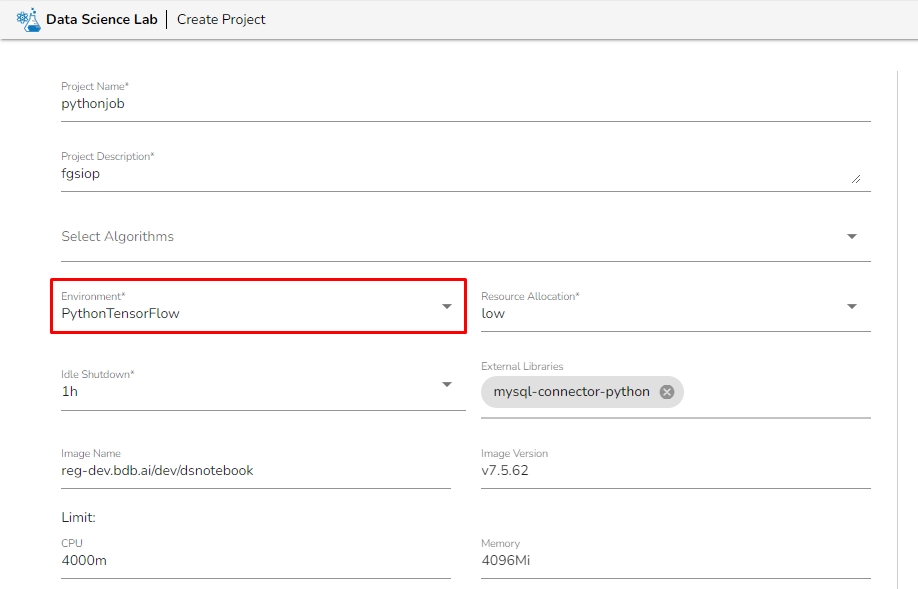

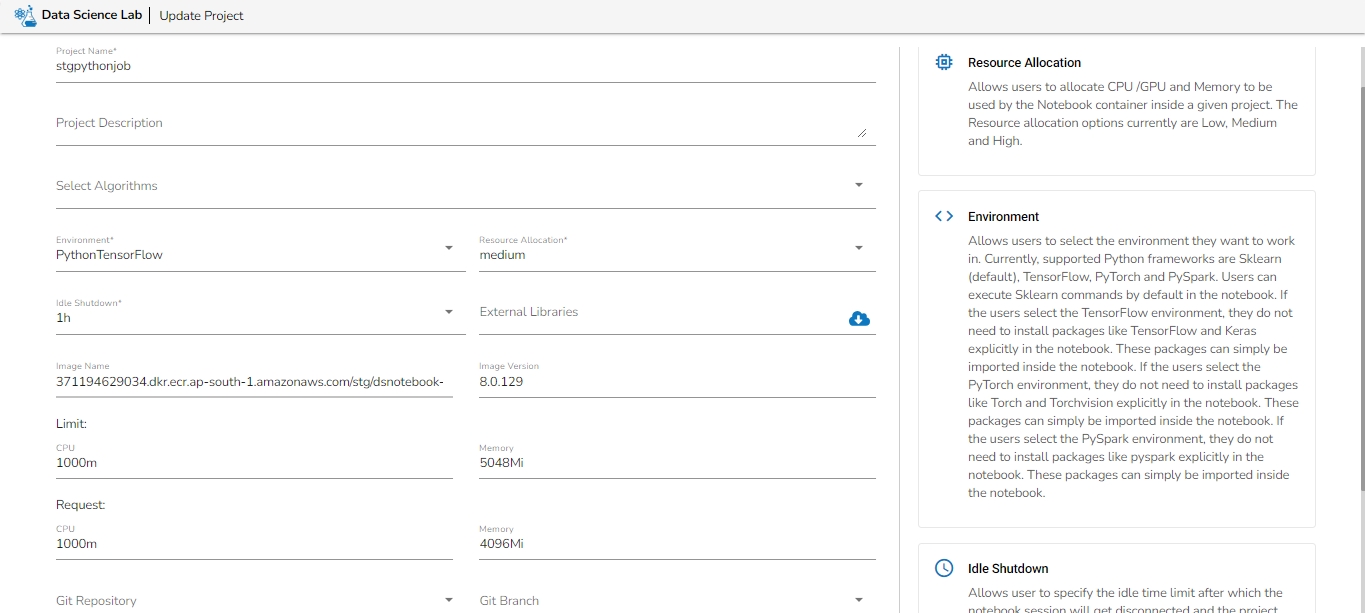

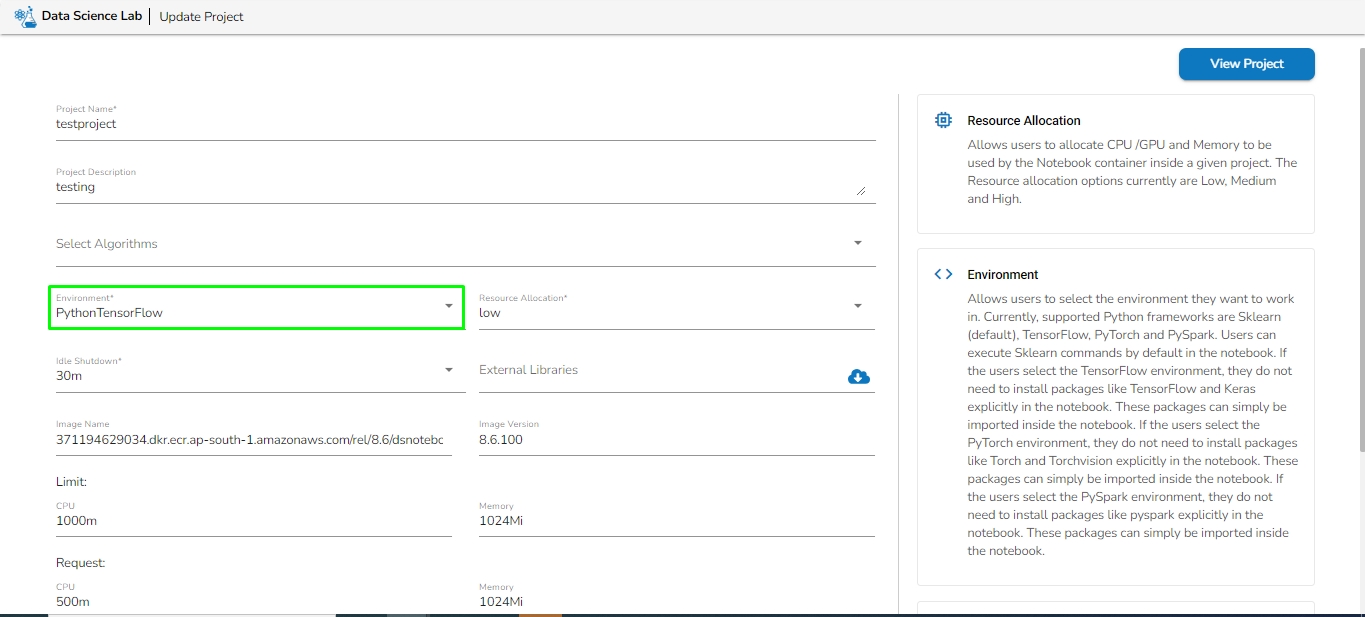

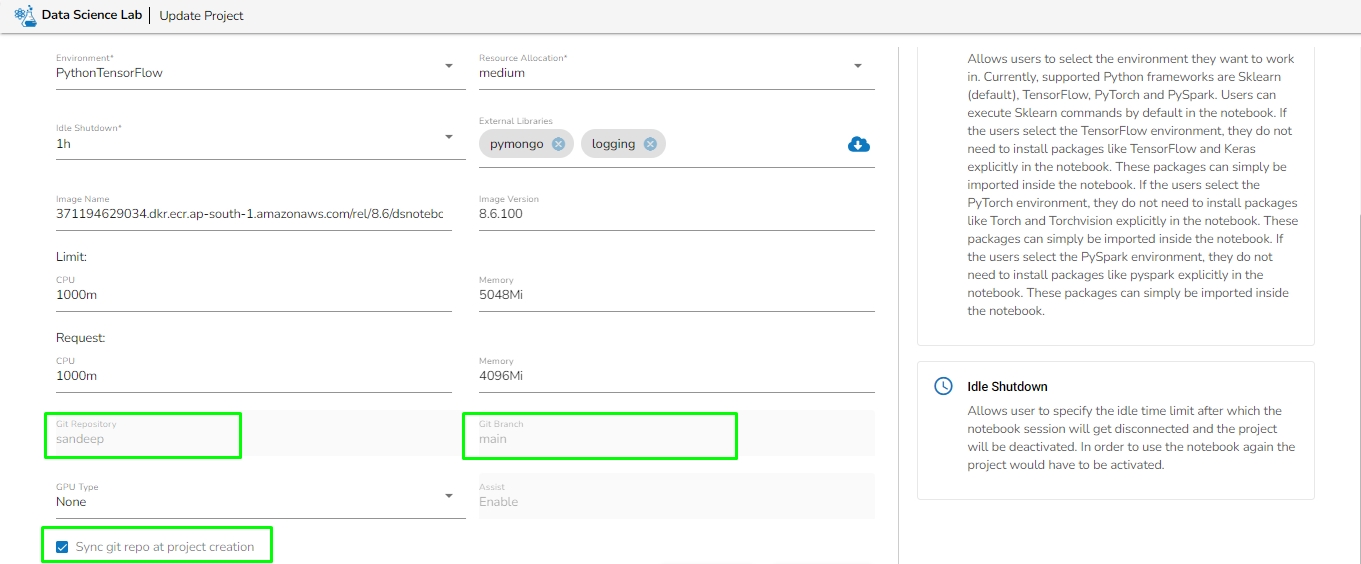

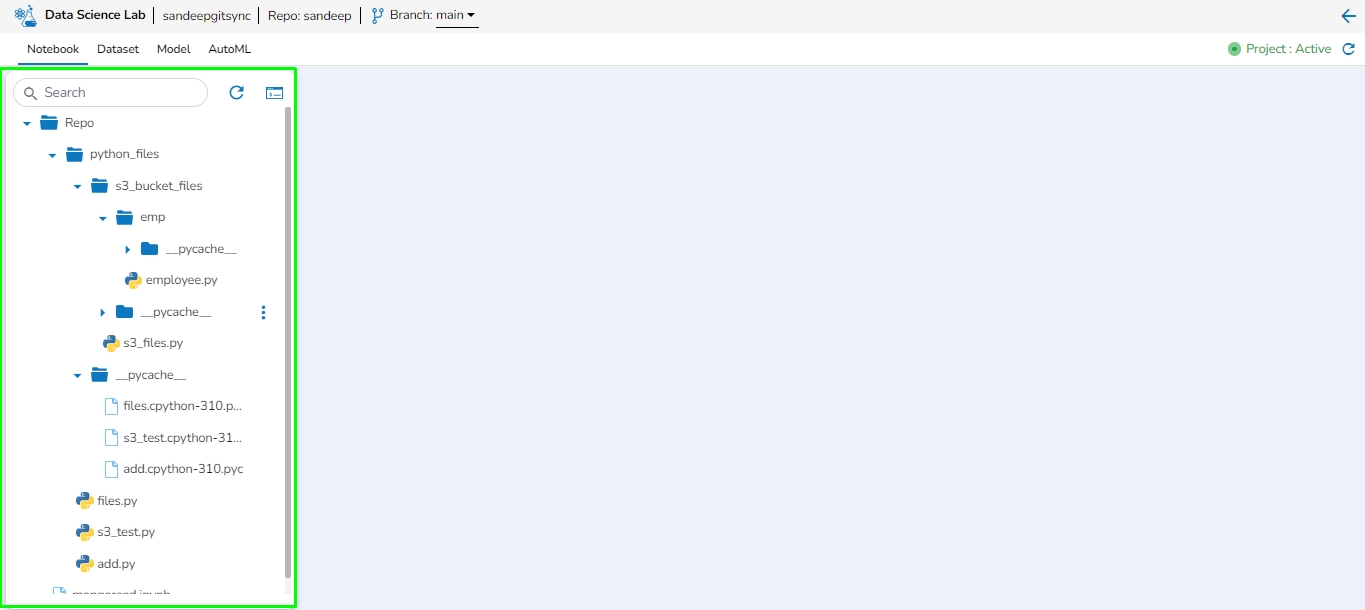

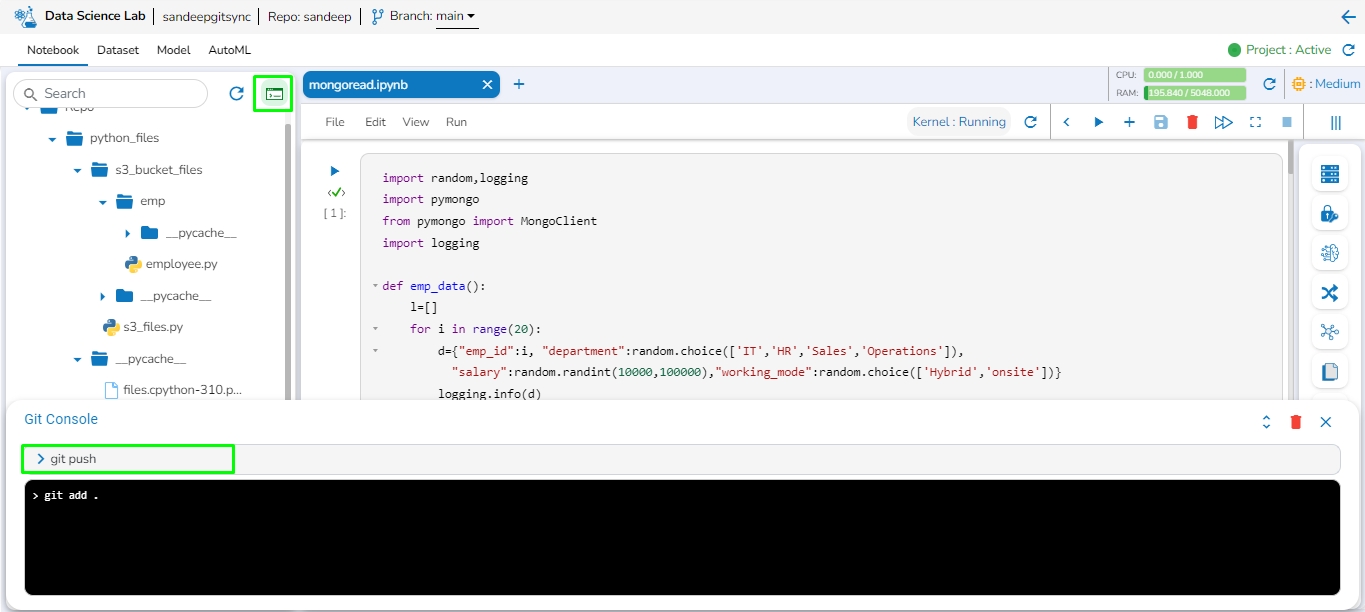

Before creating the PySpark Job, the user has to create a project in the Data Science Lab module under PySpark Environment. Please refer the below image for reference:

Please go through the below given demonstration to create and configure a PySpark Job.

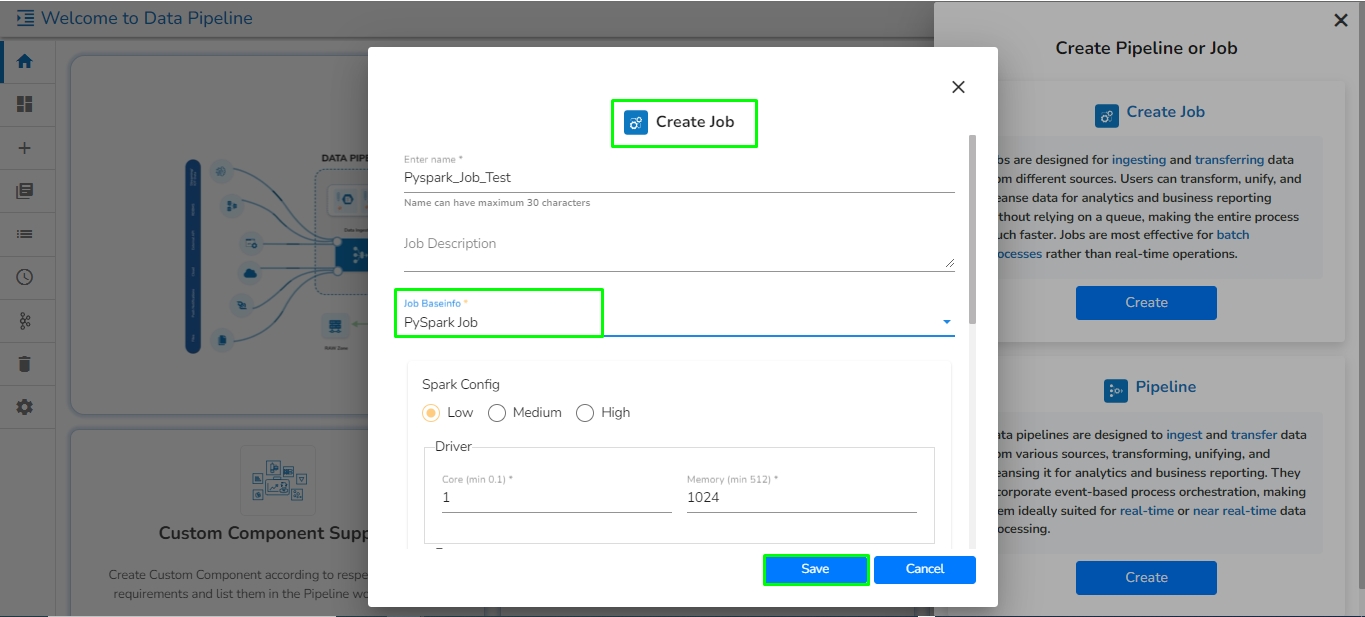

Open the pipeline homepage and click on the Create option.

The new panel opens from right hand side. Click on Create button in Job option.

Provide the following information:

Enter name: Enter the name for the job.

Job Description: Add description of the Job (It is an optional field).

Job Baseinfo: Select PySpark Job option from the drop in Job Base Information.

Trigger By: The PySpark Job can be triggered by another Job or PySpark Job. The PySpark Job can be triggered in two scenarios from another jobs:

On Success: Select a job from drop-down. Once the selected job is run successfully, it will trigger the PySpark Job.

On Failure: Select a job from drop-down. Once the selected job gets failed, it will trigger the PySpark Job.

Is Schedule: Put a checkmark in the given box to schedule the new Job.

Spark config: Select resource for the job.

Click on Save option to save the Job.

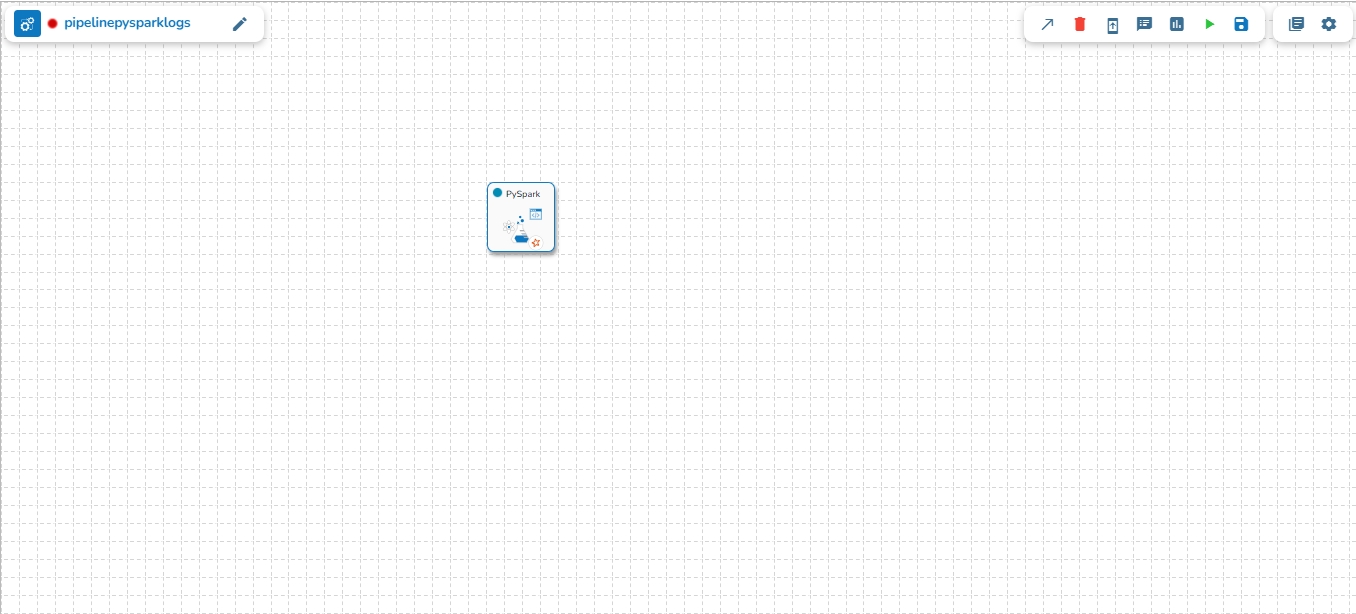

The PySpark Job gets saved and it will redirect the user to the Job workspace.

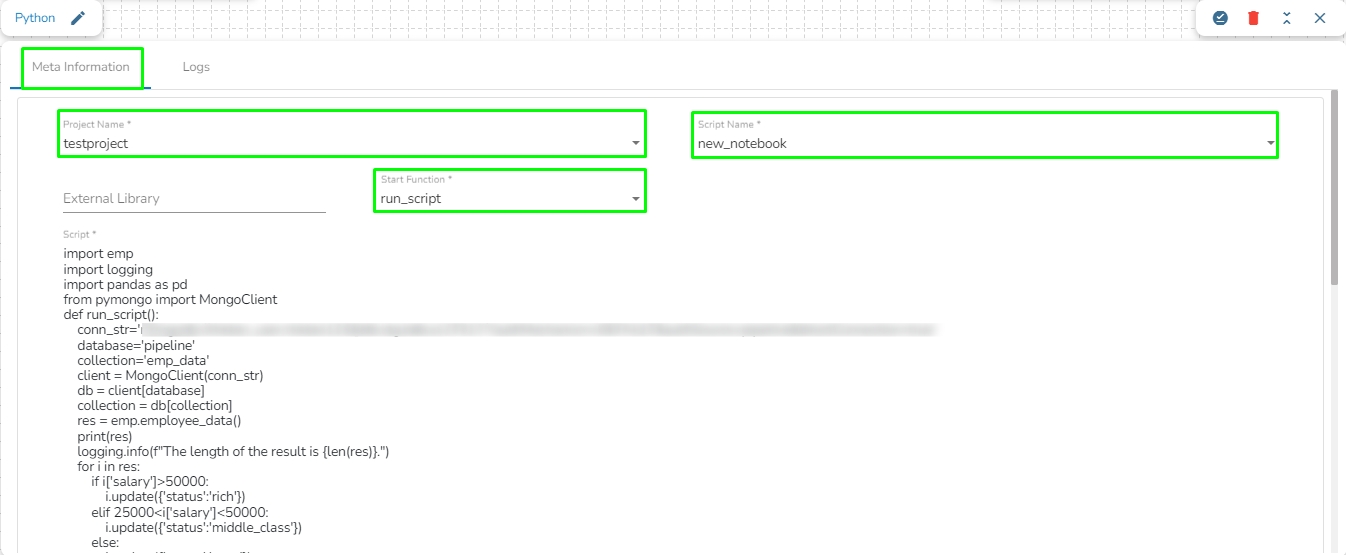

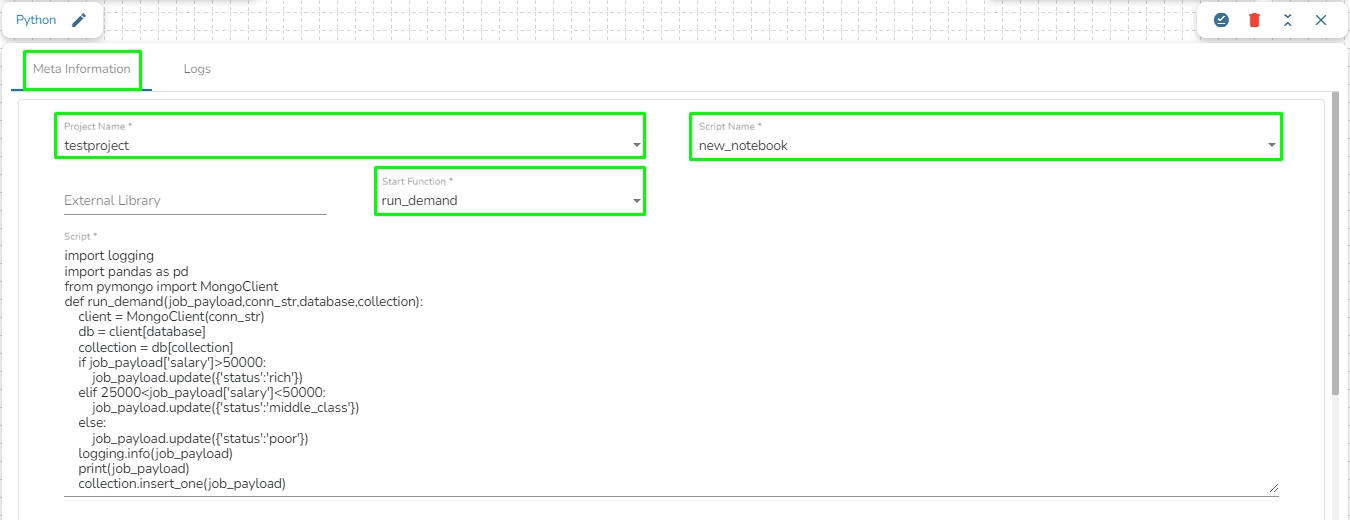

Once the PySpark Job is created, follow the below given steps to configure the Meta Information tab of the PySpark Job.

Project Name: Select the same Project using the drop-down menu where the concerned Notebook has been created.

Script Name: This field will list the exported Notebook names which are exported from the Data Science Lab module to Data Pipeline.

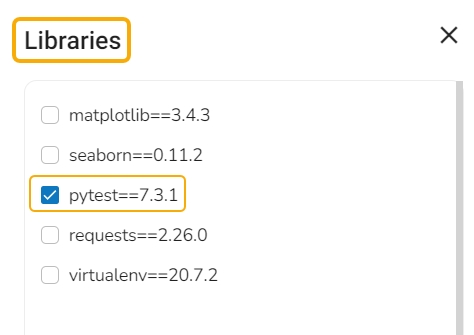

External Library: If any external libraries are used in the script the user can mention it here. The user can mention multiple libraries by giving comma(,) in between the names.

Start Function: Here, all the function names used in the script will be listed. Select the start function name to execute the python script.

Script: The Exported script appears under this space.

Input Data: If any parameter has been given in the function, then the name of the parameter is provided as Key and value of the parameters has to be provided as value in this field.

Please note: We are currently supporting following JDBC connector:

MySQL

MSSQL

Oracle

MongoDB

PostgreSQL

ClickHouse

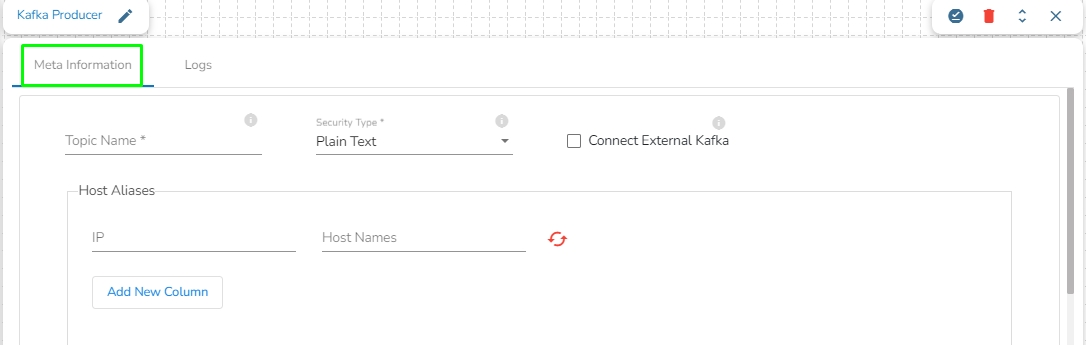

In Apache Kafka, a "producer" is a client application or program that is responsible for publishing (or writing) messages to a Kafka topic.

A Kafka producer sends messages to Kafka brokers, which are then distributed to the appropriate consumers based on the topic, partitioning, and other configurable parameters.

Drag the Kafka Producer task to the Workspace and click on it to open the related configuration tabs for the same. The Meta Information tab opens by default.

Topic Name: Specify topic name where user want to produce data.

Security Type: Select the security type from drop down:

Plain Text

SSL

Is External: User can produce the data to external Kafka topic by enabling 'Is External' option. ‘Bootstrap Server’ and ‘Config’ fields will display after enable 'Is External' option.

Bootstrap Server: Enter external bootstrap details.

Config: Enter configuration details.

Host Aliases: In Apache Kafka, a host alias (also known as a hostname alias) is an alternative name that can be used to refer to a Kafka broker in a cluster. Host aliases are useful when you need to refer to a broker using a name other than its actual hostname.

IP: Enter the IP.

Host Names: Enter the host names.

Please Note: Please click the Save Task In Storage icon to save the configuration for the dragged writer task.

Write Python scripts and run them flawlessly in the Jobs.

This feature allows users to write their own Python script and run their script in the Jobs section of Data Pipeline module.

Before creating the Python Job, the user has to create a project in the Data Science Lab module under Python Environment. Please refer the below image for reference:

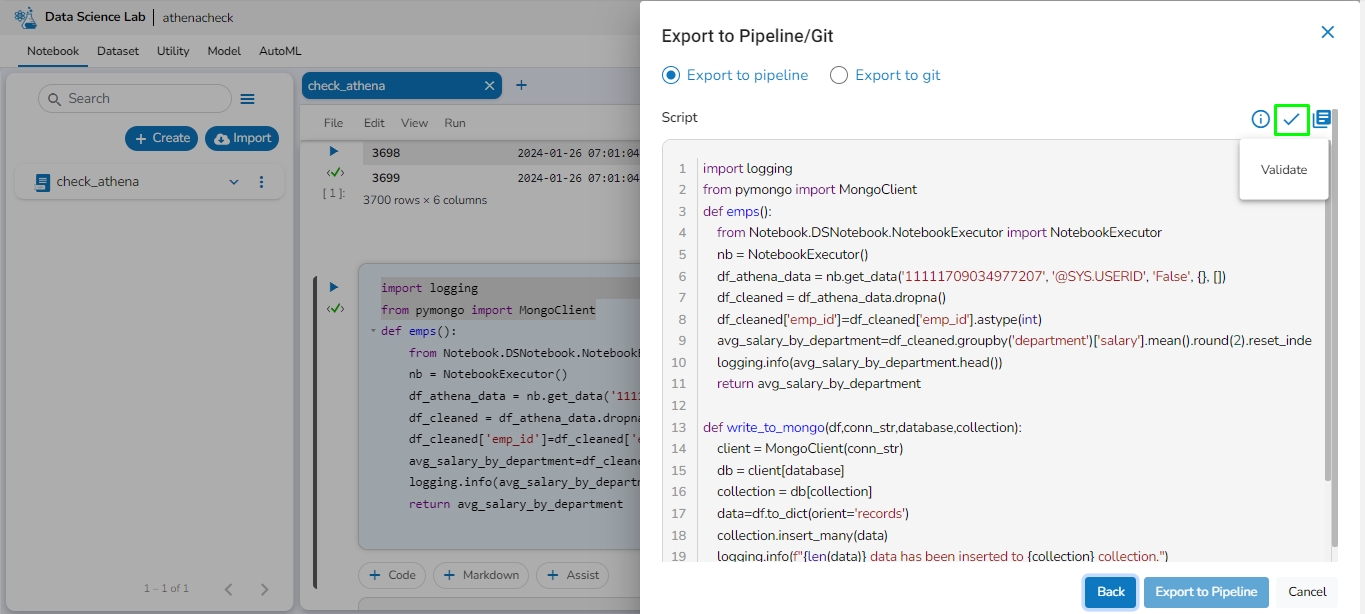

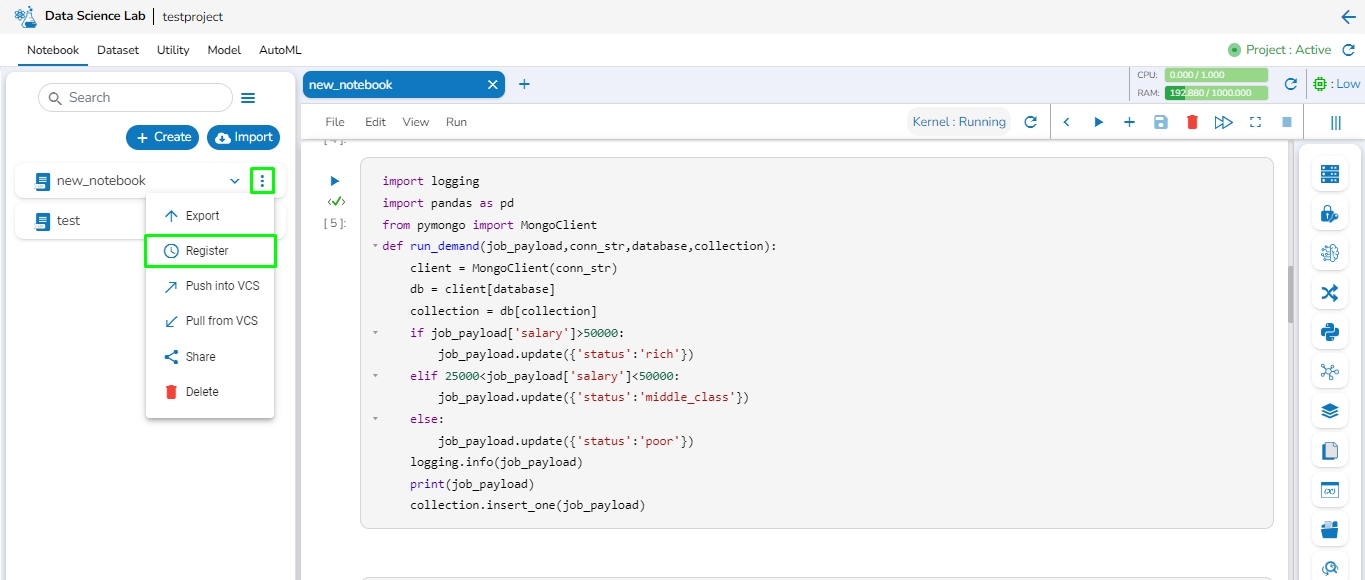

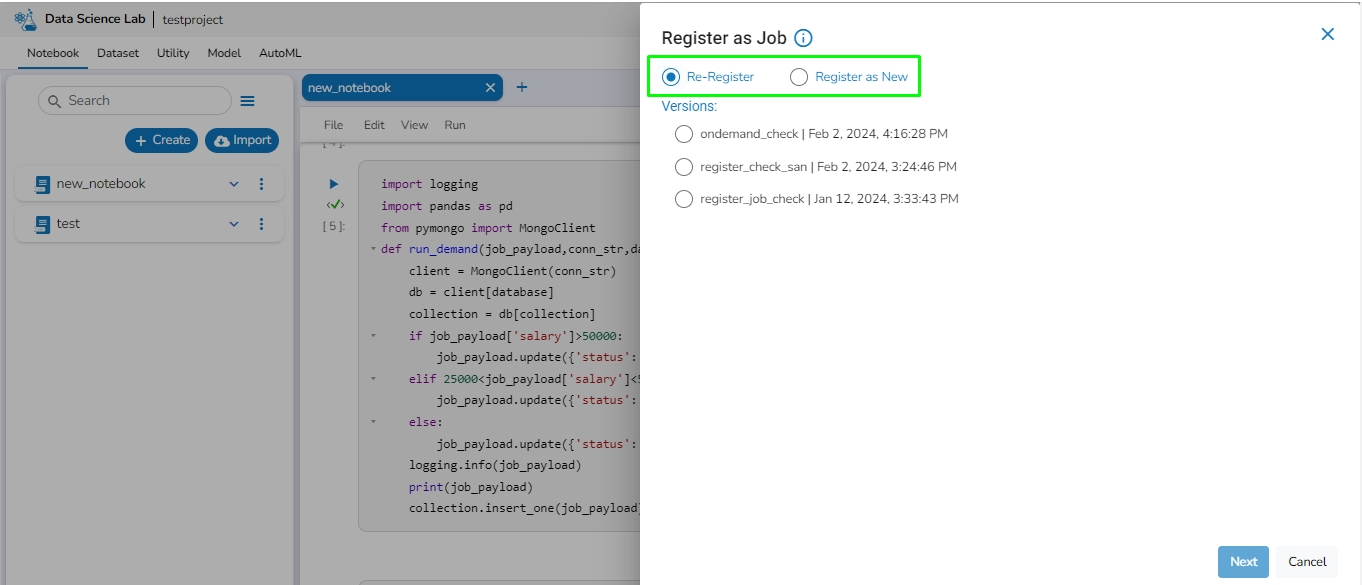

After creating the Data Science project, the users need to activate it and create a Notebook where they can write their own Python script. Once the script is written, the user must save it and export it to be able to use it in the Python Jobs.

Navigate to the Data Pipeline module homepage.

Open the pipeline homepage and click on the Create option.

The new panel opens from right hand side. Click on Create button in Job option.

Enter a name for the new Job.

Describe the Job (Optional).

Job Baseinfo: Select Python Job from the drop-down.

Trigger By: There are 2 options for triggering a job on success or failure of a job:

Success Job: On successful execution of the selected job the current job will be triggered.

Failure Job: On failure of the selected job the current job will be triggered.

Is Scheduled?

A job can be scheduled for a particular timestamp. Every time at the same timestamp the job will be triggered.

Job must be scheduled according to UTC.

Docker Configuration: Select a resource allocation option using the radio button. The given choices are:

Low

Medium

High

Provide the resources required to run the python Job in the limit and Request section.

Limit: Enter max CPU and Memory required for the Python Job.

Request: Enter the CPU and Memory required for the job at the start.

Instances: Enter the number of instances for the Python Job.

Click the Save option to save the Python Job.

The Python Job gets saved, and it will redirect the users to the Job Editor workspace.

Check out the below given demonstration configure a Python Job.

Once the Python Job is created, follow the below given steps to configure the Meta Information tab of the Python Job.

Project Name: Select the same Project using the drop-down menu where the Notebook has been created.

Script Name: This field will list the exported Notebook names which are exported from the Data Science Lab module to Data Pipeline.

External Library: If any external libraries are used in the script the user can mention it here. The user can mention multiple libraries by giving comma (,) in between the names.

Start Function: Here, all the function names used in the script will be listed. Select the start function name to execute the python script.

Script: The Exported script appears under this space.

Input Data: If any parameter has been given in the function, then the name of the parameter is provided as Key, and value of the parameters has to be provided as value in this field.

The on-demand python job functionality allows you to initiate a python job based on a payload using an API call at a desirable time.

Before creating the Python (On demand) Job, the user has to create a project in the Data Science Lab module under Python Environment. Please refer the below image for reference:

Please follow the below given steps to configure Python Job(On-demand):

Navigate to the Data Pipeline homepage.

Click on the Create Job icon.

The New Job dialog box appears redirecting the user to create a new Job.

Enter a name for the new Job.

Describe the Job(Optional).

Job Baseinfo: In this field, there are three options:

Spark Job

PySpark Job

Python Job

Select the Python Job option from Job Baseinfo.

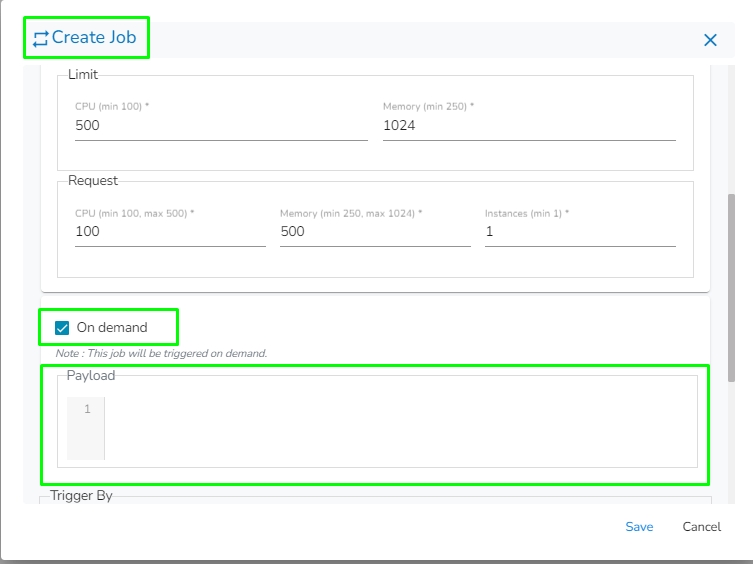

Check-in On demand option as shown in the below image.

Docker Configuration: Select a resource allocation option using the radio button. The given choices are:

Low

Medium

High

Provide the resources required to run the python Job in the limit and Request section.

Limit: Enter max CPU and Memory required for the Python Job.

Request: Enter the CPU and Memory required for the job at the start.

Instances: Enter the number of instances for the Python Job.

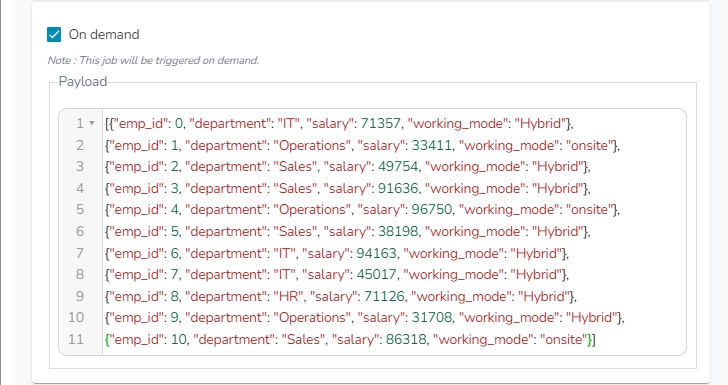

The payload field will appear once the "On Demand" option is checked. Enter the payload in the form of a JSON array containing JSON objects.

Trigger By: There are 2 options for triggering a job on success or failure of a job:

Success Job: On successful execution of the selected job the current job will be triggered.

Failure Job: On failure of the selected job the current job will be triggered.

Click the Save option to create the job.

A success message appears to confirm the creation of a new job.

The Job Editor page opens for the newly created job.

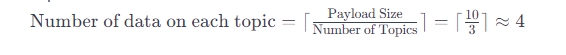

Based on the given number of instances, topics will be created, and the payload will be distributed across these topics. The logic for distributing the payload among the topics is as follows:

The number of data on each topic can be calculated as the ceiling value of the ratio between the payload size and the number of instances.

For example:

Payload Size: 10

Number of Topics=Number of Instances: 3

The number of data on each topic is calculated as Payload Size divided by Number of Topics:

In this case, each topic will hold the following number of data:

Topic 1: 4

Topic 2: 4

Topic 3: 2 (As there are only 2 records left)

In the On-Demand Job system, the naming convention for topics is based on the Job_Id, followed by an underscore (_) symbol, and successive numbers starting from 0. The numbers in the topic names will start from 0 and go up to n-1, where n is the number of instances. For clarity, consider the following example:

Job_ID: job_13464363406493

Number of instances: 3

In this scenario, three topics will be created, and their names will follow the pattern:

Please Note:

When writing a script in DsLab Notebook for an On-Demand Job, the first argument of the function in the script is expected to represent the payload when running the Python On-Demand Job. Please refer to the provided sample code.

job_payload is the payload provided when creating the job or sent from an API call or ingested from the Job trigger component from the pipeline.

Once the Python (On demand) Job is created, follow the below given steps to configure the Meta Information tab of the Python Job:

Project Name: Select the same Project using the drop-down menu where the Notebook has been created.

Script Name: This field will list the exported Notebook names which are exported from the Data Science Lab module to Data Pipeline.

External Library: If any external libraries are used in the script the user can mention it here. The user can mention multiple libraries by giving comma (,) in between the names.

Start Function: Here, all the function names used in the script will be listed. Select the start function name to execute the python script.

Script: The Exported script appears under this space.

Input Data: If any parameter has been given in the function, then the name of the parameter is provided as Key, and value of the parameters has to be provided as value in this field.

Python (On demand) Job can be activated in the following ways:

Activating from UI

Activating from Job trigger component

For activating Python (On demand) job from UI, it is mandatory for the user to enter the payload in the Payload section. Payload has to be given in the form of a JSON Array containing JSON objects as shown in the below given image.

Once the user configure the Python (On demand) job, the job can be activated using the activate icon on the job editor page.

Please go through the below given walk-through which will provide a step-by-step guide to facilitate the activation of the Python (On demand) Job as per user preferences.

Sample payload for Python Job (On demand):

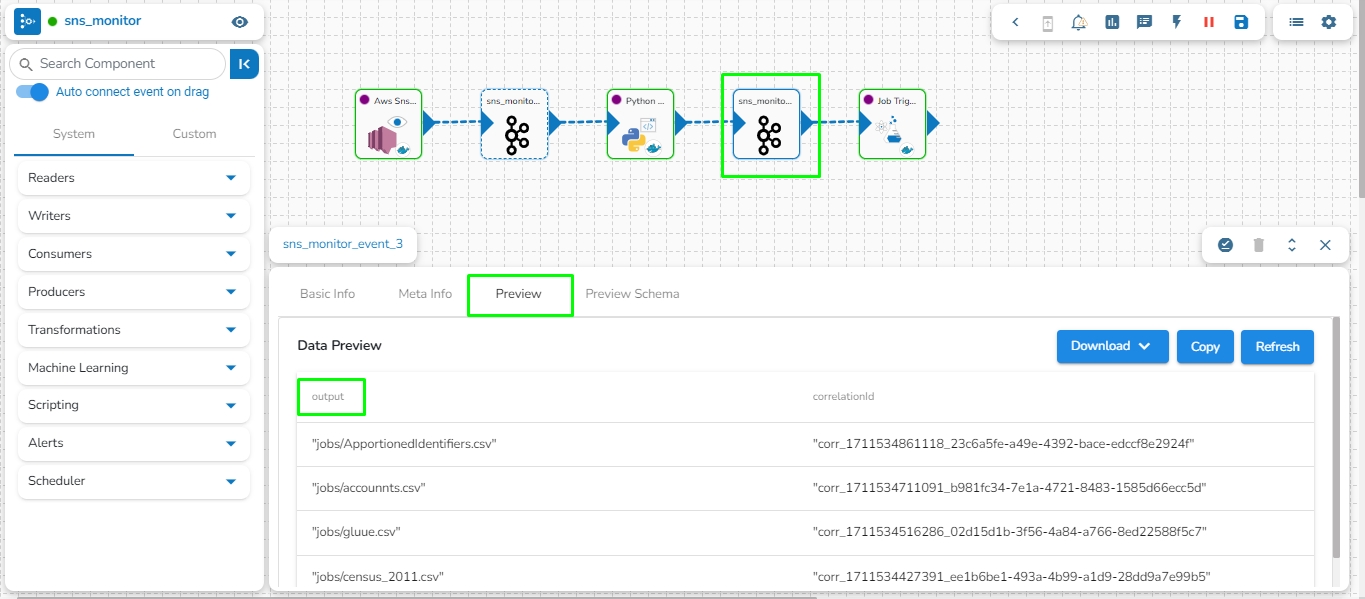

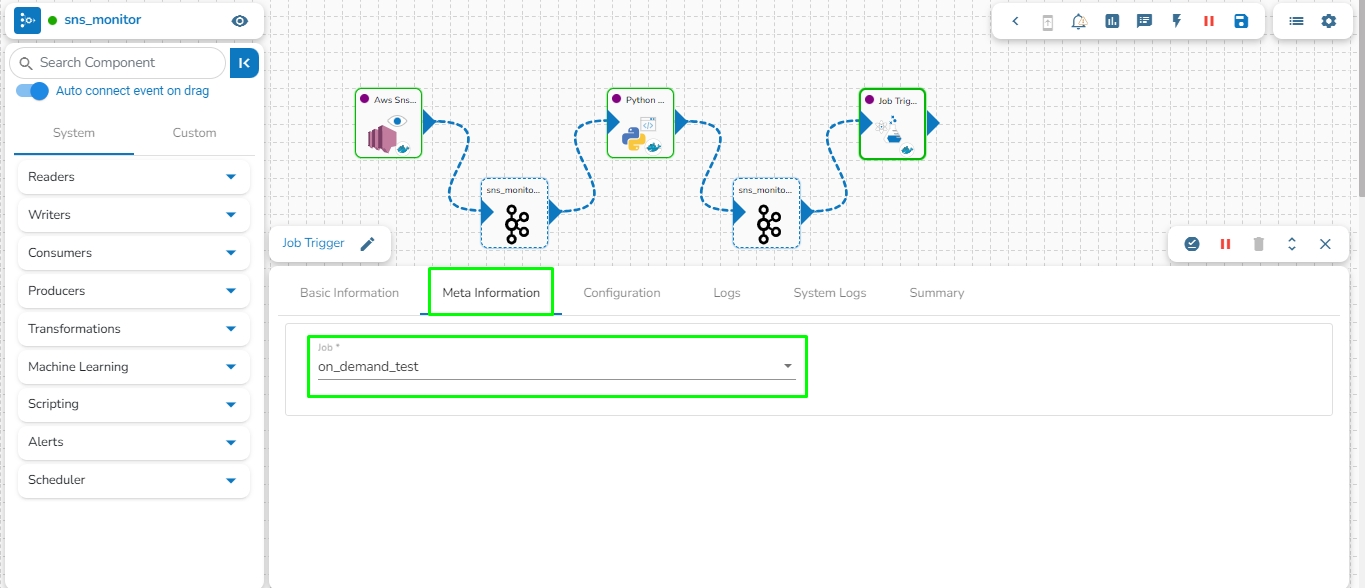

The Python (On demand) job can be activated using the Job Trigger component in the pipeline. To configure this, the user has to set up their Python (On demand) job in the meta-information component within the pipeline. The in-event data of the Job Trigger component will then be utilized as a payload in the Python (On demand) Job.

Please go through the below given walk-through which will provide a step-by-step guide to facilitate the activation of the Python (On demand) Job through Job trigger component.

Please follow the below given steps to configure Job trigger component to activate the Python (On demand) job:

Create a pipeline that generates meaningful data to be sent to the out event, which will serve as the payload for the Python (On demand) job.

Connect the Job Trigger component to the event that holds the data to be used as payload in the Python (On demand) job.

Open the meta-information of the Job Trigger component and select the job from the drop-down menu that needs to be activated by the Job Trigger component.

The data from the previously connected event will be passed as JSON objects within a JSON Array and used as the payload for the Python (On demand) job. Please refer to the image below for reference:

In the provided image, the event contains a column named "output" with four different values: "jobs/ApportionedIdentifiers.csv", "jobs/accounnts.csv", "jobs/gluue.csv", and "jobs/census_2011.csv". The payload will be passed to the Python (On demand) job in the JSON format given below.

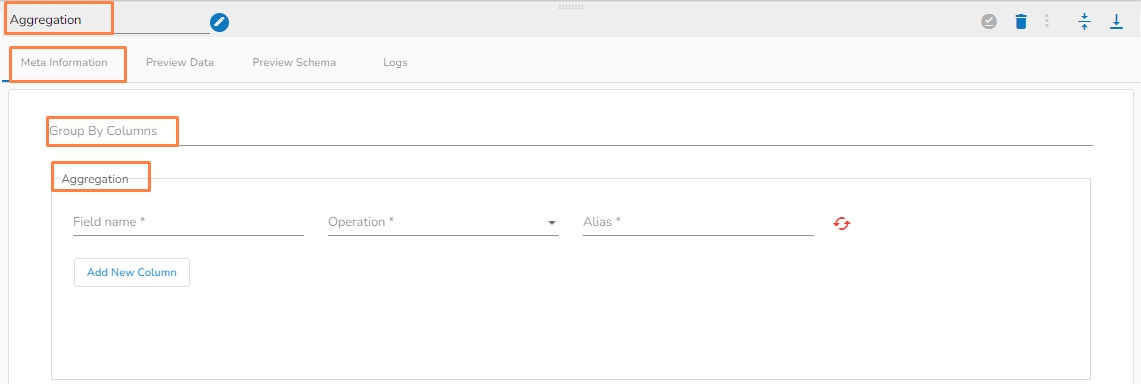

This page aims to explain the various transformation options provided on the Jobs Editor page.

The following transformations are provided under the Transformations section.

Alter Columns

Select Columns

Date Formatter

Query

Filter

Formula

Join

Aggregation

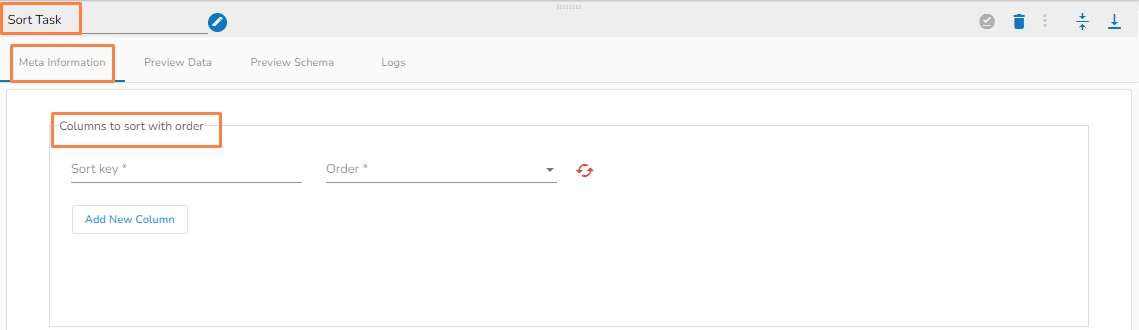

Sort Task

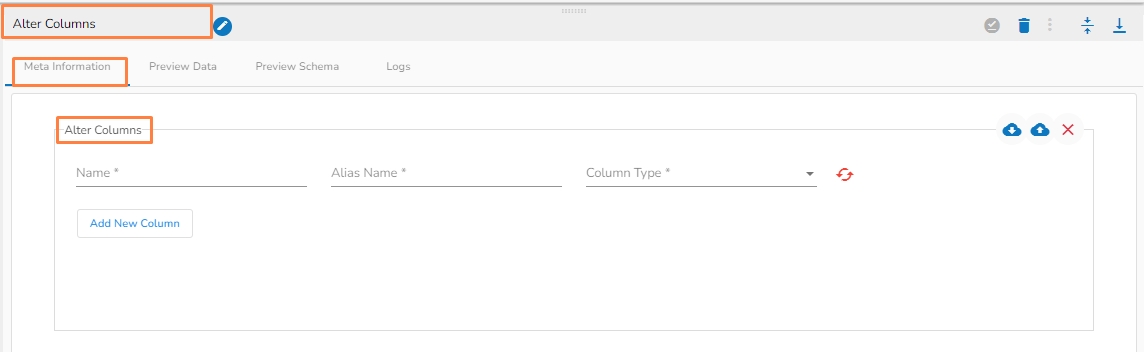

The Alter Columns command is used to change the data type of a column in a table.

Add the Column name from the Alter Columns tab where the datatype needs to be changed.

Name (*): Column name.

Alias Name(*) : New column name.

Column Type(*): Specify datatype from dropdown.

Add New Column: Multiple columns can be added for desired modification in the datatype.

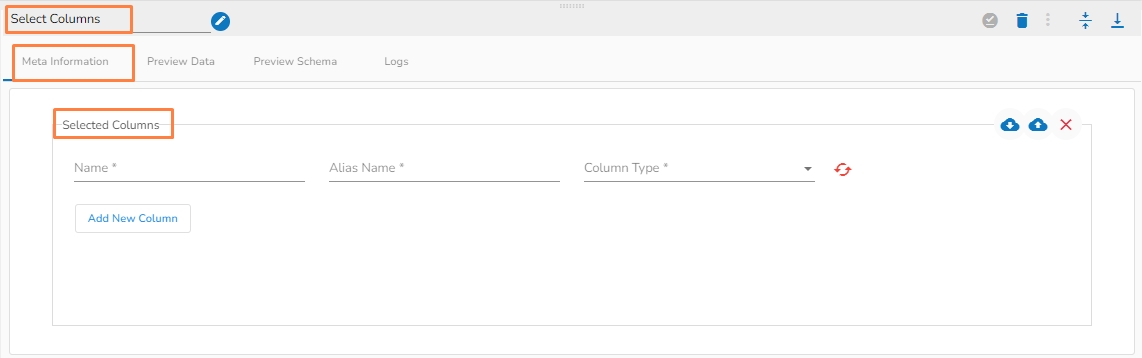

Helps to select particular columns from a table definition.

Name (*): Column name.

Alias Name(*) : New column name.

Column Type(*) : Specify datatype from dropdown.

Add New Column: Multiple columns can be added for the desired result

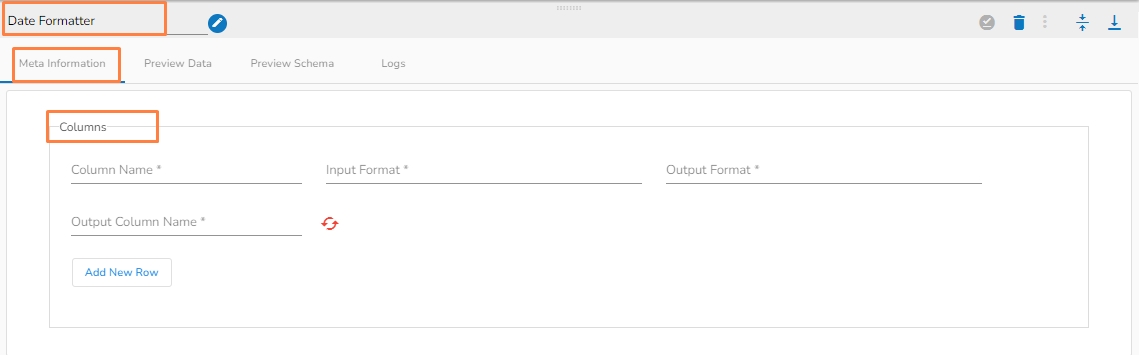

It helps in converting Date and Datetime columns to a desired format.

Name (*): Column name.

Input Format(*) : The function has total 61 different formats.

Output Format(*): The format in which the output will be given.

Output Column Name(*): Name of the output column.

Add New Row: To insert a new row.

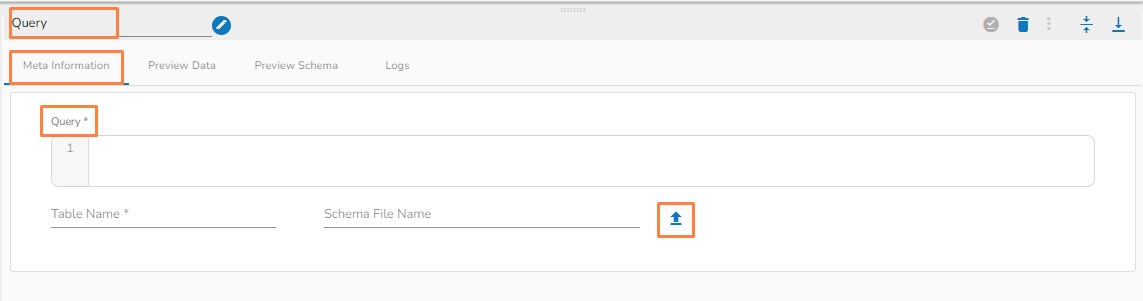

The Query transformation allows you to write SQL (DML) queries such as Select queries and data view queries.

Query(*): Provide a valid query to transform data.

Table Name(*): Provide the table name.

Schema File Name: Upload Spark Schema file in JSON format.

Choose File: Upload a file from the system.

Please Note: Alter query will not work.

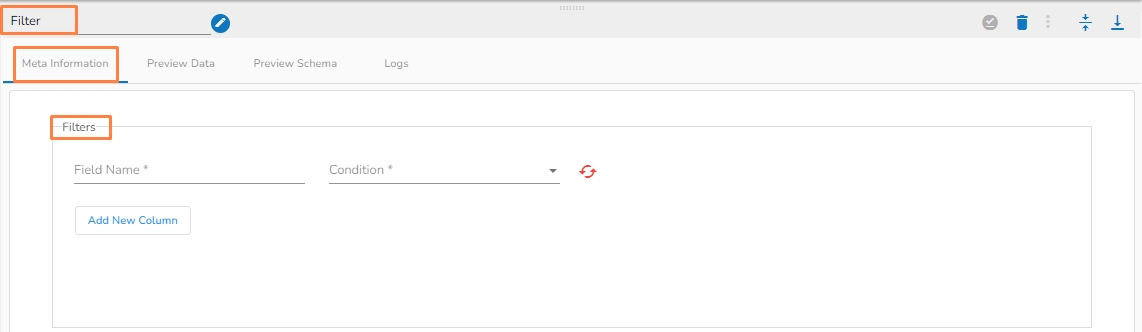

The Filter columns allow the user to filter table data based on different defined conditions.

Field Name(*): Provide field name.

Condition (*): 8 condition operations are available within this function.

Logical Condition(*)(AND/OR):

Add New Column: Adds a new Column.

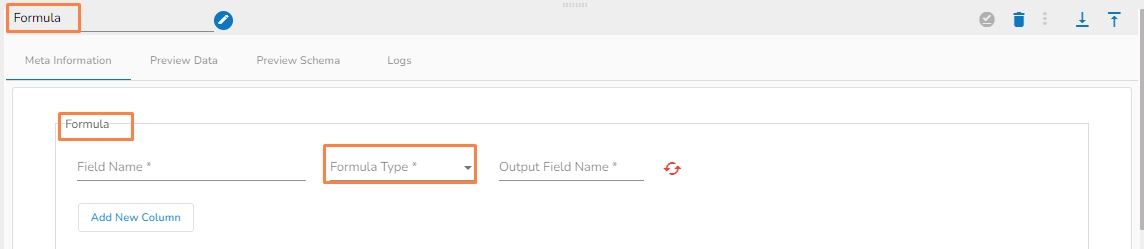

It gives computation results based on the selected formula type.

Field Name(*): Provide field name.

Formula Type(*): Select a Formula Type from the drop-down option.

Math (22 Math Operations)

String (16 String Operations )

Bitwise (3 Bitwise Operations)

Output Field Name(*): Provide the output field name.

Add New Column: Adds a new column.

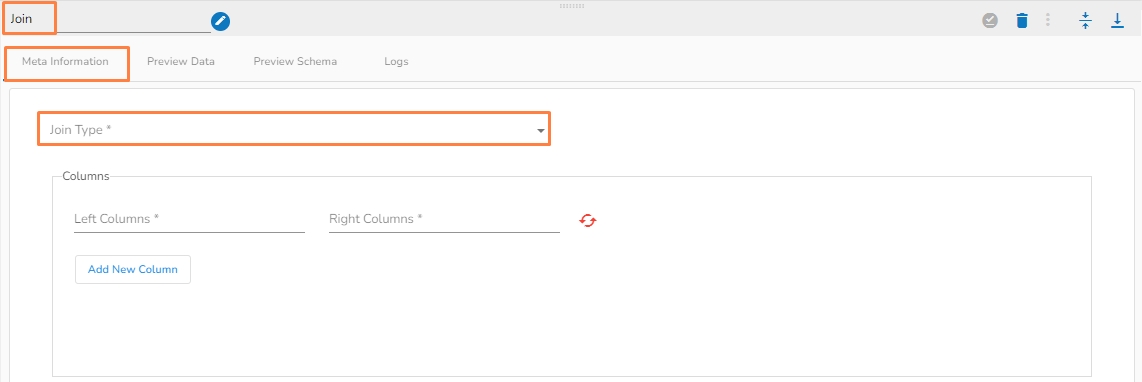

It joins 2 tables based on the specified column conditions.

Join Type (*): Provides drop-down menu to choose a Join type.